Mobile gaming studios scale in 2026 by shifting from install-volume growth to revenue-first strategies built on signal density, daily live ops, and cross-functional team alignment. Chasing installs without tracking what those users generate is the fastest way to burn budget in a market where over 95% of players churn within 30 days.

TLDR — Key Takeaways for Mobile Game Growth in 2026

- Stop leading with CPI (cost per install). Optimize toward revenue events, not install volume.

- Signal density governs everything. Consolidate channels until you can hit ad platform optimization thresholds.

- Attribution is broken — build around it. Align your team on shared assumptions and act on directionally useful data.

- Kill campaigns on back-end revenue, not front-line metrics. Click-through rates don’t pay salaries.

- Shorten your feedback loops. Find early proxy signals so you’re not waiting a month to learn what’s working.

- Treat creative testing as R&D. Fixed monthly budget, distinct hypotheses, collaborative sprints — not a lottery.

- Build live ops into the game from day one. No post-launch engagement plan means no retention curve.

- Break the silos between UA (user acquisition), product, and analytics. Misaligned KPIs are one of the most expensive growth problems in gaming.

- Report to your board in three layers. Core paid performance, experimentation portfolio, organic foundation.

- Not sure where to start? Talk to MAVAN. 360 audit, 90-day roadmap, six months of free analytics — no long-term commitment.

Mobile Gaming Is Making More Money Than Ever — So Why Are Mobile Gaming Studios Still Struggling?

The mobile gaming industry generated roughly $82 billion in consumer spending last year, according to Sensor Tower. Downloads stayed flat. Layoffs kept coming, with over 9,000 jobs lost in 2025 alone, per tracking by business development director Amir Satvat. And yet, studio after studio is still building growth strategies around the same metric that stopped working years ago: install volume.

We get it. Installs feel tangible. They go up, and it looks like progress. But the gap between what looks like progress and what actually drives sustainable revenue has never been wider — and the studios caught in that gap are the ones bleeding budget, burning runway, and scrambling to explain the numbers at their next board meeting.

To build something more useful than a trend report, we turned to Sam McLellan, MAVAN’s VP of Growth and a veteran of Zynga and Take-Two.

What follows is a growth playbook grounded in the operational reality of mobile gaming in 2026 — covering signal density, attribution, live ops, creative testing, board reporting, and the cross-functional alignment that separates studios scaling sustainably from studios just burning money. Whether you’re running a team at a midsize studio or trying to figure out where your first $15K in UA budget should go, this is built for you.

Why Are Mobile Gaming Studios Still Chasing Installs?

Mobile gaming studios still chase installs because the metric is visible, easy to report, and deeply embedded in how the industry has measured success for over a decade. But install volume alone doesn’t predict revenue, retention, or long-term viability — and in 2026, flat global downloads alongside rising revenue prove that the studios winning are the ones extracting more value per user, not acquiring more users at any cost.

The muscle memory is real. For years, the first question in every growth meeting was “What’s our CPI?” Sam McLellan, MAVAN’s VP of Growth, puts it bluntly: “I don’t care.” And he never really did — even during his time at Zynga, where the install obsession ran deep across the industry. His reasoning is simple and hard to argue with. Mobile gaming is a whale-based industry. A small number of players generate an enormous share of total revenue. Some individual players spend $30,000 a month. Others spend over a million dollars a year. When that’s the economic reality of your business, optimizing for the cheapest possible install is like optimizing for foot traffic at a luxury car dealership. Volume means nothing if the people walking through the door aren’t buyers.

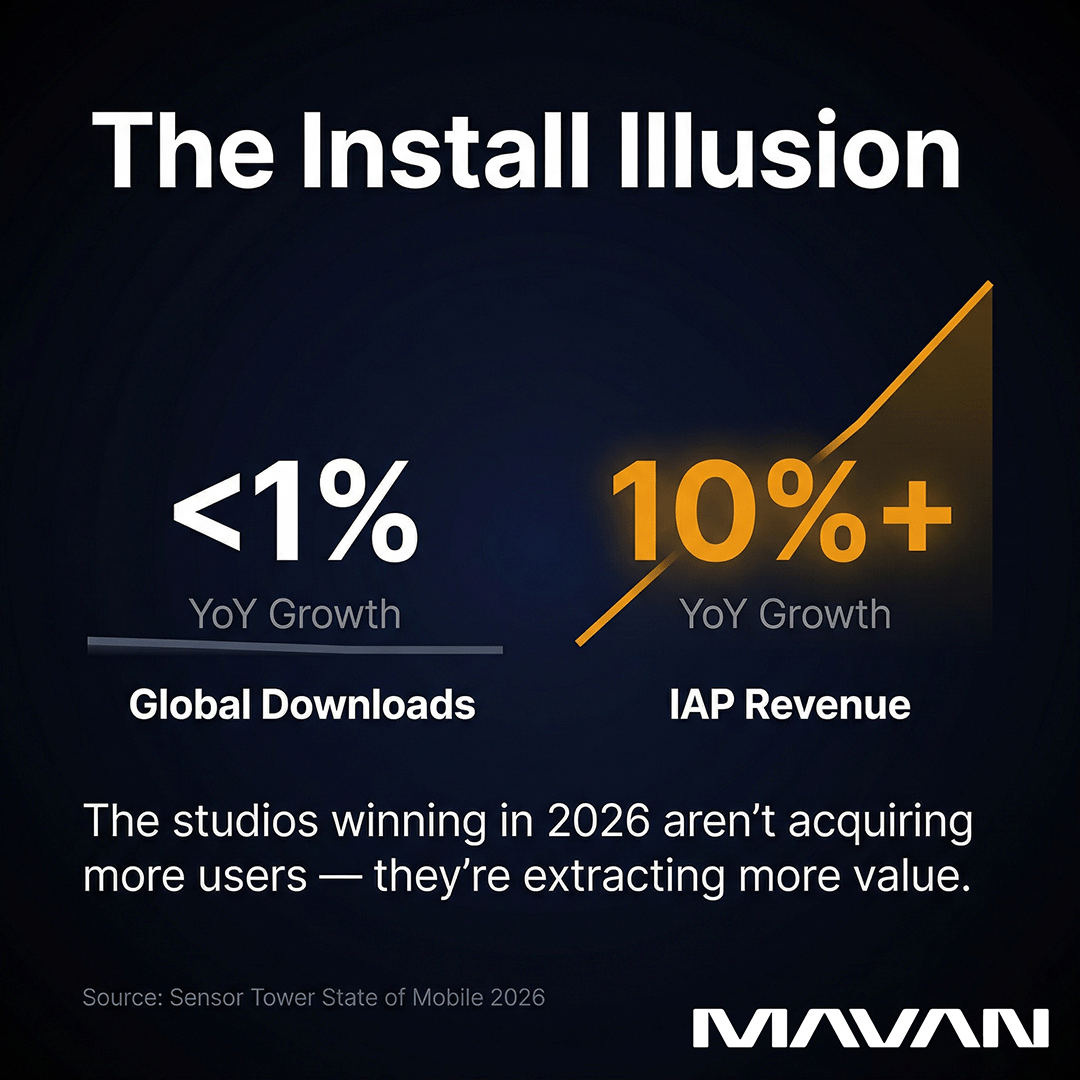

The data backs this up at the macro level. Sensor Tower’s State of Mobile 2026 report found that global downloads grew less than 1% year-over-year, while in-app purchase revenue surged over 10%. Deconstructor of Fun, analyzing the same data, called it a structural shift — not a blip. The era of growth-at-all-costs is over. The studios compounding value are doing it through monetization, retention, and smarter acquisition — not bigger top-of-funnel numbers.

And yet, the install-first mindset persists. Partly because it’s what most reporting dashboards surface first. Partly because boards and executives who aren’t deep in performance marketing still ask about it. And partly because the alternative — building a growth engine around revenue events, signal density, and cross-functional coordination — is harder to explain in a slide deck. It requires a different operating system, not just a different metric.

McLellan saw this tension play out at scale. Competitors like Machine Zone were running open-bid campaigns on specific zip codes, spending $60 per install. By any front-line CPI standard, those campaigns looked insane. But the revenue those users generated made every one of them profitable. The lesson isn’t that you should spend $60 per install. The lesson is that CPI alone tells you almost nothing about whether a campaign is working. If you’re still leading your growth conversations with “What’s our CPI?”, you’re answering the wrong question — and likely making decisions that leave real money on the table.

What Is Signal Density — and Why Is It the Biggest Lever in Mobile Gaming?

Signal density is the volume of meaningful user events — purchases, tutorial completions, subscription conversions — that you can feed back to ad platforms so their algorithms can find more people like your best users. It is the single most important factor in whether your paid campaigns can actually scale, because every major ad network requires a minimum threshold of conversion data before it can optimize effectively. If you can’t hit that threshold, your campaigns are flying blind.

Think of it this way. You launch a campaign on Meta or Google. You tell the platform, “Go find me people who will make a purchase in my game.” The platform says, “Great — show me what a purchaser looks like.” If you’re sending back 54 new payers a day, the algorithm has enough data to work with. It can pattern-match, target smarter, and bring you more users who behave like the ones already spending. But if you’re sending back three payers a day — because your game is early, your budget is small, or your optimization event is too deep in the funnel — the platform essentially shrugs. It doesn’t have enough signal to do its job. Your campaign stalls, your cost per acquisition spikes, and you’re left wondering why you can’t scale.

McLellan calls signal density “by far your biggest lever.” And it cuts both ways — it determines what you can optimize toward and how many channels you can realistically run at once.

How Does Signal Density Affect Your Channel Strategy?

This is where budget and ambition collide. A studio spending $15K a month on UA simply cannot spread that budget across five channels and expect any of them to reach the conversion thresholds required for optimization. The math doesn’t work. If each platform needs, say, 50 conversion events per campaign per week to optimize properly, then a small budget split five ways will starve every single campaign. Apple’s SKAN (SKAdNetwork) framework adds pressure from the measurement side, too: its crowd anonymity tiers withhold granular data from campaigns that don’t generate enough install volume.
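To make that arithmetic concrete, here is a minimal Python sketch. Only the $15K monthly budget and the 50-events-per-week rule of thumb come from the text. The $40 cost per optimization event is a hypothetical placeholder, and `weekly_events_per_channel` and `max_viable_channels` are illustrative names, not any ad platform’s API. The sketch assumes an even budget split and a cost per event that stays constant as spend changes, which real auctions don’t guarantee.

```python
# Inputs: the $15K budget and 50-event threshold come from the text;
# COST_PER_EVENT is a hypothetical placeholder.
MONTHLY_BUDGET = 15_000       # USD per month on UA
COST_PER_EVENT = 40.0         # hypothetical cost per optimization event (USD)
WEEKLY_THRESHOLD = 50         # events per campaign per week (rule of thumb)
WEEKS_PER_MONTH = 52 / 12     # about 4.33


def weekly_events_per_channel(budget, channels, cost_per_event):
    """Optimization events each channel generates per week, assuming an even
    budget split and a constant cost per event."""
    weekly_budget = budget / WEEKS_PER_MONTH
    return weekly_budget / channels / cost_per_event


def max_viable_channels(budget, cost_per_event, threshold=WEEKLY_THRESHOLD):
    """Largest even split in which every channel still clears the threshold."""
    channels = 0
    while weekly_events_per_channel(budget, channels + 1, cost_per_event) >= threshold:
        channels += 1
    return channels


for n in (1, 2, 5):
    print(n, round(weekly_events_per_channel(MONTHLY_BUDGET, n, COST_PER_EVENT), 1))
# 1 86.5
# 2 43.3
# 5 17.3
print(max_viable_channels(MONTHLY_BUDGET, COST_PER_EVENT))  # 1
```

Under these assumptions, the budget keeps exactly one channel above threshold. Split five ways, it leaves each channel with about 17 events a week, well short of the 50 each campaign needs.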

The practical move, McLellan explains, is to consolidate. Start with one channel. Maybe just Google. Maybe just Meta. Get enough signal flowing through a single platform to hit those thresholds, prove out your unit economics, and build confidence in your lifetime value (LTV) model. Then, as budget grows, expand deliberately, with enough density behind each new channel to make it viable.

For larger studios launching with seven- or eight-figure budgets, the calculus flips. Now you have enough signal to run a diversification strategy from day one. You can do geo-clustering — grouping high-performing countries together and bidding more aggressively on them. You can dedicate budget to creative testing across multiple platforms. You can run experimental channels alongside your core performers. But even at that scale, signal density still governs the architecture. Every channel needs enough data flowing through it, or it’s just noise.
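Geo-clustering can be sketched the same way. The snippet below is a hypothetical illustration. It assumes you already have spend and attributed revenue per country for a common measurement window. The ROAS cutoffs (1.0 and 0.6), the three-tier structure, the `country_a`-style labels, and all figures are placeholders that show the mechanics. They are not recommended values or real market data.

```python
from dataclasses import dataclass


@dataclass
class GeoResult:
    country: str    # country or geo label (placeholders below)
    spend: float    # USD spent in the measurement window
    revenue: float  # revenue attributed to that spend, same window


def cluster_geos(results, tier1_roas=1.0, tier2_roas=0.6):
    """Group countries into bid tiers by ROAS (revenue / spend).
    The cutoffs are hypothetical placeholders, not benchmarks."""
    clusters = {"tier1": [], "tier2": [], "tier3": []}
    for r in results:
        roas = r.revenue / r.spend if r.spend else 0.0
        if roas >= tier1_roas:
            clusters["tier1"].append(r.country)
        elif roas >= tier2_roas:
            clusters["tier2"].append(r.country)
        else:
            clusters["tier3"].append(r.country)
    return clusters


# Invented figures for four placeholder geos.
sample = [
    GeoResult("country_a", 10_000, 12_000),
    GeoResult("country_b", 8_000, 9_000),
    GeoResult("country_c", 5_000, 3_500),
    GeoResult("country_d", 3_000, 900),
]
print(cluster_geos(sample))
# {'tier1': ['country_a', 'country_b'], 'tier2': ['country_c'], 'tier3': ['country_d']}
```

In practice, each tier would run as its own campaign, with the more aggressive bids reserved for tier 1. Grouping geos this way, rather than running one campaign per country, also keeps more signal flowing into each campaign.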

What Optimization Event Should You Choose?

This is one of the most consequential decisions a growth team makes, and it’s easy to get wrong. The instinct is to optimize toward the event closest to revenue — a purchase, a subscription conversion, a high-value in-app transaction. And if you have the volume to support that, you should. But many studios don’t, especially early on.

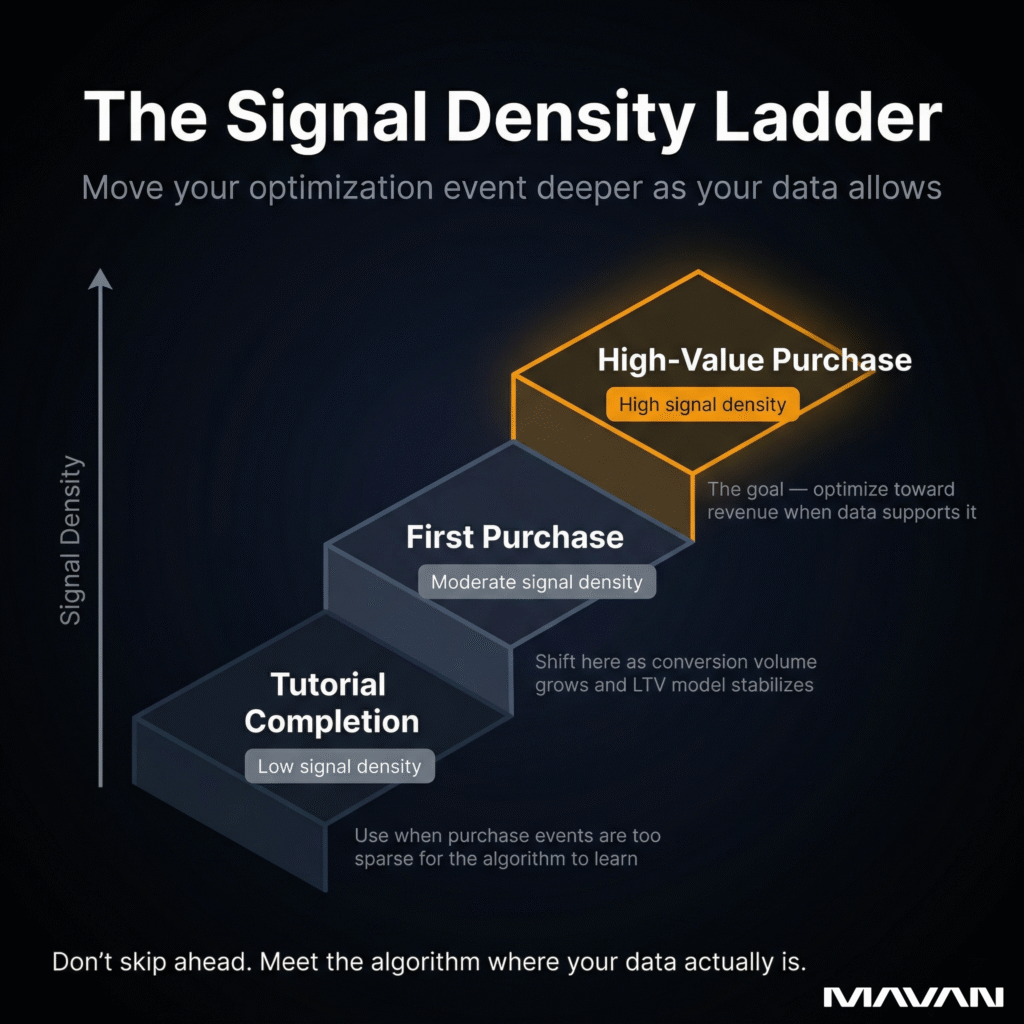

McLellan’s guidance is pragmatic. If you can’t generate enough purchase events to feed the algorithm, find an interim event — something earlier in the funnel that still correlates with long-term value. Tutorial completion, for instance. It’s not a revenue event, but it signals a semi-retained user. You can monitor the conversion rate from that event to eventual purchase, and scale with reasonable confidence while you build toward a deeper optimization point. As budget grows and more users convert, you move that event further down the funnel — from tutorial completion to first purchase to high-value purchase. Each step unlocks more precision, but only if the signal density supports it.

The mistake studios make is trying to skip ahead. They optimize toward a $50 purchase event when they’re only generating a handful a week. The platform can’t learn from that. It needs volume to find patterns. Meet the algorithm where your data actually is — then graduate forward as your density grows.
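The graduation logic reduces to a simple rule: optimize toward the deepest funnel event that still clears your volume threshold. Here is a minimal sketch, assuming a 50-events-per-week threshold and made-up weekly counts. The event names are hypothetical; substitute whatever your analytics actually logs. The sketch also reports the proxy-to-purchase conversion rate McLellan suggests monitoring, assuming both counts describe the same cohort of users.

```python
# Funnel events ordered shallow -> deep, with hypothetical weekly counts.
FUNNEL = [
    ("tutorial_complete", 900),
    ("first_purchase", 60),
    ("high_value_purchase", 8),
]


def pick_optimization_event(funnel, weekly_threshold=50):
    """Return the deepest event whose weekly volume clears the threshold, or None."""
    chosen = None
    for name, weekly_count in funnel:
        if weekly_count >= weekly_threshold:
            chosen = name
    return chosen


def step_conversion(funnel, from_event, to_event):
    """Share of users reaching `from_event` who also reach `to_event`.
    Assumes both counts describe the same cohort of users."""
    counts = dict(funnel)
    return counts[to_event] / counts[from_event]


print(pick_optimization_event(FUNNEL))  # first_purchase
print(f"{step_conversion(FUNNEL, 'tutorial_complete', 'first_purchase'):.1%}")  # 6.7%
```

Because counts shrink as you move deeper into the funnel, the loop settles on the deepest qualifying event. Once `high_value_purchase` volume crosses the threshold, the same rule graduates you to it without any other change.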

Is Mobile Game Attribution Still Broken in 2026?

Yes, and it probably always will be, at least partly. No attribution system in mobile gaming today is fully reliable. SKAN is delayed and aggregated. MMPs (mobile measurement partners) rely on probabilistic models that are, as Geeklab’s 2026 attribution analysis puts it, educated guesses. Fingerprinting is fading. The studios that scale aren’t the ones waiting for a perfect system. They’re the ones who accept the flaws, pick a framework, and build discipline around it.

McLellan doesn’t sugarcoat this. His take is that every attribution system is “pretty flawed” — and that the productive response is to take certain assumptions as truth and then work within that framework. That might sound uncomfortable if you’re used to deterministic, device-level tracking from the pre-ATT era. But that era is gone, and the studios still mourning it are the ones falling behind.

The practical question isn’t “Which system is right?” It’s “Which system gives me enough usable signal to make decisions — given my scale, my budget, and my goals?”

Why Do Product Teams and Marketing Teams See Attribution Differently?

This is one of the most underappreciated friction points inside gaming studios, and McLellan flags it directly. Product teams love granularity. They want to examine a specific A/B test served to a narrow audience segment in a specific geography, sliced six different ways. And they should — that level of detail is exactly how you improve a product.

But marketing can’t operate at that resolution. When product runs a micro-targeted test on a tiny slice of users, the attribution system might register two events from that campaign. Two. The ad platform looks at that and says, effectively, “I’ve got nothing to work with.” It ignores the data entirely. So marketing has to find ways to group events together — to aggregate enough signal that the system can actually learn from it. That means broader optimization events, wider audience targets, and less surgical precision than product would prefer.

Neither side is wrong. They’re just working at fundamentally different levels of zoom. The problem shows up when they don’t talk to each other about it — when product makes changes that fragment the data marketing depends on, or when marketing runs campaigns that flood the product with traffic that behaves nothing like the core user base. We see this constantly, and it’s one of the first things we look for when we come in to audit a studio’s growth operations.

What Does a Workable Attribution Setup Actually Look Like?

It looks like compromise — informed, deliberate compromise. Industry analysis from RevenueCat and others points toward a hybrid model: use SKAN as your compliance baseline and campaign-level measurement layer, keep an MMP for Android and cohort reporting, and layer in creative-level attribution where you can. No single tool will give you the full picture. The goal is overlapping lenses that, together, give you enough confidence to make spending decisions.

Signal density plays a role here, too. To unlock SKAN’s more granular data tiers — the fine-grained conversion values that actually tell you something useful — you need enough install volume per campaign to meet Apple’s crowd anonymity thresholds. If you can’t meet those thresholds, your data gets lumped together or suppressed entirely. This is why McLellan emphasizes consolidating campaign structures. Stop running hyper-segmented campaigns like “Male, 18–24, Texas.” That level of targeting dilutes your data and keeps you locked at the lowest anonymity tier. Broaden your targeting, maximize signal density per campaign, and let the platform’s algorithm do the segmentation work it was designed to do.

The studios getting attribution right in 2026 aren’t the ones with the fanciest tech stack. They’re the ones with the clearest internal agreement on which assumptions they’re treating as truth — and the discipline to act on imperfect data rather than waiting for data that will never be perfect.

How Do You Kill a Campaign That’s Spending Real Money?

You kill a campaign when the back-end revenue data says it won’t pay for itself within your target payback window — regardless of how good the front-line metrics look. The decision framework should always be anchored to revenue, not clicks, not free trials, not interaction rates. If the money coming in doesn’t justify the money going out within the time horizon your model defines, the campaign needs to be cut or fundamentally restructured.

That sounds clean on paper. In practice, it’s one of the hardest calls in user acquisition — because the front-line metrics are often screaming “keep going” while the back-end truth is subtly saying “stop.”

When Front-Line Metrics Lie

McLellan lived this firsthand. He was working on a subscription-based app that had been split out from a larger product — a deliberate move to isolate its data, build a clean audience around it, and scale it with its own dedicated campaigns. The front-line numbers were phenomenal. Click-through rates were strong. Over 40% of incoming traffic was starting a free trial, well above benchmarks for the company’s other products. By every surface-level indicator, this was a winner.

Then the free trials started expiring. At the 30-day mark, 85% or more of those trial users weren’t converting to paid. The actual revenue — the number that pays salaries and justifies budget — was nowhere close to making the campaigns profitable. Every front-line metric said grow. The only metric that mattered said no.

The team’s response was instructive. They couldn’t optimize toward free trial starts, because that event — despite its volume — had almost no correlation with revenue. So they shortened the trial. First to 14 days, then to one week. Not because a shorter trial was better for the consumer, but because it compressed the feedback loop. Instead of waiting 30 days and burning a month’s worth of budget before learning a campaign was unprofitable, they could get a read in seven days. That faster signal allowed them to make spending decisions in near-real-time instead of in hindsight.

What’s the Actual Decision Framework?

It starts with your target LTV curve and your ROAS (return on ad spend) floor. You build a model around your monetization — how much revenue the average acquired user generates over 7, 14, 30, 90 days — and you set a breakeven threshold. If a campaign isn’t tracking toward that breakeven within the defined window, you have a decision to make.
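Here is a minimal sketch of that model. It assumes you have cumulative revenue per acquired user at a few day checkpoints and a blended cost to acquire each user. The curve values, the $2.50 cost, and the function names are hypothetical. Because the sketch only looks at checkpoints, a campaign that breaks even on day 20 shows up as breaking even at day 30.

```python
# Hypothetical cumulative revenue per acquired user (USD) at each day checkpoint.
LTV_CURVE = {7: 1.20, 14: 1.90, 30: 2.80, 90: 4.10}


def roas_at(day, ltv_curve, cost_per_user):
    """ROAS at a checkpoint: cumulative revenue per user / cost to acquire them."""
    return ltv_curve[day] / cost_per_user


def breakeven_day(ltv_curve, cost_per_user):
    """First checkpoint where cumulative revenue covers acquisition cost, else None."""
    for day in sorted(ltv_curve):
        if ltv_curve[day] >= cost_per_user:
            return day
    return None


cost = 2.50  # hypothetical blended cost per acquired user (USD)
print(breakeven_day(LTV_CURVE, cost))                      # 30
print(f"{roas_at(7, LTV_CURVE, cost):.0%} ROAS at day 7")  # 48% ROAS at day 7
```

If the breakeven checkpoint falls inside your target payback window, the campaign is tracking toward breakeven. If it returns `None`, or lands beyond the window, you have a decision to make.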

But McLellan adds nuance here. Not every underperformer deserves an immediate kill. If a campaign is 10% below your target ROAS, that might still be a viable channel. It’s close. It could be seasonal. It could improve with creative iteration. That’s something you can frame for leadership or for the board — “This channel is slightly below our target, here’s why, and here’s our plan to close the gap.” That’s a responsible, evidence-based conversation.

What you can’t afford is a campaign that’s completely flat. No revenue signal. No path to breakeven. No sign that the users coming in will ever monetize at the level you need. That one gets killed — quickly and without sentimentality. The longer you let a dead campaign run, the more budget it siphons from channels that could actually perform.
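The framework above maps onto a small classifier. This is a sketch under stated assumptions. ROAS is attributed revenue divided by spend over your payback window, and the 10% tolerance mirrors McLellan’s example. Everything worse than that tolerance is treated as a kill. The article only describes the two ends (slightly below target versus completely flat), so where you draw the line between them is a judgment call, not something this code settles. Campaign names and figures are hypothetical.

```python
def campaign_decision(spend, revenue_in_window, target_roas, tolerance=0.10):
    """Classify a campaign against a ROAS target over the payback window.

    keep    -> at or above target ROAS
    iterate -> below target, but within `tolerance` (10% by default)
    kill    -> anything worse, including a flat campaign with no revenue
    """
    if spend <= 0:
        raise ValueError("spend must be positive")
    roas = revenue_in_window / spend
    if roas >= target_roas:
        return "keep"
    if roas >= target_roas * (1 - tolerance):
        return "iterate"
    return "kill"


# Hypothetical campaigns: (name, spend, revenue inside the payback window).
campaigns = [("core", 20_000, 23_000), ("close_call", 10_000, 9_300), ("flat", 5_000, 0)]
target = 1.0  # hypothetical target: break even within the window
for name, spend, revenue in campaigns:
    print(name, campaign_decision(spend, revenue, target))
# core keep
# close_call iterate
# flat kill
```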

How Do You Shorten the Feedback Loop?

This is the operational unlock most studios miss. The free trial example is one version of it, but the principle applies broadly. If your optimization event takes 30 days to materialize, you’re making every spending decision with month-old information. In a market where creative fatigue cycles are shortening and acquisition costs rose 12% year-over-year according to Aarki’s data in the SocialPeta 2026 whitepaper, month-old information is practically ancient.

The fix is finding proxy events — earlier signals that reliably predict the outcome you care about. Tutorial completion as a proxy for retention. First session length as a proxy for engagement depth. Day-one spend as a proxy for LTV. None of these are perfect, but they compress the time between spending money and knowing whether that money was well spent. And when you pair shorter feedback loops with disciplined signal density, you build a system that can learn and adapt fast enough to keep pace with a market that won’t wait for you.

How Should Studios Think About Creative Velocity Without Burning Out Their Audience?

Studios should treat creative velocity as a disciplined testing system, not a volume contest. Producing more ads faster — especially with AI — doesn’t automatically mean better performance. In fact, it may be accelerating the fatigue problem it’s supposed to solve. The studios that win on creative are the ones with a structured sprint process, a dedicated testing budget, and the humility to recognize that AI is a production tool, not an idea engine.

McLellan frames this as a potential chicken-and-egg problem. AI has made it dramatically faster and cheaper to produce ad variants. That’s real and valuable. But if every studio is using the same tools, feeding them the same briefs, and flooding the same platforms with the output, what’s actually different about any of it? The creative just becomes more of the same noise. Players see more ads, care less about them, and fatigue cycles shorten. The data points to structure as the fix. The SocialPeta 2026 whitepaper finds that structured creative refresh cycles improve performance stability by roughly 30%, while Aarki’s data shows that creative velocity is now a “controllable lever for growth,” but only if it’s managed intentionally.

Why AI-Generated Creative Isn’t Enough on Its Own

Here’s the part the AI evangelists tend to leave out. McLellan points to a pattern he sees constantly: someone announces, “I built X app in X days using AI.” Impressive. But where did the idea start? A human came up with the concept, the strategy, the angle. AI executed the production. That distinction matters enormously in creative.

If your competitor is using the same AI tools, feeding them the same types of prompts based on the same market research, the output will converge. AI doesn’t generate originality; it recombines what already exists. McLellan puts it plainly: “It’s a photocopy of everything that’s been done.” That’s useful for speed. It’s terrible for differentiation. And differentiation is the entire point of creative in a market where the average monthly number of mobile game advertisers exceeded 84,000 in 2025, a 22% year-over-year increase, according to SocialPeta. You are not competing for attention in a quiet room. You are shouting in a roaring stadium.

The practical answer is to use AI to accelerate production — generating variants, resizing assets, iterating on hooks — while reserving the strategic and conceptual work for humans. The best creative ideas still come from a group of people in a room riffing on something unexpected. AI can help you get those ideas into market faster. It cannot replace the room.

What Does a Well-Designed Creative Testing Sprint Look Like?

It starts with ground rules. McLellan recommends setting a fixed percentage of your total ad budget — monthly, not quarterly — dedicated purely to creative testing. Not leftover budget. Not whatever’s unspent at the end of the month. A deliberate, protected allocation that signals this is a real operational priority.

From there, the sprint should be collaborative. Bring in your UA team, your product people, your designers — ideally your client or stakeholders, too. Running creative in a vacuum introduces bias and blind spots. One person’s instincts will skew toward what they’ve seen work before. A group catches what an individual misses.

The weekly rhythm matters, too. Each week, you’re reviewing winners and losers, identifying patterns, and feeding insights back into the next round of concepts. That’s the opposite of what McLellan calls the “arena method,” where you throw a pile of ads into a campaign, let the platform pick winners, then repeat mindlessly. That approach treats creative testing as a lottery. A real sprint treats it as a learning system, where each cycle makes the next one smarter.

Here’s a practical framework for running creative velocity as a repeatable process:

- Set the budget floor. Dedicate a specific, non-negotiable percentage of monthly ad spend to creative testing. Treat it like R&D — because that’s what it is.

- Batch and brief together. Hold a weekly or biweekly creative session with cross-functional input. Define the concepts, hooks, and angles you want to test — before anyone opens an AI tool.

- Produce fast, but vary meaningfully. Use AI to generate variants quickly, but make sure each variant is testing a distinct hypothesis — a different hook, a different value proposition, a different emotional register. Ten versions of the same idea aren’t ten tests.

- Read the results honestly. A creative that wins this week might lose next week. Seasonality, platform algorithm shifts, and audience fatigue all play a role. Don’t declare permanent winners. Rotate losers back in later — McLellan notes they sometimes win the second time around.

- Separate signal from noise. Track performance at the concept level, not just the asset level. If three different executions of the same hook all underperform, the problem is the hook — not the thumbnail.
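The last point, judging hooks rather than individual assets, is easy to put into practice. Here is a minimal sketch. It assumes each asset is tagged with the concept or hook it tests, and that you can attribute spend and paying users at the asset level, which is often only approximate given the attribution limits discussed earlier. The tags and figures are hypothetical. Using cost per payer rather than click-through rate keeps the read anchored to revenue.

```python
from collections import defaultdict

# Hypothetical asset-level results: (concept, asset_id, spend_usd, payers).
results = [
    ("hook_a", "a1", 500.0, 10),
    ("hook_a", "a2", 600.0, 14),
    ("hook_b", "b1", 400.0, 3),
    ("hook_b", "b2", 500.0, 4),
    ("hook_b", "b3", 450.0, 3),
]


def cost_per_payer_by_concept(rows):
    """Roll asset-level spend and payers up to the concept (hook) level."""
    totals = defaultdict(lambda: [0.0, 0])  # concept -> [spend, payers]
    for concept, _asset_id, spend, payers in rows:
        totals[concept][0] += spend
        totals[concept][1] += payers
    return {
        concept: (spend / payers if payers else float("inf"))
        for concept, (spend, payers) in totals.items()
    }


ranked = sorted(cost_per_payer_by_concept(results).items(), key=lambda kv: kv[1])
for concept, cpp in ranked:
    print(f"{concept}: ${cpp:.2f} per payer")
# hook_a: $45.83 per payer
# hook_b: $135.00 per payer
```

All three `hook_b` executions underperform, so the concept-level view points at the hook itself rather than at any single asset.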

The goal isn’t to make the most ads. It’s to learn the fastest. Studios that treat creative testing as a structured discipline — not a production treadmill — will outlast the ones drowning their audience in volume.

What Does Live Ops Look Like When It’s a Real Growth System?

Live ops — the practice of continuously updating, event-cycling, and monetizing a game after launch — is the single biggest growth lever a studio can pull outside of paid marketing. It’s what keeps players in your game, what funds your ability to acquire more of them, and what separates titles that last years from titles that flame out in months. In 2026, live ops isn’t a differentiator. It’s a survival requirement. The studios treating it as “run an event every two weeks” are the ones watching their retention curves flatten while competitors build engagement engines that compound value daily.

McLellan is emphatic about this. Live ops is one of his first interview questions for any gaming hire — and has been for over a decade. His definition is broader than most. Not every live ops effort has to be revenue-generating. Some of it is pure retention — giving players a reason to open the app today, even if that session doesn’t produce a transaction. Login bonuses, guild contributions, collaborative builds, daily challenges — all of these are live ops. And all of them serve the larger growth system by keeping users active long enough to eventually monetize.

The data makes the stakes clear. Adjust reports that 84% of all mobile in-app purchase revenue in 2024 came from games using live ops, with 95% of studios now building or maintaining at least one live-service title. Meanwhile, Aarki’s data in the SocialPeta 2026 whitepaper shows that more than 95% of mobile game users churn within 30 days. Those two numbers together tell the whole story. Almost everyone leaves. The revenue comes from the ones who stay. Live ops is how you make them stay.

Why Does “More Live Ops” Sometimes Make Things Worse?

Here’s where it gets counterintuitive. McLellan’s biggest pet peeve isn’t studios doing too little live ops — it’s studios doing too much of it, badly. He points to a pattern especially common in Japanese and Korean mobile games: the player logs in, and every edge of the screen is exploding with offers, modals, sparkles, and notifications. Sixteen things pop up after completing a level. The player won something but doesn’t know where to claim it. There are a dozen different live ops systems running simultaneously, but they’re all variations on the same mechanic in different colors.

That approach makes money — McLellan is careful not to call it ineffective in raw revenue terms. But it creates a miserable experience for new players and a numbing one for existing players. The best live ops blends into the game’s natural rhythm so smoothly that players barely notice they’re being engaged. The worst live ops feels like walking through a casino lobby where every slot machine is screaming at you simultaneously. Some users will engage. Many will just want to get to the thing they came for and leave.

The Onboarding Gap Nobody Wants to Fund

There’s a related problem that McLellan calls out directly, and it’s one of the biggest missed opportunities in mobile gaming today. What happens when a player joins a game that’s been live for two years?

Take an extreme case like Destiny 2. A first-time player logs in and faces years of layered content, systems, and progression paths, with minimal guidance on where to start. The live ops that keeps veteran players engaged is the same live ops that overwhelms newcomers. There’s no clear on-ramp from “I just installed this” to “I understand how to play and why I should keep playing.” That player is going to YouTube or Reddit to figure it out, if they bother at all.

McLellan understands why studios don’t fix this. It’s an incredibly hard pitch internally. The retained players — the elder cohorts — are generating real LTV right now. They’re paying monthly subscriptions, buying season passes, making in-app purchases. Spending money to re-engineer the new-player experience is a bet on potential, while the elder cohorts represent certainty. In an industry dealing with widespread layoffs and shrinking budgets, asking an executive to fund speculative onboarding work over proven content for paying users is a tough sell.

But the cost of not doing it compounds silently. Every new player who bounces from a confusing first session is acquisition spend wasted. Your UA team paid to bring that person in. If the product can’t retain them because the onboarding experience was designed for a game that launched two years ago and has since added hundreds of hours of content — that’s a growth leak no amount of ad spend will fix.

How Do You Build Live Ops That Actually Functions as a Daily System?

The operational shift is moving from “we have a live ops calendar” to “live ops is how this game breathes.” That means a few things in practice.

First, it means building live ops into the game from day one — not bolting it on after launch. McLellan points to Monopoly GO as the clearest recent example. That game didn’t ship a bare product and add events later. It launched with live ops fully integrated into the core loop, and every major update since has layered on top of that foundation. That’s now the standard. Studios still building games with the “launch now, figure out live ops later” approach are guaranteeing themselves a frantic scramble the moment the game goes live.

Second, it means thinking about live ops in layers of visibility. Some systems should be overt — season passes, limited-time events, beginner offers. Players expect them and respond to them. But the most powerful live ops systems are the ones players don’t consciously register. Guild mechanics that create social obligation. Daily login rewards that build a habit loop. Progression systems that reward consistency without punishing absence. Mixpanel’s 2026 mobile gaming benchmarks found that overall in-game actions per player reached 642 — up 30% year-over-year — with the sharpest increases in regions where studios invested heavily in live ops content cycles. Players are doing more inside games than ever. The studios driving that engagement are the ones whose live ops feels less like a sales pitch and more like a reason to play.

Third, it means treating live ops as a cross-functional effort — not just a product team deliverable. What events you run, when you run them, and who you target them at should be informed by your UA data, your CRM segmentation, your monetization analytics, and your retention curves. If those teams aren’t talking to each other, your live ops is operating in a vacuum — and probably creating more friction than value.

Why Do UA and Product Teams Keep Fighting Each Other?

UA and product teams clash because their KPIs are structurally misaligned, their data needs operate at different resolutions, and most studios lack a unifying layer — a conductor — to make both functions work toward the same outcome. The problem isn’t talent. Studios often have exceptional people in both roles. The problem is that without cross-functional coordination, those talented people are optimizing in opposite directions.

McLellan uses an analogy he comes back to often: an orchestra. You can have a world-class violinist and a world-class drummer. Put them in the same room without a conductor, and you don’t get music. You get noise. Maybe jazz, he jokes — but not the kind of coordinated performance that actually moves the business forward.

Where Does the Friction Actually Come From?

It starts with what each team is measured on. Product is typically accountable for engagement depth, feature adoption, retention curves, and per-user monetization metrics. UA is accountable for volume, cost efficiency, ROAS, and hitting revenue targets from acquired traffic. On paper, those goals should complement each other. In practice, they often collide.

McLellan gives a concrete example. A UA team decides to test a new acquisition channel — say, a rewarded or incentivized network. The traffic that comes through behaves very differently from organic or standard paid users. These players might install the game, collect their reward, and never open it again. Retention is abysmal. The product manager sees this flood of low-quality traffic distorting their metrics and rightly asks, “What are you doing? These users aren’t sticking around for more than an hour.”

But the UA team has a reason for running that test. Maybe they’re exploring a cheaper acquisition source to supplement core channels. Maybe they’re testing whether that traffic can be segmented and retargeted later. Maybe they’re trying to hit a signal density threshold that requires raw volume in the short term. The intent is legitimate — but if no one communicated it to the product team beforehand, all they see is garbage traffic wrecking their numbers.

The same friction works in reverse. When product runs highly targeted A/B tests on narrow audience slices (a specific geography, a specific user segment, a specific feature variant), the data generated by those tests is often too thin for marketing to use. Two conversion events from a micro-targeted experiment give the ad platform nothing to optimize against. Marketing needs aggregated signal. Product needs granular insight. Without someone bridging that gap, both sides end up frustrated and working around each other.

What Happens When You Put a Conductor in the Room?

This is where the concept of growth, as a function rather than a buzzword, becomes essential. McLellan is deliberate about the distinction. When he’s carried a “growth” title rather than a “marketing” or “UA” title, the scope of the work changes. Growth sits above UA, product, live ops, data analytics, and CRM. It’s the umbrella function that ensures all those pieces are playing the same tune to the same beat.

That doesn’t mean growth overrides those individual functions. Product still owns product decisions. UA still owns campaign execution. But growth provides the shared context — the common operating picture — that prevents one team’s smart decision from becoming another team’s operational headache.

When we come into a studio, the first thing we do is a full 360-degree review. We talk to everybody — UA, product, analytics, CRM, live ops, leadership. And what we consistently find is that these teams aren’t just siloed. They’re often unaware of what the other teams are even measuring, let alone optimizing toward. Product doesn’t know what events UA is feeding to ad platforms. UA doesn’t know what product changes are coming that might shift conversion behavior. Analytics is sitting on insights that neither team has asked for because nobody realized the question existed.

McLellan doesn’t frame this as a failure of the people involved. It’s normal. It happens at companies of every size — and sometimes the biggest companies are the worst at it, because political dynamics around protecting individual team metrics make cross-functional transparency feel risky. Nobody wants to be the team whose data looks bad in someone else’s meeting.

How Does Breaking Silos Produce Measurable Results?

The impact shows up in places you wouldn’t expect if you’ve never seen it happen. When UA and product align on which events feed back to ad platforms, campaigns get better signal and costs come down. When live ops coordinates with CRM on event timing and audience segmentation, engagement lifts without increasing content production burden. When analytics surfaces a data integrity issue — say, event volumes that don’t match across platforms — and both UA and product agree to prioritize fixing it, every downstream decision gets more reliable.

McLellan is candid about one of the less glamorous realities: often the first finding from a 360 review is that the data itself is a mess. Events aren’t firing correctly. Attribution is Frankensteined together from years of rapid scaling. Dashboards tell different stories depending on which team built them. That’s unsexy work — nobody wants to spend their first 90 days cleaning up event taxonomy. But if the data foundation is a house of cards, nothing built on top of it will hold. You can’t scale effectively on fractured data, no matter how talented the people reading it are.

The 90-day roadmap we deliver after a 360 audit is built around this reality. It prioritizes the biggest levers — which are often structural fixes, not new campaigns. Get the teams talking. Get the data clean. Get consensus on which metrics actually matter. Then execute. That sequencing feels slow to studios under board pressure, but it’s the difference between building a growth engine and building a bigger version of the same broken system.

What Do Boards Actually Need to See From a Growth Team?

Boards need to see that you have a plan — a real one, with clear priorities, defined metrics, and enough strategic depth to show you’ve thought beyond “spend money, get installs.” Most studios get board reporting wrong in one of two ways: they’re either too vague and hand-wavy, or they’re too deep in the UA weeds. Both erode confidence. The sweet spot is a framework that communicates the growth strategy clearly, proves you’re measuring what matters, and shows you’re experimenting beyond a single channel.

McLellan has sat on both sides of this conversation — presenting to boards as an operator and helping studios prepare for board meetings as an advisor. The failure modes he describes are strikingly consistent.

The Two Ways Studios Get Board Reporting Wrong

The first is being too broad. The growth team shows up with something like, “We’re going to get eyeballs on the product. We’ll spend X budget and we’re hoping to get X installs.” That’s not a plan. That’s a wish list with a price tag. Boards exist partly to stress-test the plan. If there’s nothing to stress-test, they lose confidence — not just in the strategy, but in the team presenting it.

The second failure mode is the opposite: drowning the board in platform-level detail. Campaign names, channel-specific CPIs, ad network performance breakdowns, SKAN conversion schema nuances. The board doesn’t need to know you’re running AppLovin or that your Moloco campaigns are outperforming TikTok by 14% this week. They need to know how your budget is deployed at a category level, what the expected return looks like, and where you’re placing strategic bets. The details underneath should exist — and should be available if asked — but they don’t belong in the primary narrative.

What Do VCs and Boards Actually Want to Hear in 2026?

The funding landscape has shifted, and McLellan is blunt about what that means for growth teams seeking investment or board support. VCs aren’t interested in funding pure UA machines anymore. The days of raising a round and pouring the majority of it into paid acquisition are largely over. Dedicated UA funding models exist now — revenue-share arrangements where a financing partner fronts the ad spend, takes their cut first, and returns the remainder to the studio. VCs don’t want to compete with that model. They want to see something more.

Specifically, boards and investors in 2026 want to hear about product-led growth. What’s on the product roadmap that you believe will drive organic traction? What features are you building that create retention loops independent of ad spend? They want to see experimentation. Are you exploring content communities? Discord servers? Telegram groups? Are you building an organic presence that compounds over time? They want to know if AI is part of your distribution strategy — not just your production workflow. Can your game surface in AI-generated answers and recommendations?

McLellan frames it as a question of depth. If your entire growth story is “we put money out there and we got X back,” any agency can tell that story. Any freelancer with a Meta Business Manager login can execute that. You’re at this level — raising capital, presenting to a board — because you’re supposed to have more than that. The board wants to see the “what else.” What are you doing beyond paid acquisition that gives you a defensible growth advantage?

A Reporting Framework That Earns Trust

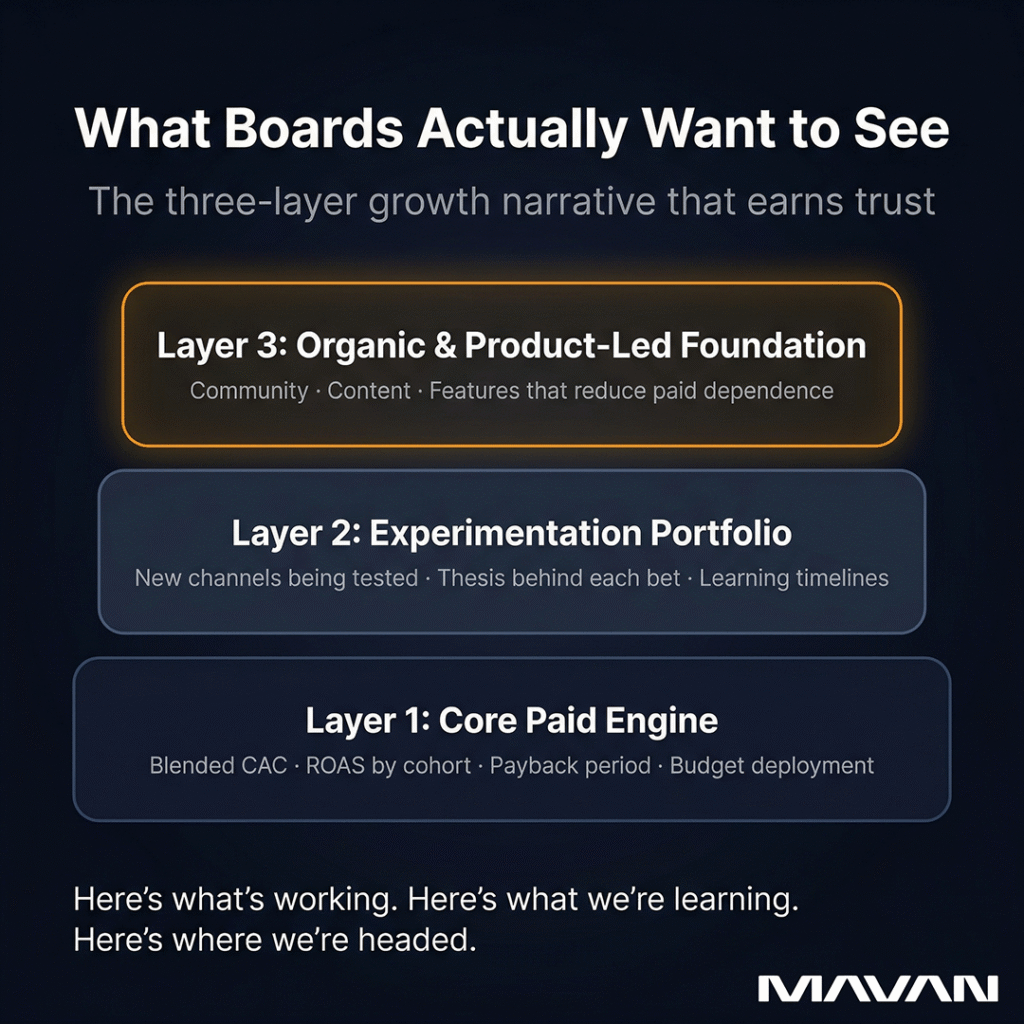

The studios that consistently earn board confidence tend to organize their growth narrative around three layers.

The first layer is the core paid engine. How is budget deployed? What are the headline efficiency metrics — blended CAC, ROAS by cohort window, payback period? Is the engine trending in the right direction? This should be concise. A few clear charts, a summary of what’s working and what’s being adjusted.

The second layer is the experimentation portfolio. What channels, strategies, or tactics are you testing that haven’t been proven yet? What’s the thesis behind each experiment? What’s the timeline for learning whether it works? This is where you demonstrate strategic thinking — the ability to find growth levers competitors haven’t found, or that are less competitive for your specific title.

The third layer is the organic and product-led foundation. What are you building — in the product, in community, in content — that reduces your dependence on paid acquisition over time? This is the long-term story. It’s what separates a growth team running campaigns from a growth team building a compounding asset.

When you present all three layers together, you tell a story the board can actually evaluate: here’s what’s working now, here’s what we’re learning, and here’s where we’re headed. That’s a plan. That earns continued investment.
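The first layer’s headline metrics are simple ratios, and agreeing on their exact definitions is part of the alignment work described earlier. The Python sketch below assumes one common set of definitions: blended CAC as total spend divided by all new users (paid plus organic), ROAS at day N as a cohort’s cumulative revenue by day N divided by its acquisition spend, and payback as the first tracked checkpoint day on which cumulative revenue covers spend. Whether revenue is gross or net of store fees, and which checkpoint days you track, are assumptions your team has to settle; the cohort numbers are hypothetical.

```python
from typing import Dict, Optional


def blended_cac(total_spend: float, paid_installs: int, organic_installs: int) -> float:
    """Total acquisition spend divided by all new users, paid and organic."""
    return total_spend / (paid_installs + organic_installs)


def roas_at(cumulative_revenue: Dict[int, float], spend: float, day: int) -> float:
    """Cohort ROAS at a checkpoint day: cumulative revenue by that day divided by spend."""
    return cumulative_revenue[day] / spend


def payback_day(cumulative_revenue: Dict[int, float], spend: float) -> Optional[int]:
    """First tracked checkpoint day on which cumulative revenue covers spend.

    Resolution is limited to the checkpoints you track: a result of 90 means
    payback happened somewhere between the previous checkpoint and day 90.
    """
    for day in sorted(cumulative_revenue):
        if cumulative_revenue[day] >= spend:
            return day
    return None  # the cohort has not paid back yet


if __name__ == "__main__":
    # Hypothetical cohort: $10,000 spend, cumulative revenue at checkpoint days.
    cohort_revenue = {1: 800.0, 7: 2_500.0, 30: 6_000.0, 90: 10_500.0, 180: 14_000.0}
    spend = 10_000.0
    print(f"Blended CAC: ${blended_cac(spend, 4_000, 1_000):.2f}")
    for d in (7, 30, 90):
        print(f"D{d} ROAS: {roas_at(cohort_revenue, spend, d):.0%}")
    print(f"Payback by checkpoint day: {payback_day(cohort_revenue, spend)}")
```

With these made-up numbers, D30 ROAS is 60% and the cohort pays back by the day-90 checkpoint, which is the kind of one-line summary a board can track quarter over quarter.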

What’s the Minimum Viable Growth Stack at Each Revenue Stage?

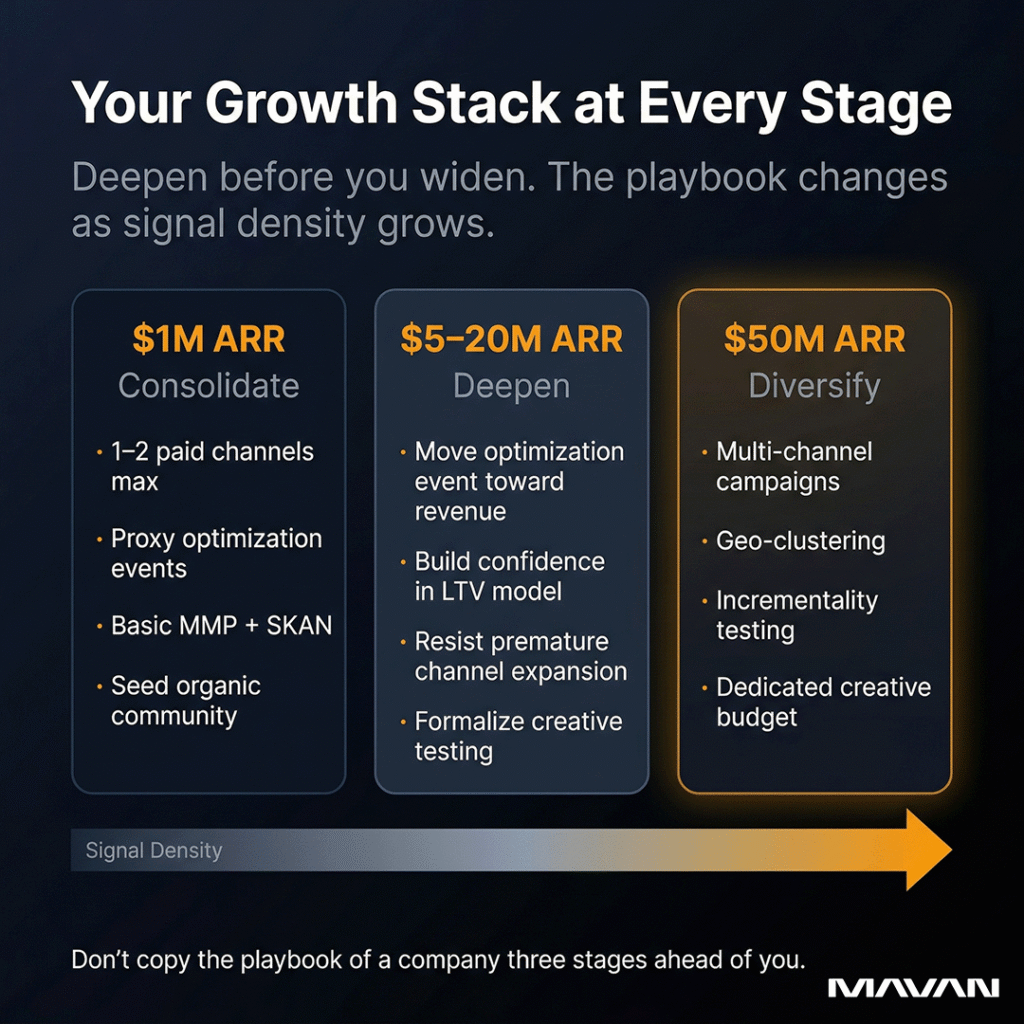

The minimum viable growth stack depends almost entirely on signal density — how much meaningful conversion data you can generate and feed back to ad platforms. A studio doing $1 million in annual recurring revenue needs a fundamentally different setup than one doing $50 million, not because the principles change, but because the volume of data available to work with changes everything about what’s tactically possible. The mistake most studios make is copying the playbook of a company three stages ahead of them and wondering why it doesn’t work.

McLellan is clear that signal density is the gating factor at every level. It determines which optimization events you can target, how many channels you can run simultaneously, and how granular your testing can get. Everything else flows from that constraint.

What Should a Studio at $1M ARR Focus On?

Consolidation. At this stage, your budget is limited, your install volume is modest, and every dollar of ad spend needs to pull double duty — generating revenue and generating learning. You likely cannot afford to run campaigns across Meta, Google, TikTok, AppLovin, and Apple Search Ads at the same time. If you try, you’ll spread your budget so thin that no single platform gets enough conversion events to optimize effectively. Every channel starves.

The move is to pick one platform — maybe two — and go deep. McLellan suggests Google or Meta as the most common starting points, depending on the title and the audience. Concentrate your budget there. Find an optimization event you can actually hit at volume — which might be tutorial completion or a proxy engagement event rather than a purchase, if your purchase volume is still low. Build confidence in your unit economics on that single platform before expanding.
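The arithmetic behind “every channel starves” is easy to sketch. The Python below is a minimal illustration, not a planning tool. It assumes the budget is split evenly, each channel runs a single campaign, cost per optimization event is the same everywhere, and a campaign needs roughly 50 optimization events per week to learn. That threshold is an assumption loosely based on Meta’s learning-phase guidance; check each platform’s current documentation. The $15K budget and per-event costs are hypothetical.

```python
from dataclasses import dataclass
from typing import List

WEEKS_PER_MONTH = 52 / 12  # average number of weeks in a month


@dataclass
class ChannelSplit:
    channels: int
    weekly_events_per_channel: float
    clears_threshold: bool


def split_budget(monthly_budget: float, cost_per_event: float,
                 weekly_threshold: float = 50.0, max_channels: int = 5) -> List[ChannelSplit]:
    """Weekly optimization events each channel gets if the budget is split evenly."""
    splits = []
    for n in range(1, max_channels + 1):
        weekly_spend_per_channel = monthly_budget / n / WEEKS_PER_MONTH
        events = weekly_spend_per_channel / cost_per_event
        splits.append(ChannelSplit(n, events, events >= weekly_threshold))
    return splits


def max_viable_channels(monthly_budget: float, cost_per_event: float,
                        weekly_threshold: float = 50.0) -> int:
    """Largest even split (checked up to five channels) where every channel clears the threshold."""
    viable = [s.channels
              for s in split_budget(monthly_budget, cost_per_event, weekly_threshold)
              if s.clears_threshold]
    return max(viable, default=0)


if __name__ == "__main__":
    budget = 15_000  # hypothetical monthly paid budget
    for label, cost in (("proxy event", 20.0), ("first purchase", 60.0)):
        print(f"{label} at ${cost:.0f}/event:")
        for s in split_budget(budget, cost):
            status = "OK" if s.clears_threshold else "starved"
            print(f"  {s.channels} channel(s): {s.weekly_events_per_channel:.0f} events/week ({status})")
```

Under these assumptions, a $60 purchase event supports only one channel, while a $20 proxy event supports up to three. That is the trade-off the paragraph describes: a shallower event buys signal density at the cost of distance from revenue.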

At $1M ARR, your growth stack should be lean by necessity:

- One to two paid channels with consolidated campaign structures to maximize signal density per campaign.

- A clear optimization event that balances proximity to revenue with enough volume to let the platform learn.

- Basic attribution — an MMP plus SKAN compliance, with realistic expectations about data granularity.

- A monetization model you trust enough to project an LTV curve, even if it’s early and imperfect.

- An organic foundation — App Store Optimization, a content presence, community seeds on Discord or Reddit. These won’t drive volume immediately, but they compound over time and reduce your total dependence on paid.

McLellan acknowledges the hard reality for studios with zero budget. You can put a game on the app store and make noise on social media. Some studios have built genuine organic followings by blogging their development process on Reddit or building communities around early access. Those stories are real — but they’re also rare. Most games launched with no paid budget and no distribution strategy don’t find an audience. Publishing partnerships can help, but they’re not a silver bullet either. The honest answer is that some level of paid investment is a near-requirement in mobile gaming, and the question is how to deploy even a small amount of it with maximum efficiency.

What Changes at $50M ARR?

Almost everything opens up. At this scale, you likely have enough signal density to support a multi-channel strategy. You have enough conversion events flowing across platforms to optimize toward deeper, higher-value events — first purchase, high-value IAP thresholds, subscription renewals. The platforms are working for you instead of guessing.

Now the strategic question shifts from “Can we scale?” to “How do we scale smartly?” McLellan describes several levers that become available at this stage:

- Channel diversification. You can — and should — be running campaigns across multiple platforms. Meta, Google, TikTok, CTV, programmatic networks, Apple Search Ads. Each one needs enough signal density behind it to function independently, but at $50M ARR, the math usually works.

- Geo-clustering. Instead of running broad global campaigns, you group high-performing countries together and bid more aggressively on those clusters. You identify where your best LTV users live and over-index your spend there. Lower-performing geos get grouped into separate campaigns with different expectations and different budgets.

- Dedicated creative testing budgets. At this scale, creative testing isn’t carved out of leftover spend. It’s a formal line item — a percentage of monthly budget with its own objectives, its own sprint cadence, and its own reporting.

- Competitive intelligence. You’re now big enough that competitor behavior directly affects your costs and performance. Keyword competitiveness, seasonal bid patterns, creative trends in your genre — these become operational inputs, not background reading.

- Advanced attribution and incrementality. You should be running incrementality tests to understand what your paid spend is actually adding versus what would have happened organically. Media mix modeling, holdout tests, geo-based lift studies — these tools become viable and necessary at scale to prevent overspending on channels that look good in last-touch dashboards but aren’t driving true incremental value.
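Of the tools in that last bullet, a randomized holdout is the easiest to illustrate. This Python sketch assumes users were randomly split into a treatment group that could see ads and a control group that could not, with conversions measured the same way in both; the group sizes and counts are hypothetical. A real test also needs a significance check and enough volume in the control group, which this sketch leaves out.

```python
from dataclasses import dataclass


@dataclass
class HoldoutTest:
    treatment_users: int
    treatment_conversions: int
    control_users: int  # randomly held out from ads
    control_conversions: int


def expected_baseline(t: HoldoutTest) -> float:
    """Conversions the treatment group would likely have produced without ads."""
    return t.control_conversions * t.treatment_users / t.control_users


def incremental_conversions(t: HoldoutTest) -> float:
    """Treatment conversions beyond what the control group's rate predicts."""
    return t.treatment_conversions - expected_baseline(t)


def relative_lift(t: HoldoutTest) -> float:
    """Treatment conversion rate divided by control conversion rate, minus one."""
    return t.treatment_conversions / expected_baseline(t) - 1


if __name__ == "__main__":
    test = HoldoutTest(treatment_users=90_000, treatment_conversions=1_800,
                       control_users=10_000, control_conversions=150)
    print(f"Incremental conversions: {incremental_conversions(test):.0f}")
    print(f"Relative lift: {relative_lift(test):.1%}")
```

In this hypothetical, a last-touch view could credit the campaign with up to all 1,800 treatment-group conversions, while the holdout attributes only about 450 of them to the ads. Sizing spend on the first number is how a channel gets overfunded.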

The gap between these two stages isn’t just budget. It’s operational maturity. A $1M studio needs discipline and focus. A $50M studio needs systems and coordination. Both need signal density as the foundation — but what you build on top of that foundation changes dramatically as the data allows it.

What About the Studios in Between?

The messy middle — somewhere between $5M and $20M ARR — is arguably the hardest stage. You have enough budget to feel like you should be doing more, but not enough signal density to do everything you want. McLellan’s advice here is to resist the temptation to expand channels prematurely. Instead, use the growing budget to move your optimization event deeper down the funnel. If you’ve been optimizing toward tutorial completion, now you might have enough purchase events to shift toward first purchase. That single change — moving the optimization event closer to revenue — can dramatically improve campaign efficiency without requiring any new channels at all.

The progression is sequential, not parallel. Deepen before you widen. Move your optimization event deeper down the funnel as density allows. Add channels only when your core platforms are performing reliably and you have budget headroom that won’t cannibalize what’s already working. Every expansion should be funded by the efficiency gains from the previous stage, not by hope.
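The “deepen before you widen” rule can be written as a small decision function. This Python sketch is illustrative only: the funnel event names, the weekly counts, and the 50-events-per-week threshold are hypothetical assumptions, and a real decision would also weigh cost per event and how well each event predicts revenue.

```python
from typing import Dict, List, Optional

# Hypothetical funnel, ordered from shallow (most volume) to deep (closest to revenue).
FUNNEL: List[str] = ["tutorial_complete", "day3_return", "first_purchase", "high_value_purchase"]


def deepest_viable_event(weekly_events: Dict[str, int], funnel: List[str],
                         threshold: int = 50) -> Optional[str]:
    """Return the deepest funnel event whose weekly volume per campaign clears the threshold."""
    choice = None
    for event in funnel:  # walk shallow to deep, remembering the last event that qualifies
        if weekly_events.get(event, 0) >= threshold:
            choice = event
    return choice


if __name__ == "__main__":
    # Hypothetical weekly counts for one consolidated campaign, before and after budget grows.
    early = {"tutorial_complete": 900, "day3_return": 300,
             "first_purchase": 35, "high_value_purchase": 4}
    later = {"tutorial_complete": 2_400, "day3_return": 800,
             "first_purchase": 95, "high_value_purchase": 12}
    print("Early stage, optimize for:", deepest_viable_event(early, FUNNEL))
    print("Later stage, optimize for:", deepest_viable_event(later, FUNNEL))
```

The same check would run on the core platform first; only once the deeper event is stable there does it make sense to ask whether a second channel could also clear the threshold.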

How Do You Balance Monetization Pressure With Retention Health?

You balance monetization pressure with retention health by making bold product bets inside controlled test groups — not by optimizing cautiously across your entire user base. The conventional wisdom says monetization must be handled delicately to protect user experience. McLellan pushes back on that framing. The products that move fast and perform well are the ones willing to take big swings. The ones making 2% UI tweaks to a payment screen are rarely the ones seeing transformational results.

That might sound reckless. It’s not — when it’s done with discipline.

Why “Optimize Cautiously” Can Actually Hold Studios Back

There’s a version of this advice that’s genuinely useful: don’t wreck your game’s economy for a short-term revenue spike. Don’t alienate your entire player base by shoving aggressive monetization into every interaction. That’s sound. Nobody is arguing for recklessness at scale.

But there’s another version of this advice — the one McLellan sees far more often — that kills growth. It’s the studio that spends months A/B testing minor color changes on a purchase button. The team that rearranges UI elements on a payment screen and calls it a monetization experiment. These tests might yield a 2% improvement, and at high enough volume, 2% matters. But they’re incremental by design. They will never produce the kind of step-change improvement that actually moves a business from struggling to sustainable.

McLellan’s point isn’t that small tests are useless. It’s that studios — especially startups and midsize teams with limited runway — can’t afford to spend their time and budget exclusively on safe bets. If you have eight figures in the bank and years of runway, sure, optimize slowly. The Take-Twos and EAs of the world can afford patience. Their goal, McLellan notes, is essentially to move as slowly as possible, because nothing is at risk. If a competitor or startup discovers something that works, they have the resources and depth to copy it later.

Startups don’t have that luxury. Limited runway means every experiment carries real opportunity cost. Spending three months proving that a button color change lifts conversions by 1.5% is three months you didn’t spend testing whether a completely overhauled offer screen could lift conversions by 40%. The bold bet has higher variance, yes. But for a company that needs to find product-market fit or hit a revenue milestone before the next funding round, variance is the point. You need to find the thing that works — not polish the thing that sort of works.

How Do You Take Big Swings Without Wrecking the Game?

Small test groups. That’s the entire answer, and it’s surprisingly underutilized. McLellan describes a model where major monetization experiments — overhauling a payment flow, restructuring an offer, testing an aggressive new live ops mechanic — get run on a small percentage of the user base. For large games, that might be 1%, further divided into sub-groups for different variants. For smaller games, the percentage might be higher, but the principle is the same: expose a limited audience to the experiment, measure the impact, and scale only what works.

Yes, some users in that test group will have a bad experience. That’s real, and McLellan doesn’t pretend otherwise. A player who encounters a jarring, poorly tuned monetization experiment might leave. That’s a cost. But it’s a cost measured in a small, controlled slice of your user base — not a catastrophe that touches everyone. And McLellan makes a point that challenges conventional retention orthodoxy: if the product eventually finds its moment and reaches real scale, many of those burned users will come back. They’ll hear about improvements from friends, see an ad, or notice the game trending. When they return, the bad test is long gone — killed and replaced by whatever version actually won.
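Carving out a small, stable test group is usually done with deterministic bucketing rather than a fresh coin flip each session. The Python sketch below shows one common approach under stated assumptions: user IDs are strings, the experiment name is hashed together with the ID so each experiment gets its own split, exposure is a fraction (0.01 for the 1% example above), and the exposed slice is divided roughly evenly among variants. The function names and variant labels are hypothetical, not part of any specific experimentation SDK.

```python
import hashlib
from typing import Dict, List

BUCKETS = 10_000  # 0.01% resolution for exposure


def bucket_for(user_id: str, experiment: str) -> int:
    """Map a user to a stable bucket in [0, BUCKETS) for a given experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest[:15], 16) % BUCKETS


def assign(user_id: str, experiment: str, exposure: float, variants: List[str]) -> str:
    """Return a variant for users inside the exposed slice, or "default" for everyone else."""
    if not 0.0 <= exposure <= 1.0:
        raise ValueError("exposure must be between 0 and 1")
    if not variants:
        raise ValueError("need at least one variant")
    bucket = bucket_for(user_id, experiment)
    if bucket >= round(exposure * BUCKETS):
        return "default"  # the rest of the player base keeps the current experience
    return variants[bucket % len(variants)]  # split the exposed slice across variants


if __name__ == "__main__":
    variants = ["control", "new_offer_screen", "bundle_offer"]  # hypothetical sub-groups
    counts: Dict[str, int] = {}
    for i in range(100_000):
        arm = assign(f"user-{i}", "payment_flow_overhaul", 0.01, variants)
        counts[arm] = counts.get(arm, 0) + 1
    print(counts)
```

Because the experiment name is part of the hash, a player in the exposed 1% for one experiment is not automatically in the exposed 1% for the next. That spreads the cost of a bad test across the player base instead of concentrating it on the same unlucky players.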

The Prestige Mechanic: A Case Study in Bold Thinking

McLellan offers a thought experiment that captures this philosophy perfectly. Consider the prestige mechanic — a system where a player who has maxed out their character, accumulated every upgrade, and reached the top voluntarily resets to level one. Everything they built disappears. In exchange, they get a permanent stat boost that compounds over multiple resets. Games like Arc Raiders run this cycle monthly. Marathon is building it into quarterly resets.

Imagine pitching that concept to a risk-averse executive. “We’re going to ask our most invested players to give up everything they’ve earned.” The instinct is to recoil. “They’ll leave at level five. They’ll quit the moment they realize what happened.” And some will. But the ones who stay — the ones who understand the long game and want that compounding advantage — become the most deeply retained, highest-LTV players in your game. They’re playing for years, not weeks. The lifetime value of a player who prestiges four or five times dwarfs the value of one who hits max level and drifts away.

You would never discover that without testing it. And you’d never test it if your operating philosophy was “optimize cautiously.”

What Does This Mean for Studios Under Board Pressure?

The tension is real. Boards want revenue growth now. Retention is the foundation that makes revenue growth sustainable. McLellan’s answer isn’t to pick one over the other — it’s to structure your experimentation so that you’re taking aggressive bets on monetization within controlled environments, while protecting the broader user experience for the majority. Run bold tests on small groups. Kill what doesn’t work quickly. Scale what does. And frame the narrative for your board honestly: “We’re running X experiments this quarter. Here’s what we’re testing, here’s the expected risk, and here’s the upside if any of them hit.”

That’s not a story about recklessness. That’s a story about disciplined aggression — which is exactly what boards, especially venture-backed boards operating in an industry that lost over 25,000 jobs in two years, actually want to hear.

Frequently Asked Questions About Mobile Gaming Growth

What is signal density in mobile gaming?

Signal density is the volume of meaningful conversion events — purchases, subscriptions, tutorial completions — that your game sends back to ad platforms like Meta, Google, or TikTok. These platforms need a minimum threshold of events per campaign to optimize effectively. If you’re below that threshold, the algorithm can’t learn what a valuable user looks like, and your campaigns stall. Signal density determines which optimization events you can target, how many channels you can run, and how granular your testing can get. It’s the single most important factor in whether paid UA can actually scale.

How do you fix broken attribution in a post-SKAN world?

You don’t fix it — you work within it. No attribution system in mobile gaming today is fully accurate. SKAN is delayed and aggregated. MMPs use probabilistic models that are inherently imprecise. The studios scaling profitably in 2026 are using a hybrid approach: SKAN for compliance and campaign-level measurement, an MMP for Android and cohort reporting, and creative-level attribution tools where available. The key is getting internal alignment on which assumptions your team will treat as truth — and building decision-making discipline around imperfect but directionally useful data rather than waiting for a perfect system that doesn’t exist.

What’s the biggest mistake studios make when reporting growth to their board?

Either being too vague or too granular. The too-vague version is showing up with “we’ll spend X and hope to get Y installs” — which isn’t a plan and doesn’t give the board anything to evaluate. The too-granular version is walking the board through ad network performance breakdowns and SKAN schema nuances that obscure the strategic picture. Boards want to see three things: how budget is deployed and performing at a category level, what experiments you’re running to find new growth levers, and what you’re building organically that reduces dependence on paid acquisition over time. That three-layer narrative — core engine, experimentation portfolio, organic foundation — consistently earns trust.

How does a cross-functional growth partner differ from a traditional UA agency?

A traditional UA agency manages your paid acquisition campaigns. They optimize spend, manage channels, report on CPI and ROAS, and ask for more budget. A cross-functional growth partner operates across UA, product, live ops, data, analytics, and CRM as one coordinated unit. The difference matters because growth problems are rarely contained to a single function. Bad attribution data affects campaign efficiency. Misaligned product KPIs create friction with UA. Live ops that isn’t coordinated with CRM leaves retention value on the table. A growth partner diagnoses and addresses the system — not just one channel inside it. At MAVAN, we start with a 360-degree audit that looks at every function, deliver a prioritized 90-day roadmap, and then either execute alongside the studio’s internal team or hand off the plan for them to run independently.

What should a mobile gaming studio do first if they have limited budget?

Consolidate. Pick one or two paid channels — Meta and Google are the most common starting points — and concentrate your entire budget there. Choose an optimization event you can hit at volume, even if it’s an early-funnel proxy like tutorial completion rather than a purchase. Build your LTV model, even if it’s imperfect and early. Invest in App Store Optimization and start seeding an organic community on Reddit, Discord, or social platforms. The goal isn’t to do everything at once. It’s to build enough signal density on a single platform to prove your unit economics and create a foundation you can expand from as budget grows.

Does every mobile game need live ops to survive in 2026?

Effectively, yes. Live ops — the ongoing cycle of events, content updates, offers, and engagement mechanics after launch — has shifted from a competitive advantage to a baseline requirement. Adjust reports that 84% of mobile IAP revenue comes from games running live ops, and 95% of studios now build or maintain at least one live-service title. The depth and sophistication of your live ops will vary by genre, team size, and budget. But launching a game without any plan for post-launch engagement is essentially planning for your retention curve to flatline within weeks.

So How Do Mobile Gaming Studios Actually Scale in 2026?

Mobile gaming studios don’t scale in 2026 by acquiring the most users. They scale by building growth systems that turn signal density into smarter campaigns, live ops into daily engagement engines, and cross-functional alignment into a compounding advantage. The install-volume era is over. The studios winning now are the ones optimizing toward revenue, making bold bets inside controlled test groups, consolidating channels to maximize data quality, and reporting to their boards with a strategy that goes deeper than “we spent X and got Y.” Whether you’re working with a $15K monthly budget or an eight-figure war chest, the fundamentals are the same: meet the algorithm where your data actually is, get your teams talking to each other, and build a growth engine that compounds — not just one that spends louder.

Where Do You Start If Your Game Studio’s Growth Strategy Isn’t Working?

If you read this and recognize your studio in the problems described — silos between UA and product, attribution data you don’t trust, live ops that runs on autopilot, board meetings where the growth story feels thin — then start with one step this week: pick the single biggest friction point in your growth operation and write down what fixing it would require. Not a slide deck. Not a strategy doc. Just the honest version: what’s broken, who needs to be in the room, and what you’d need to see in 90 days to know it’s working.

If you want a team that’s done that exercise across dozens of gaming studios — and can do it alongside yours in weeks, not months — reach out to us about auditing your company and building a 90-day growth roadmap. We’ll run a full 360-degree audit of your growth operation, hand you a prioritized 90-day roadmap you can execute with or without us, and give you six months of free access to our analytics platform, Nexus, so you can track whether the roadmap is actually working. No long-term commitment required. Just clarity on where the levers are — and which ones to pull first.

Casey Rock is Content Director at MAVAN, where he helps turn complex ideas into clear, strategic content that drives growth. With over 15 years of experience across content strategy, SEO, media, and digital marketing, Casey focuses on building content systems that connect audience insight, brand storytelling, and measurable business outcomes.

Book a complimentary consultation with one of our experts

to learn how MAVAN can help your business grow.

Want more growth insights?

Thank you! form is submitted

[hubspot type=”form” portal=”20951211″ id=”9c538ed2-fb12-45f1-a573-ad7953c058cc”]

Related Content

-

How Can SaaS and Consumer Products Improve Retention?

SaaS and consumer brands need to take a page out of gaming’s retention playbook. Games keep players for years by treating launch as the starting line, building an engagement loop into the product’s core, and continuously deploying content and rewards. Non-gaming products can apply the same live-operations approach to turn retention into their cheapest source of growth.

-

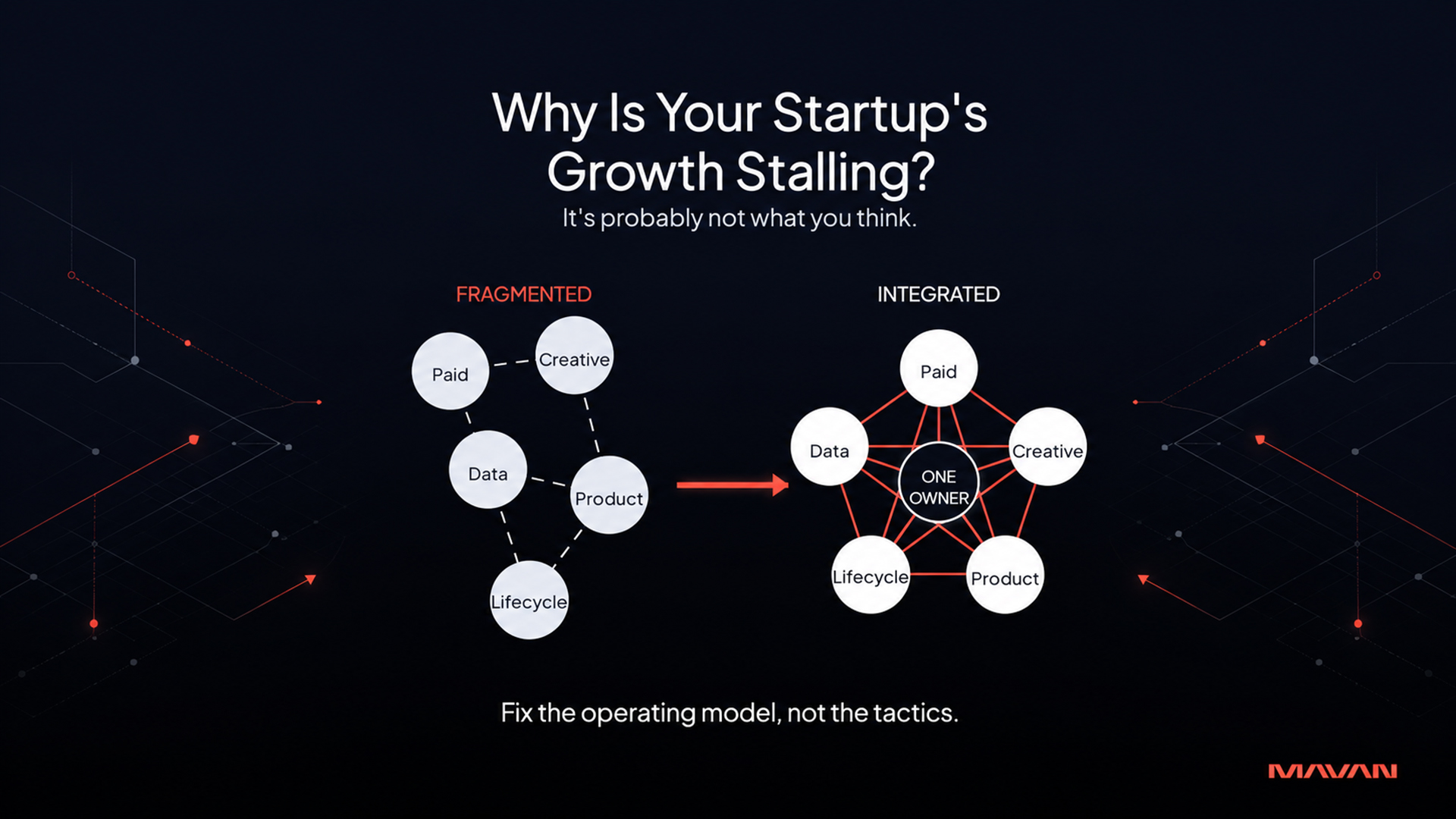

Why Is Your Startup’s Growth Stalling? Here’s The Fix.

Growth-stage startups don’t usually stall because the team isn’t working hard enough. They stall because hard work gets distributed across too many disconnected efforts, and nobody owns the system that ties them together. The fix isn’t more ad spend or more specialists — it’s integrating what you already have.

-

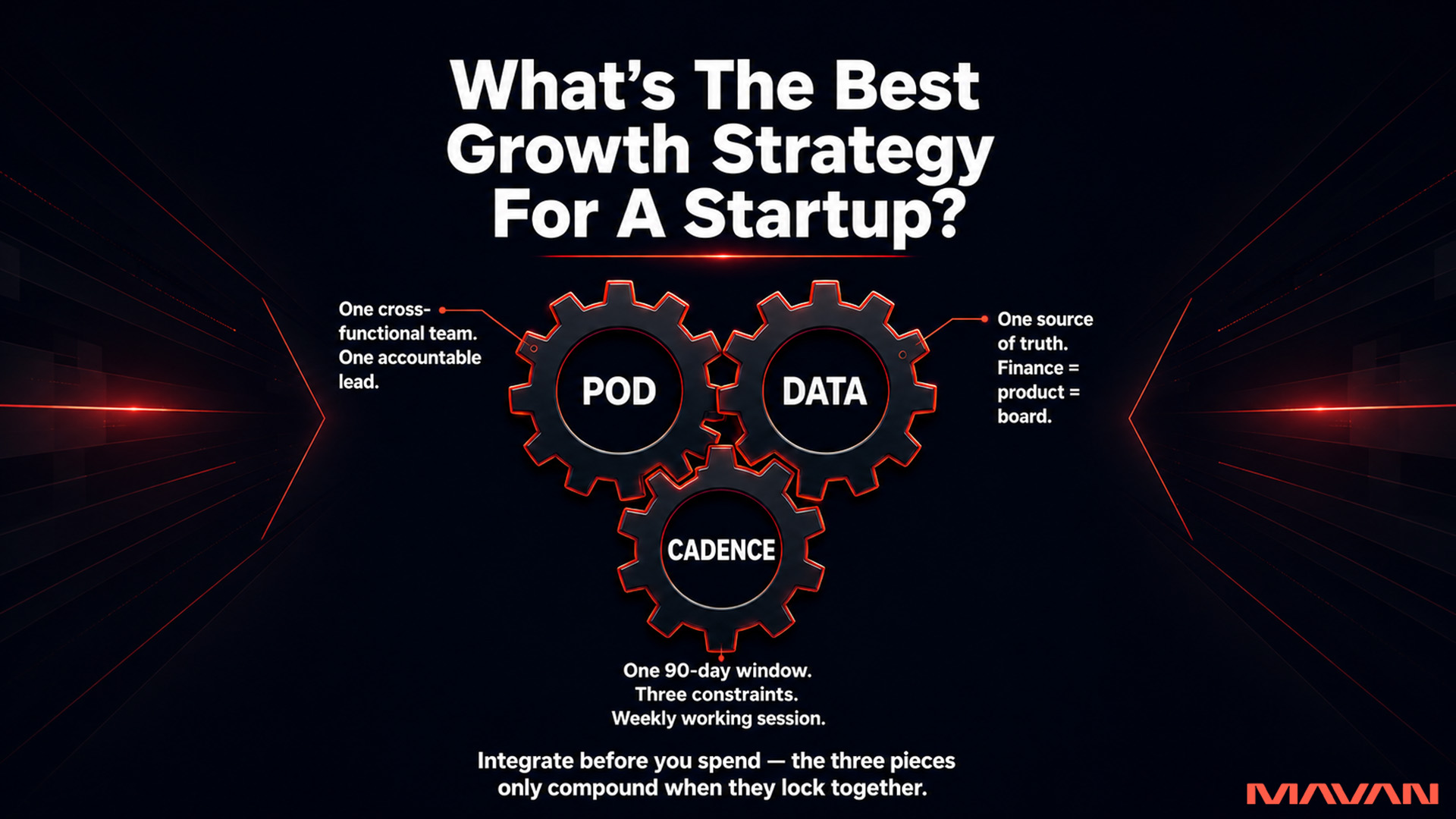

What’s The Best Growth Strategy For A Startup In 2026?

The best growth strategy for a venture-backed startup in 2026 is to integrate before you spend. Fragmented teams, tools, and data silently inflate CAC even when every individual channel looks healthy. Fix the operating model — one accountable pod, one source of truth, one 90-day cadence — and the wins start compounding instead of canceling each other out. Channels do not break unit economics. Lack of measurement discipline does.