TLDR — 10 Growth Takeaways for Acquisition Architecture

- Rising CAC is usually a system problem, not just a channel problem.

- Diagnose traffic, measurement, conversion, and signal quality before changing spend.

- Scalable growth comes from architecture, not isolated campaign tweaks.

- Get minimum viable measurement in place before pushing budget.

- Don’t over-segment when your budget is too small to support clean learning.

- Match your acquisition model to your actual buying journey.

- Fix activation, retention, or monetization leaks before scaling acquisition.

- Add channels based on buyer fit and measurement readiness, not hype.

- Treat creative as an ongoing testing system, not a one-time asset.

- Want to find your real growth constraint fast? Reach out to us!

When Rising CAC Isn’t Just a Paid Media Problem

It’s not uncommon for people to treat acquisition like a media problem. You might swap channels, raise bids, or cut creative. And since you’re taking action, it can feel like you’re accomplishing something.

But as Sam McLellan, MAVAN’s VP of Growth, notes, when a team says “we need growth help,” it can mean many things. It can point to product issues. It can point to execution issues. It can point to attribution issues. It can point to expansion issues.

Sam says the more common problem now is attribution. That gets sharper once last-touch stops matching how people actually buy.

Regardless, if the product is not converting, media will not save it. If tracking is thin, your insight is mostly guesswork. If signal density is weak, even precise-looking segmentation teaches you nothing. And if you expand channels before the basics work, you are burning runway.

When acquisition breaks, the smartest first move is not to spend more. It is to find the real break.

This article will show how to architect scalable acquisition — measurement, audience logic, creative, landing experience, nurture, and product readiness. And, not to worry, we’ll also show how to diagnose weak links before they burn a hole in your investors’ pockets.

Why Acquisition Problems Usually Aren’t Just Paid Media Problems

When a team says, “we need acquisition help,” we should hear that as a symptom, not a diagnosis. Sam McLellan, MAVAN’s VP of Growth, puts it plainly: high costs can come from product issues, execution issues, attribution issues, or expansion issues. Sometimes the team simply does not know which of those is failing.

More often now, he says, the real problem is attribution — especially once last-touch no longer matches how people actually buy.

That is an uncomfortable truth, because it removes the fantasy of the clean fix. We cannot bid our way out of a broken onboarding path or creative-test our way out of missing conversion events. And scaling around a product that is not ready to convert or retain people will just bring everything crashing down.

Sam is blunt on that point too: sometimes the hard conversation is telling a founder that their baby (the product) isn’t ready to go outside yet, because the revenue, engagement, and retention signals are not there.

This is also why so many growth teams feel stuck even when they are working hard. They’re not lazy, but they are fragmented. When leaders are trying to move from founder-led heroics to a repeatable growth engine, the real challenge is not effort — it is building a system clear enough, coordinated enough, and trustworthy enough to scale without breaking.

What these teams need is one accountable team that can identify the real constraint, run the right experiments, ship the fixes, and build enough shared understanding to scale intelligently.

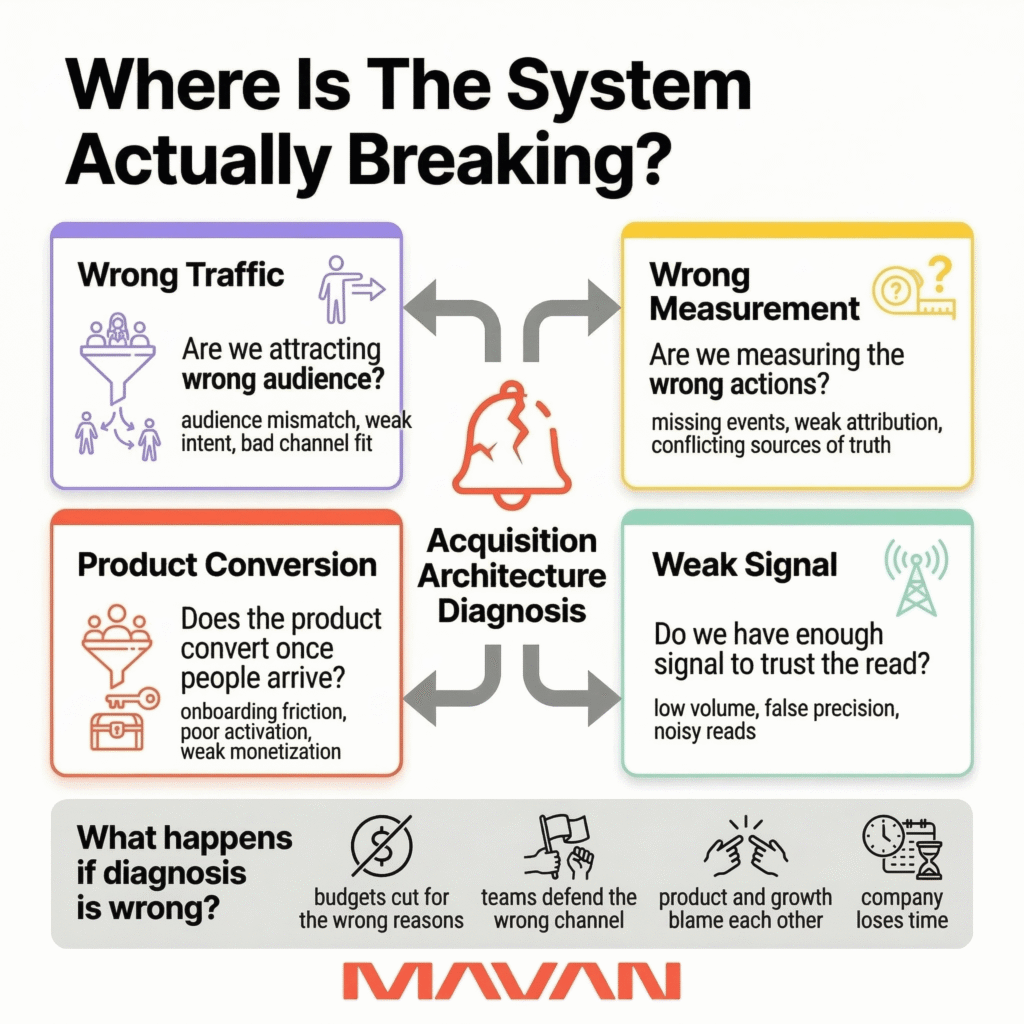

That is the first discipline of good acquisition architecture: do not ask, “Which channel should we push harder?” Ask, “Where is the system actually breaking?”

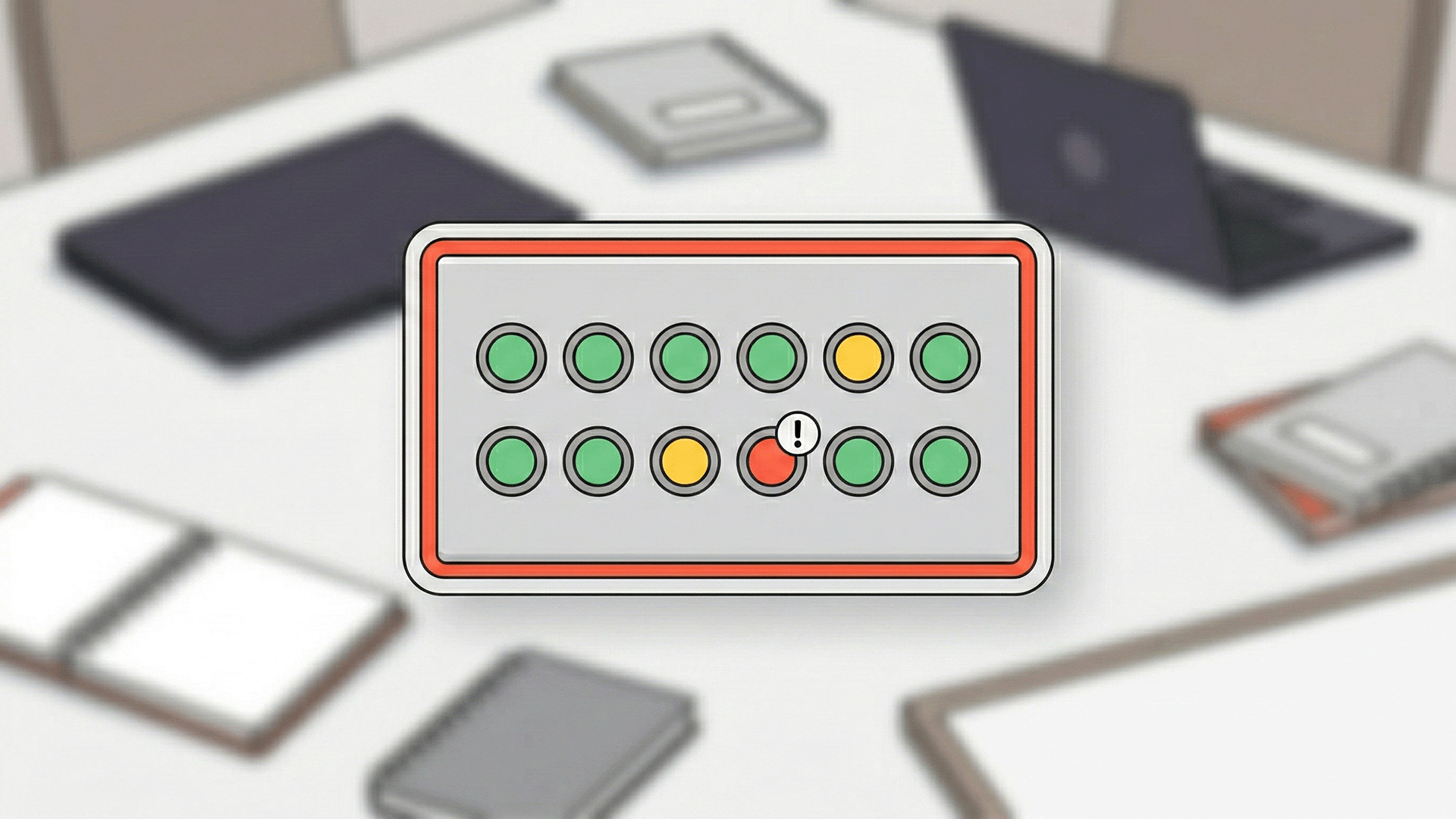

We recommend starting with four questions:

- Are we attracting the wrong traffic?

- Are we measuring the wrong actions?

- Does the product convert once people arrive?

- Do we have enough signal to trust the read?

That last question matters more than many teams realize. Sam explained that smaller budgets do not buy precision. They buy constraint. If signal density is low, teams often need broader groupings and a single source of truth they can actually trust, instead of pretending thin data is insight.

If we get this diagnosis wrong, every downstream decision gets noisier. Budgets get cut for the wrong reasons. Teams defend channels that were never the issue. Product and growth start blaming each other. And the company loses time it does not have.

So before we talk about media plans, bidding, or expansion, we need to define the system itself. That is what acquisition architecture is really for.

What Acquisition Architecture Actually Is — And Why Most Teams Skip It

When we say acquisition architecture, we do not mean a channel mix. We mean the full system behind growth. That system decides who we target, what promise we make, where we send people, what we measure, and what happens after the click.

Sam McLellan, MAVAN’s VP of Growth, described the working checklist in plain terms: objective, audience definition, conversion events, creative promise, onsite experience, KPIs, decision windows, and the gates that tell us when to scale or stop.

He also made a harder point. Most startups do not need perfection first. They need the bare minimums that let them learn honestly.

That distinction matters because many teams still treat paid acquisition like a series of isolated bets. They hire one media buyer, one creative shop, one lifecycle tool, and hope the pieces cooperate.

But a company should be built around a connected model: acquisition, data and experimentation, product, creative, and lifecycle working in sync, with line of sight across the whole system. The point is not to look comprehensive on paper. The point is to stop solving one part of the funnel while another part breaks without notice.

In practice, strong acquisition architecture has six working parts:

- A clear objective — not just more traffic, but a real business outcome.

- Audience logic — who we want, who we do not, and why.

- Conversion design — the event chain that shows real intent.

- Creative-to-landing alignment — the ad promise must match the page.

- Measurement rules — one source of truth, with enough signal to trust it.

- Decision windows — agreed points to hold, scale, cut, or fix.

Teams often launch without agreeing on what success looks like, or when to judge it. Then the channel gets blamed for confusion the system created.

Sam notes that, instead, we should build enough attribution to know where the money is going. We should keep the setup broad enough to get signal. Then we should add complexity only when scale earns it.

Good acquisition architecture does not remove uncertainty — it makes uncertainty visible, manageable, and useful.

That is why architecture beats tactics. Tactics can buy clicks. Architecture tells us whether those clicks can become revenue. It also tells us which team owns the next fix. If the ad is weak, the landing page isn’t connecting with our audience, or the product just isn’t ready, we know. And if the nurture path is missing, we know that too. That is the standard we should hold ourselves to before we call any channel broken.

Start With Measurement, Not Spend

When pressure rises, teams often reach for budget first. We understand why. Spend feels like action. Measurement feels slower. But if we cannot trust what we are seeing, more spend only buys more confusion.

Sam McLellan, MAVAN’s VP of Growth, gave us a more honest starting point. From an MVP standpoint, he said, teams need the bare minimums first. That starts with attribution. In his words, you need to know where the money is going.

He also made an important distinction: If you are only using one or two major channels, like Google or Meta, you can often start with their own tools. You do not need to rush into an expensive MMP on day one. That comes later, when channel count, spend, and complexity justify it.

That is the right level of rigor for most venture-backed teams. We do not need perfect attribution before launch. We do need enough truth to make sane decisions.

This matters even more now because the measurement environment is still shifting. Old measurement assumptions are not stable enough to build a growth system on autopilot.

So what does enough truth look like?

We recommend a minimum viable measurement stack with four parts:

- A defined conversion path — from click to signup, demo, trial, install, or purchase.

- Platform-native tracking — pixels, SDKs, and server-side events where available.

- One source of truth — even if basic, it must be documented and shared.

- A review cadence — someone must check signal quality before scale decisions.

Sam warns that smaller budgets do not buy enough signal for precision. If you do not have the volume, you cannot carve campaigns into endless slices and expect clean reads. You need enough density to trust the pattern. That often means broader geos, broader groupings, and simpler setups until scale earns more granularity.

We see this same pattern in MAVAN’s own audit work. In one B2B SaaS engagement, attribution was limited to last-touch and original source. Many key touchpoints were untracked. CAC had climbed to a reported $60K to $100K. The recommended fix was not to buy more. It was better instrumentation, fuller touchpoint tracking, funnel automation, and a purpose-built multi-touch setup that could support real budget decisions.

Similar recommendations helped cut CAC by 3x while scaling paid acquisition 5x at Titan.

That is the practical lesson. Measurement should answer the next business question, not win a software beauty contest.

Here is the simplest way to think about it:

- If you run one or two core channels, start with native tracking.

- If you cannot see key conversion events, fix that before scaling.

- If sales and marketing disagree on what “worked,” define one source of truth.

- If you are expanding channels, geos, or buying motions, prepare for stronger attribution.

We should earn complexity, not prematurely add it.

That mindset protects both speed and runway. It also creates better conversations across teams. Finance gets cleaner inputs. Product gets clearer signal on activation quality. Growth gets fewer arguments based on vibes.

Most of all, measurement keeps us honest. It tells us whether we have a media problem, a funnel problem, or a product problem. Without that, every scaling decision is partly superstition.

Match Your Acquisition Strategy to the Buying Motion

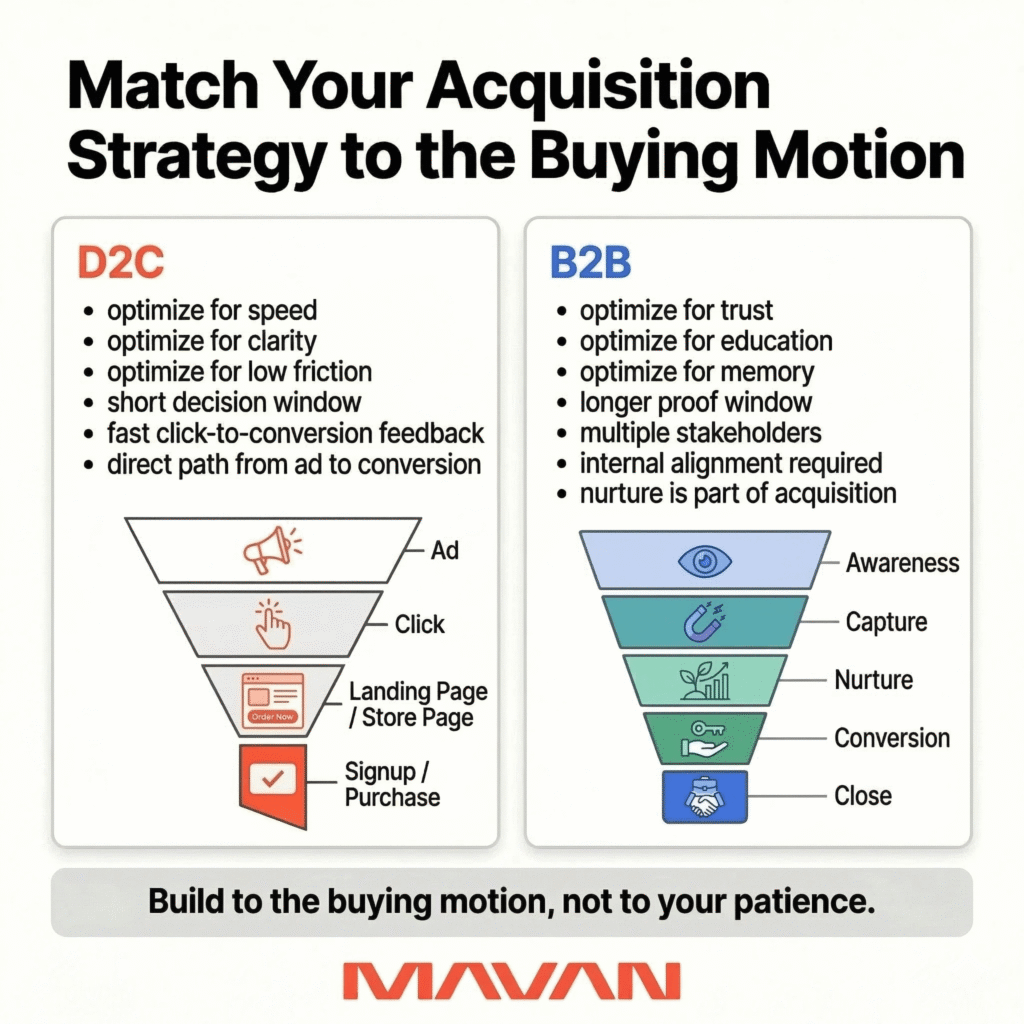

We should say this plainly: B2B and D2C are not the same acquisition problem.

Sam McLellan, MAVAN’s VP of Growth, notes that they are “two very different beasts.” In D2C, the window is short. We show the ad, the person clicks, and we learn fast. In many cases, if they do not act within days, or even hours, we may have missed the moment. In B2B, that logic breaks. The buyer may have multiple stakeholders, budget sign-off, legal review, and internal politics before anything closes.

That difference should change the whole architecture.

If we treat B2B like direct response, we usually create two problems. First, we demand speed from a buying process that cannot move that fast. Second, we judge channels too early, then cut them before they can do their job. That is not discipline. That is impatience dressed up as rigor.

Gartner reported in June 2025 that 61% of B2B buyers prefer an overall rep-free buying experience, and 73% actively avoid suppliers who send irrelevant outreach. That means many buyers want room to research on their own before they ever want a seller in the room.

That lines up with Sam’s funnel. For him, B2B acquisition starts with awareness. Then it moves into a value exchange, like a newsletter signup or an industry report download. Then it shifts into nurture — useful content, reminders, leadership content, and retargeting that keeps the brand front of mind. Later, when the timing is right, the buyer may search the company by name, click a branded result, and convert into an MQL for sales to close.

So we should build to the motion, not to our impatience.

Here is the practical split:

- If the motion is D2C or self-serve, optimize for speed, clarity, and low friction.

- If the motion is B2B or multi-stakeholder, optimize for trust, education, and memory.

- If the deal size is large, expect higher acquisition costs and longer proof windows.

- If the sale needs internal alignment, content and nurture are part of acquisition, not “extra.”

In gaming or other direct-response setups, acquisition costs are often in the low double digits of dollars or less. In B2B, that math is different. A multi-million-dollar contract will not have a $12 acquisition cost. The work is slower, more expensive, and more dependent on nurture over time.

This is where many teams create false pressure for themselves. They launch B2B campaigns, send traffic to a demo page, and expect near-term proof. Then they conclude the channel failed. But often the real issue is architectural. There was no value exchange. No nurture path. No thought leadership. No branded search coverage. No plan for what happens between first touch and sales handoff.

We should not ask paid media to carry the whole story alone.

For most venture-backed SaaS teams, a better B2B architecture looks like this:

- Awareness: paid social, search, or programmatic introduces the problem and the brand.

- Capture: a useful asset, webinar, report, or newsletter earns the next step.

- Nurture: email, retargeting, and leadership content build trust over time.

- Conversion: branded search, high-intent return visits, and sales-ready actions create the handoff.

- Close: sales takes an informed, warmed-up lead instead of a cold click.

That does not mean every company needs a long, elaborate machine. It means the machine must fit the buying reality. Some products can close quickly. Some cannot. Some offers need a free trial. Some need proof, education, and internal consensus first.

The job is not to force one funnel on every business. The job is to build the shortest honest path to revenue.

Do Not Buy Precision Your Budget Cannot Support

We should be careful not to confuse granularity with rigor. They are not the same thing. A tight audience, a dozen geos, and five campaign splits can look disciplined. In reality, they can starve the system of signal and leave us reading noise as truth.

Sam McLellan, MAVAN’s VP of Growth, explains this clearly. Bigger budgets buy more freedom to get granular. Smaller budgets do not. When signal density is thin, he says, we often need broader groupings we can actually trust. That can mean targeting a whole country instead of a few cities. It can mean grouping markets together until volume supports cleaner reads. Only then does finer segmentation start to help.

Google Ads Help makes a similar point from the platform side. When there is little conversion data, Smart Bidding leans on broader query-level signals first. It gets more granular as more data comes in. In other words, the machine itself does not assume instant precision. Neither should we.

We see this logic inside MAVAN’s own audit work too. In one B2B SaaS growth plan, MAVAN recommended testing broad audiences against narrower 6sense targeting, while also using Advantage+ audience expansion. This provided a way to learn faster with the budget and signal available.

Here is the practical rule:

- If volume is low, simplify and consolidate the structure.

- If conversion data is thin, widen the grouping.

- If results swing wildly, question the sample first.

- If a segment looks ideal, ask whether it has enough data.

- As scale comes, add more granularity step by step.

We should not optimize for the feeling of control. We should optimize for signal we can trust.

That approach is less glamorous. It is also how better decisions get made. False precision burns time, money, and confidence. Honest grouping gives us a system that can learn.
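To make the rule concrete, here is a minimal Python sketch of a signal-density check. The function names, the example budget and cost per conversion, and the floor of 30 conversions per segment are illustrative assumptions, not figures from Sam or from any ad platform. Substitute whatever minimum your team actually trusts.

```python
def conversions_per_segment(budget, cost_per_conversion, segments):
    """Expected conversions per segment if budget is split evenly."""
    if cost_per_conversion <= 0 or segments <= 0:
        raise ValueError("cost_per_conversion and segments must be positive")
    return budget / cost_per_conversion / segments


def max_supported_segments(budget, cost_per_conversion, min_conversions=30):
    """Largest even split that still gives each segment min_conversions.

    min_conversions=30 is a hypothetical floor, not a platform rule.
    Always returns at least 1 (a single consolidated structure).
    """
    total = budget / cost_per_conversion
    return max(1, int(total // min_conversions))


# Hypothetical month: $10,000 budget at $50 per conversion.
budget, cpa = 10_000, 50
for segments in (1, 5, 20):
    per_segment = conversions_per_segment(budget, cpa, segments)
    print(f"{segments:>2} segments -> {per_segment:.0f} conversions each")

print("Segments this budget can support:", max_supported_segments(budget, cpa))
```

With these made-up inputs, a 20-way split leaves about 10 conversions per segment, which is the kind of thin read this section warns about. The same budget supports roughly six segments at the assumed floor.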

Expand Channels Because the Audience Fits

Channel expansion should not start with novelty. It should start with fit.

Sam McLellan, MAVAN’s VP of Growth, notes that he does not pick new channels because they sound exciting. He pairs channels to the product and the audience. In his example, CTV worked for a trivia product because the audience already loved game-show environments. The leap from watching trivia on TV to playing trivia on a phone was small and intuitive. That is why the channel worked. Not because CTV was fashionable, but because the audience-product fit was real.

He made the same point about Reddit. Historically, Reddit was too manual for many performance teams. Now, he sees it as more viable because the platform has matured toward performance marketing, especially when the product serves a specific, high-intent audience that actually gathers there.

For some B2B products, that concentration matters more than brand-safety theater. (We know some people have concerns about NSFW content on Reddit.) The point is not that Reddit is always good. The point is that channel choice should follow where the decision-makers already spend attention.

The broader market supports that shift. IAB reported in 2025 that CTV rebounded with 16% year-over-year growth in 2024, helped by programmatic and self-serve tools, while digital video was projected to capture nearly 60% of all TV/video ad spend in 2025. Reddit’s own Audience Manager documents that advertisers can target by keywords, communities, and interest groups, then expand reach with automated targeting beyond those initial choices.

But none of that means we should spray budget across every shiny option. Sam was clear on this too. Early on, teams should not spread $10,000 across 20 channels. They should pick one or two core channels, get signal, and expand only when the data, spend level, and measurement setup can support it.

We recommend a simple three-part test before any expansion:

- Audience fit — do the right buyers actually spend time there?

- Measurement readiness — can we track quality, not just clicks?

- Creative fit — can we make work that suits the channel itself?

Creative fit is critical. A channel can be right in theory and still fail in practice because the creative was lazy, mismatched, or built for another format. Sam said as much about CTV. We cannot treat it like a mobile placement and expect it to work.

We also see this discipline in MAVAN’s case work. In one acquisition engagement, MAVAN first improved efficiency through value-based optimization and tROAS guardrails. Then the team expanded into Bing and Tier 2 through Tier 4 markets against performance benchmarks, rather than expanding blindly. So, to sum up: Stabilize first. Expand second.

Creative Is Part of the Acquisition Architecture

We should stop treating creative like garnish.

Creative is not the pretty layer on top of media. It is part of the system: it carries the product’s promise, informs the click, and sets expectations. It even affects whether the product feels coherent once someone arrives.

That is why Sam McLellan, MAVAN’s VP of Growth, does not talk about creative as a handoff. He talks about it as a loop: ideation, concepts, review, launch, a second phase tied to product and revenue, then documented learnings shared back with the team. He also emphasized that the process has to stay collaborative. Growth cannot just be handed a file and told to hope.

Google’s 2025 AI Essentials guide makes a similar case from the platform side. It says creative now matters more than ever, and cites NCS research that creative drives 49% of total sales impact in advertising. Google’s advice is simple: give the system better inputs, more varied assets, and a steady flow of learnings back into production.

That should change how we think about performance marketing. If creative is weak, vague, or disconnected from the landing page, the media team is forced to optimize around a bad promise. That is not efficient. It is expensive denial.

The creative loop we trust

We recommend a six-part loop:

- Ideate around real buyer tension — not internal preferences.

- Build a few distinct concepts — not tiny edits of one idea.

- Review cross-functionally — growth, creative, and product together.

- Launch in a test lane — isolated enough to read honestly.

- Tie winners to product and revenue — not clicks alone.

- Document what worked — hooks, offers, formats, audiences, and why.

Sam is especially firm on the test lane. He prefers a dedicated creative-testing budget and a separate testing structure, because major platforms will often favor assets with historical data. If we throw fresh creative into a live campaign without protection, the algorithm may never give it a fair read.

If we do not protect the learning, we do not really have a testing program.

That lesson shows up in MAVAN’s case work too. In one gaming audit, MAVAN found creative refreshes were happening only two to three times a year. That pace was driving weaker engagement and running at less than 5% of elite creative-testing velocity. The recommendation was not simply to make more ads, but to launch weekly creative sprints, produce four to six new variants per week, build platform-native content, and diversify beyond a format mix that had become too narrow.

In a B2B SaaS audit, MAVAN made the same argument in a different language. We recommended moving beyond static formats into video, carousels, competitive positioning, and educational short-form content that could work both organically and as paid creative. Different market, same principle: creative variety is not indulgence. It is operating discipline.

We’ve used these and similar strategies to drive a 32% increase in conversions for KidStrong, whose CMO also said we cut customer acquisition costs by 60%. At Titan, they were part of a broader acquisition rebuild that reduced CAC by more than 3x while scaling paid acquisition 5x.

What creative fatigue actually is

Teams often mislabel fatigue. Sometimes the ad is passé or the audience is saturated. Maybe the reference is fading from pop culture. Or sometimes the offer no longer matches the moment.

Sam broke this down in practical terms. If the same person sees an ad 10 times in a week, that is probably too much. If they see it seven times over three months, that is a different story. Context matters. Frequency alone is not the whole diagnosis. He also told us he keeps a double-digit share of budget reserved for creative testing at all times, even in startup settings, so teams are not scrambling when a top performer fades.

That is the healthier model. We should not panic when a top asset slows down. We should rotate in the next promising concept, let the old winner rest, and bring it back later if the context changes. Sam described this as building a portfolio you can cycle in and out, instead of betting the whole quarter on one hero ad.

So the actionable rule is simple:

- Keep a standing test budget.

- Separate testing from scale campaigns.

- Judge creative on downstream quality, not CTR alone.

- Refresh before panic sets in.

- Reuse winners after a rest period.

- Share learnings across media, product, and lifecycle.

That is what systems-aware creative looks like. It is specific, collaborative, and honest about what the ad can and cannot do on its own.

Payback Math Is a Cross-Functional Decision — Not a Media-Team Fantasy

Payback math is where acquisition stops being abstract.

Up to this point, teams can hide inside dashboards. They can argue about CTR, CPM, and volume. But payback forces a harder question: when should this spend come back as real money, and who is accountable if it does not?

Sam McLellan, MAVAN’s VP of Growth, states that payback needs to be “a cooperative thing.” The window cannot be set by growth alone. Product, finance, and the growth team all have to agree on what is realistic. Otherwise, one team picks an easy fantasy and the rest of the company pays for it later.

Payback can shift too, depending on context and circumstances. In mobile gaming, Sam recalls that six months was often the working window. However, as games became more live-service driven, some teams started using longer horizons because retention and monetization supported it. The window changed because the business changed.

This makes sense because the payback period should follow the economics of the product.

This is also where internal tension shows up fast, Sam says. Growth usually wants a longer window, because longer payback lets the team bid more aggressively and acquire more customers. Product may want a shorter window, because product ends up carrying more of the burden to turn those acquired users into actual revenue.

That is how blame starts. Growth says, “we brought the right people.” Product says, “you brought us the wrong people.” Both may have part of the truth.

If payback assumptions are not shared, every team gets to invent its own story.

That is why we treat payback as governance, not just finance math.

Sam makes another important point that leaders should pay attention to: LTV is still theoretical until the agreed time window actually passes. You can model it. You can estimate it. You can build proxy signals around it. But until the revenue arrives, it is still a forecast. That means teams should stop speaking about projected LTV as though it were cash already in the bank.

That sounds obvious, yet it gets ignored all the time in practice.

We see teams say, “The model says this cohort is profitable.” Then nobody checks back in six months later. Or twelve. Or eighteen. Sam calls this out directly. He sees teams set a CAC, project an LTV by some future date, call it technically profitable, and move on. That is not enough. You have to come back and see if reality matched the curve.

There is another reason break-even math fails. Break-even is not profit. Sam lists the hidden drag plainly: platform fees, payment processing, personnel, tools, and all the other costs a real company carries. If the model only gets you back to zero on paper, it is probably losing money in practice.

For venture-backed SaaS teams, outside benchmarks can help here, but only if we use them humbly. Bessemer’s cloud benchmark guidance has framed CAC payback this way: 12 to 18 months is “good,” 6 to 12 months is “better,” and 0 to 6 months is “best.”

That can be useful context, but it’s not a law of nature. The right window still depends on product type, gross margin, retention quality, and how much expansion revenue is real versus hopeful.
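As a rough illustration of why break-even on ad spend is not the same as payback, the sketch below computes a payback period twice: once naively, and once after gross margin and a per-customer overhead are subtracted. All of the numbers are hypothetical. The way boundary values are assigned to tiers is also an assumption, since the quoted ranges share their endpoints.

```python
def payback_months(cac, monthly_revenue, gross_margin, monthly_overhead=0.0):
    """Months until cumulative contribution covers CAC.

    Contribution = monthly_revenue * gross_margin - monthly_overhead,
    all per customer. Returns None if contribution is zero or negative,
    meaning the spend never pays back.
    """
    contribution = monthly_revenue * gross_margin - monthly_overhead
    if contribution <= 0:
        return None
    return cac / contribution


def benchmark_tier(months):
    """Label a payback period using the tiers quoted above.

    Assumption: a value on a shared boundary (e.g. exactly 12 months)
    falls into the faster tier.
    """
    if months is None or months > 18:
        return "outside quoted range"
    if months <= 6:
        return "best"
    if months <= 12:
        return "better"
    return "good"


# Hypothetical customer: $1,200 CAC, $200 revenue per month.
naive = payback_months(1200, 200, gross_margin=1.0)
loaded = payback_months(1200, 200, gross_margin=0.75, monthly_overhead=50)

print("Ad spend only:", naive, "months ->", benchmark_tier(naive))
print("Fully loaded: ", loaded, "months ->", benchmark_tier(loaded))
```

In this made-up case the naive view reports six months, while the loaded view doubles that to twelve. The gap is the hidden drag Sam describes, and it is a reason to write the cost list down before agreeing on a window.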

So what should a healthy payback process include?

- A shared payback window, agreed by growth, product, and finance.

- A written definition of LTV, including what is excluded.

- A list of real costs, not just ad spend.

- Regular check-in dates to compare forecasted versus actual cohort behavior.

- A rule for what happens if the curve misses.

Teams usually define the target, but they rarely define the consequence. If actual payback slips, do we cut bids? Tighten targeting? Fix onboarding? Rework monetization? Reset the window? If nobody agrees on that in advance, payback becomes a political weapon instead of a planning tool.

That is exactly the kind of mess acquisition architecture should prevent.

The Leading Indicators That Matter Before CAC Settles Down

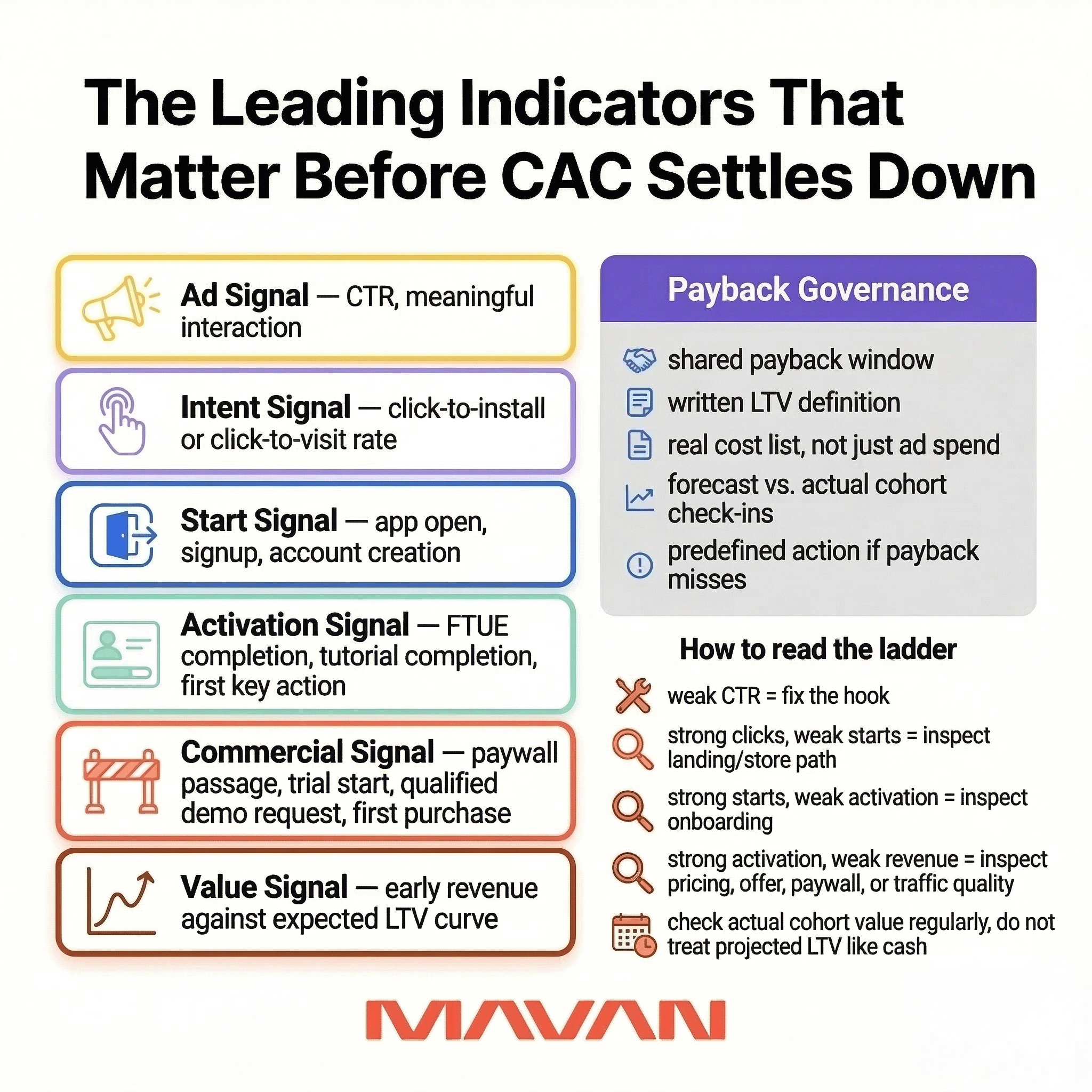

Before CAC stabilizes, we should not stare at blended efficiency and hope clarity appears. We need a ladder of signals. Each step should tell us whether the next step has a chance to happen. That is how we avoid overreacting to noise and underreacting to real breakdowns. Sam McLellan, MAVAN’s VP of Growth, describes this as starting “all the way to the top” of the funnel, then moving down only as the system earns more evidence.

Sam’s first question is simple: are people even engaging with the ad? He starts with click-through rate and meaningful interaction, because a weak hook makes every later metric worse. If the ad does not earn attention, we are not testing acquisition. We are funding invisibility.

Then we move one step deeper. Sam looks at click-to-install rate and app open rate, because a download is not the same as a real start. People get distracted and apps disappear into folders. Intent leaks between install and first open. That gap matters because it tells us whether the promise in the ad survives contact with the store page and the device itself.

After that, the signals need to get more behavioral. Sam’s sequence is sign-up, FTUE completion, tutorial completion, paywall passage, or free-trial progress, depending on the product. Those are the moments where traffic turns into real product exposure. They are not revenue yet, but they are far closer to value than impressions, clicks, or installs alone.

That is why we recommend reading early performance in layers:

- Ad signal: CTR and meaningful interaction.

- Intent signal: click-to-install or click-to-visit rate.

- Start signal: app open, signup, or account creation.

- Activation signal: FTUE completion, tutorial completion, or first key action.

- Commercial signal: paywall passage, trial start, qualified demo request, or first purchase.

- Value signal: early revenue mapped against the expected LTV curve.

This is also where false wins show up. Sam gave a sharp example from rank-pushing campaigns. Top-line traffic can spike and make the day look great. But those installs are often low-intent. Many never reach signup or get retained. If product and finance only see the surface, they may think acquisition is working right before monetization and retention say the opposite.

The right leading indicator is not the earliest metric. It is the earliest metric that still predicts value. That is the standard. Not what can be measured fastest, but what can be measured early that still tells the truth.

For most teams, the working discipline should look like this:

- If CTR is weak, fix the hook first.

- If clicks are fine but starts are weak, inspect the store page or landing path.

- If starts are fine but activation is weak, inspect onboarding.

- If activation is fine but revenue lags, inspect pricing, paywall, offer, or traffic quality.

- If every early signal looks strong, check back later against actual cohort value.

That last step matters because Sam warned against a common failure: teams project LTV, call the cohort profitable, and never return to see whether reality matched the curve. CAC is real on day one. LTV is still a forecast until time proves it.
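The working discipline above can be sketched as a small function that walks the ladder from the top and reports the first layer that falls below its floor. The layer names and suggested fixes follow the list above. The floor values and observed rates are placeholders chosen for illustration, not benchmarks.

```python
# Ordered from top of funnel to bottom. Each entry pairs a layer with the
# fix suggested for it in the list above.
LADDER = [
    ("ad", "fix the hook"),
    ("start", "inspect the store page or landing path"),
    ("activation", "inspect onboarding"),
    ("commercial", "inspect pricing, paywall, offer, or traffic quality"),
]


def first_weak_layer(rates, floors):
    """Return (layer, suggested_fix) for the first layer below its floor.

    rates and floors map layer name -> rate between 0 and 1. Returns None
    when every layer clears its floor, which means "check back against
    actual cohort value later", not "done".
    """
    for layer, fix in LADDER:
        if rates[layer] < floors[layer]:
            return layer, fix
    return None


# Hypothetical floors and observed rates; set your own from real baselines.
floors = {"ad": 0.010, "start": 0.30, "activation": 0.40, "commercial": 0.05}
observed = {"ad": 0.018, "start": 0.42, "activation": 0.25, "commercial": 0.02}

print(first_weak_layer(observed, floors))
```

Here the commercial rate is also below its floor, but the function reports activation first. That ordering is deliberate: a downstream metric is hard to judge while the step feeding it is still broken.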

The Founder Conversation Nobody Likes: Your Product May Not Be Ready to Scale

When CAC climbs, it can feel kinder to blame the channel or algorithm. That doesn’t make it true, though.

Sam McLellan, MAVAN’s VP of Growth, says he has had to tell founders and executives that their product simply isn’t ready to go to market. His point is simple: if the revenue, engagement, and retention signals are not there, paid acquisition will not magically create them. It will just make the weakness more expensive.

This isn’t an insult to the founder or proof the product is bad. It is a sequencing problem. A product can be marketable enough to get installs, clicks, or trials. That does not mean it is scalable. Sam drew that distinction clearly. A product might get people in the door, but still lack the revenue, engagement, and retention needed to support durable acquisition.

That is important to know for founders moving from hustle-driven GTM to a repeatable growth system. Their fear of burning runway before they find a scalable channel, or discovering too late that the GTM story does not really resonate, is not abstract.

But throwing money at acquisition is often a substitute for doing the slower work of product readiness.

That slower work usually lives in four places:

- activation

- retention

- monetization

- measurement

If one of those is weak, acquisition could get blamed for a product problem.

Sam shares a sharp example. A subscription-based company saw very strong free-trial starts. On the surface, it looked great. But paid conversion later collapsed into the single digits. The problem was not just the ad. The company had attracted people who were willing to start a trial, but unlikely to become paying customers. In that case, many were teenagers without credit cards. Early demand looked promising. Actual monetization said otherwise.

That is the danger of scaling too early. Spend amplifies whatever is true. If the product works, scale helps. If the product leaks value, scale just widens the leak.

If the product is not ready to retain or monetize demand, more acquisition is not ambitious hope — it is acceleration toward failure.

That does not mean we freeze every time the product is imperfect. No early-stage product is perfect. It means we need a readiness test before we scale.

We recommend four questions:

- Do users reach the key activation moment consistently?

- Do meaningful cohorts stick around long enough to matter?

- Does monetization happen at a rate that can support payback?

- Can we measure those answers with enough confidence to act?

If the answer to any of these is no, the next move is not always to stop everything. Sometimes the right move is limited spend for learning. Sam notes that, in some cases, a partial return can be acceptable in the short term if the goal is to gather data and improve the product. But that only works if everyone is honest about the tradeoff.
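The four readiness questions can be turned into a simple check that a team runs before each budget increase. The sketch below is one way to do that. The field names and default thresholds are assumptions made for illustration, and payback is computed with the standard definition of CAC divided by monthly margin per customer.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CohortSnapshot:
    activation_rate: float     # share of new users reaching the key activation moment
    day30_retention: float     # share of the cohort still active at day 30
    cac: float                 # cost to acquire one paying customer
    monthly_margin: float      # gross margin per paying customer per month
    observed_conversions: int  # rough proxy for measurement confidence


def payback_months(s: CohortSnapshot) -> float:
    """Months of margin needed to recover CAC."""
    if s.monthly_margin <= 0:
        return float("inf")
    return s.cac / s.monthly_margin


def readiness_gaps(
    s: CohortSnapshot,
    min_activation: float = 0.40,  # hypothetical threshold
    min_retention: float = 0.20,   # hypothetical threshold
    max_payback: float = 12.0,     # hypothetical threshold, in months
    min_conversions: int = 100,    # hypothetical threshold
) -> List[str]:
    """List which of the four readiness areas fall short."""
    gaps = []
    if s.activation_rate < min_activation:
        gaps.append("activation")
    if s.day30_retention < min_retention:
        gaps.append("retention")
    if payback_months(s) > max_payback:
        gaps.append("monetization")
    if s.observed_conversions < min_conversions:
        gaps.append("measurement")
    return gaps


snapshot = CohortSnapshot(0.55, 0.12, 90.0, 10.0, 240)
gaps = readiness_gaps(snapshot)
print("scale" if not gaps else f"limited spend for learning; fix: {gaps}")
```

An empty list supports scaling. Anything else supports capped spend for learning, with the named gaps as the agenda for what must improve first.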

That honesty is where most teams struggle. Founders are naturally emotionally tied to their product. But leadership still has to separate love from evidence. Our job, if we are serious about growth, is not to flatter the product. It is to protect the company.

So when this conversation arrives, we should handle it with care and firmness:

- name the evidence

- separate product potential from product readiness

- define what must improve before scale

- agree on what “ready” will mean

- spend only against that reality

That is not pessimism. It’s stewardship. That is how we protect runway, team morale, and the chance to scale something real.

What Good Acquisition Architecture Looks Like in Practice

Theory matters. Pattern matters more.

When acquisition architecture is working, we can usually see the same sequence. The team gets clearer on measurement. It narrows the real objective. It improves the conversion path. Then it expands with discipline.

That pattern shows up across MAVAN’s case studies. The channels change. The products change. The mechanics do not. The work starts by making the system more legible, not more chaotic. That means end-to-end execution across acquisition, data, experimentation, product, creative, and lifecycle — with line of sight across the whole funnel.

ElevenLabs — prove the channel, then scale it

With ElevenLabs, MAVAN did not treat search as a side test forever. It treated it as a growth engine once the economics held. Our team used proven campaign structures, disciplined bid management, and international expansion logic to turn early search experiments into a scalable program across Google and Bing. The result: search spend scaled from zero to a high six-figure monthly budget while maintaining sub-12-month payback and expanding into 20-plus international markets with positive incremental ROI.

Luke Harries, Head of Growth, said the program became efficient enough to transition in-house after MAVAN proved it out.

Fireflies — expansion works better when the signal is clean

Fireflies shows a similar pattern, but with a different constraint. The problem was not how to run more search. It was how to scale beyond the U.S. without losing efficiency. We identified stronger geographies, prioritized higher-ROAS search campaigns, refined non-brand keyword sets, and used targeted tCPA bidding to keep spend under control. The result was six-figure spend in four months, about 1.5x ROAS on non-brand campaigns, and launches in 31 new regions.

Dionatan Korb, Growth Lead, credited MAVAN with helping the team identify the right markets, optimize customer value, and find scale efficiently.

KidStrong — creative and CRO are acquisition work

KidStrong is useful because it breaks the lazy habit of blaming media first. MAVAN improved paid acquisition by testing authentic creative, guiding paid media execution, and optimizing signup flows through landing page CRO. The result was a 32% increase in conversions.

Erin Clift, KidStrong’s CMO, said MAVAN also helped cut customer acquisition costs by 60%.

Titan — better measurement changes the economics

Titan’s case points to the part many teams resist. Sometimes the real leverage sits inside tracking and attribution. Titan needed to scale paid acquisition, validate creative strategy, and rebuild tracking infrastructure for long-term growth. MAVAN focused on lowering blended CAC, prioritizing top-performing channels, and overhauling attribution and tracking. As a result, CAC fell by more than 3x while paid acquisition scaled by 5x. Better measurement was part of what made that possible.

Angus Kirby, Director of Marketing, said MAVAN’s value was not just strategy, but execution through a coordinated specialist model.

The key takeaway from all of MAVAN’s case studies

Good acquisition architecture does not chase one heroic lever. It makes the whole system easier to trust.

Across these cases, the repeated lesson is simple:

- start with clarity

- fix the weak link

- scale only what earns scale

- treat creative, product, and measurement as one operating system

That is what “good” looks like in practice. It is not louder. It is not more fragmented. It is not built on hope. It is structured, legible, and accountable.

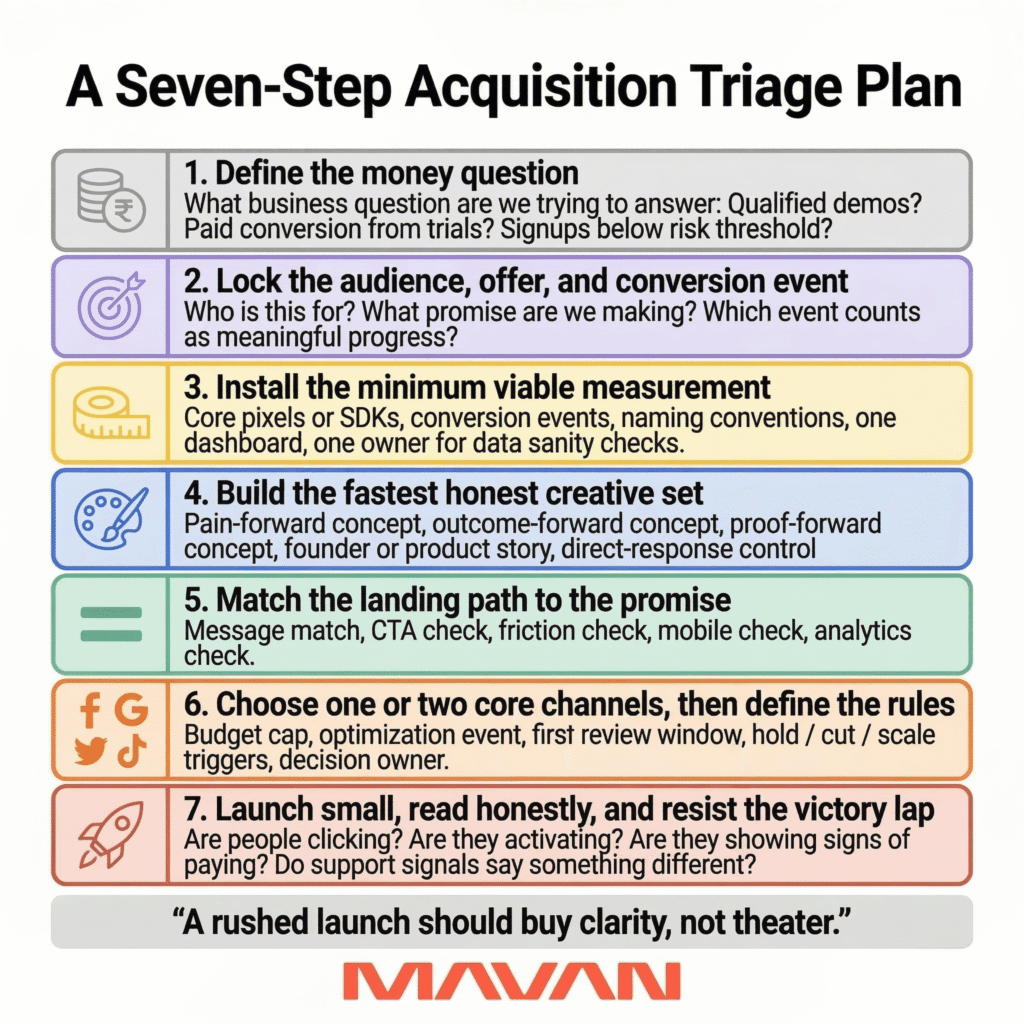

A Seven-Step Acquisition Triage Plan

Sometimes a team has only days to get a launch off the ground. That is not ideal.

Sam McLellan, MAVAN’s VP of Growth, calls it a true nightmare scenario when engineering is handed an attribution SDK with only days left before launch. In that case, he says, the honest answer may be that a few days is not enough time to do what is needed. But if the launch still has to happen, then a few minimums are non-negotiable: tracking, some form of creative, and a clear plan for what happens in the first 30, 60, and 90 days. Without those, fast execution just means fast confusion.

A rushed launch needs triage. We should focus on what will let us learn honestly, protect runway, and avoid scaling a false positive. As Sam notes, we should not launch blind.

Here is the seven-step triage plan we recommend.

Step 1 — Define the money question

We should start with one clear objective — not to run paid ads or drive awareness, but to get an answer to a specific business question.

Examples:

- Can this channel produce qualified demos?

- Can this product convert trial starts into paid users?

- Can this offer generate signups below our risk threshold?

If the team cannot name the question, the launch is not ready. Sam notes that the point is to know where the money is going.

Step 2 — Lock the audience, offer, and conversion event

This is where many teams drift into vagueness.

We need three decisions:

- who the launch is for

- what promise we are making

- which event counts as meaningful progress

Google Ads Help says conversion actions should reflect the business outcome that matters, such as a purchase, signup, or phone call. That is basic, but teams still skip it. They launch into a fog of “engagement,” then argue later about what success meant.

If the product has a long sales cycle, the event may be a qualified lead. If it is self-serve, it may be signup, trial start, or first purchase. What matters is that we choose it now.

Step 3 — Install the minimum viable measurement

This is the time for discipline.

Sam is clear that startups do not need the full enterprise stack on day one. If they are only using one or two major channels, platform-native tools can be enough to start. But they do need attribution at a basic level. They need to know where spend is going.

So Step 3 should cover:

- core pixels or SDKs

- conversion events

- naming conventions

- one dashboard or reporting view

- one owner for data sanity checks

Meta says Conversions API can improve measurement across the customer journey. Google says enhanced conversions and linked key events can improve matching and optimization. We should not overbuild here. We should make sure the system can see the actions we care about.
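Naming conventions are the cheapest item on that list to enforce, and a few lines of code can do it. The sketch below assumes a hypothetical convention of `<channel>_<geo>_<objective>_<audience>_<yyyymmdd>`. That pattern is an illustration, not a standard from any ad platform, so adapt the fields to whatever your reporting view needs to group by.

```python
import re
from typing import Optional

# Hypothetical convention: <channel>_<geo>_<objective>_<audience>_<yyyymmdd>
CAMPAIGN_NAME = re.compile(
    r"^(?P<channel>[a-z]+)"
    r"_(?P<geo>[a-z]{2})"
    r"_(?P<objective>[a-z]+)"
    r"_(?P<audience>[a-z0-9-]+)"
    r"_(?P<launch_date>\d{8})$"
)


def parse_campaign_name(name: str) -> Optional[dict]:
    """Split a campaign name into reporting fields, or return None if it breaks the convention."""
    match = CAMPAIGN_NAME.match(name)
    return match.groupdict() if match else None


names = ["meta_us_trial_lookalike-1pct_20250114", "Meta US Trial Test"]
for name in names:
    fields = parse_campaign_name(name)
    print(name, "->", fields if fields else "rename before launch")
```

Whoever owns data sanity checks can run a check like this against an export of campaign names before spend goes live, so the dashboard never has to guess which channel or audience a row belongs to.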

Step 4 — Build the fastest honest creative set

If the team has only a few days, Sam says the realistic output may be static creative. That is fine. The goal is not beauty. The goal is a testable promise. He also reminds us that, at launch, everything is a creative test. That means we should not act as if we already know the winner.

We recommend three to five variants built around different tensions:

- one pain-forward concept

- one outcome-forward concept

- one proof-forward concept

- one simple founder or product story

- one direct-response control

That is enough to learn from and to make better-informed decisions.

Step 5 — Match the landing path to the promise

This is where wasted spend often hides.

If the ad promises clarity, the page cannot create friction. If the ad sells one audience, the page cannot speak to three others. If the CTA asks for a big commitment, the path has to earn it.

So Step 5 should include:

- message match check

- CTA check

- form or signup friction check

- mobile check

- analytics check on the page itself

Step 6 — Choose one or two core channels, then define the rules

Sam says this plainly: if you have $10,000, do not spread it across 20 channels. Pick one or two. Get signal. Learn what the system can support.

This is also the step to define the rules before emotions take over.

We should write down:

- the budget cap

- the event we are optimizing toward

- the first review window

- the hold, cut, or scale triggers

- who gets to make the call
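Writing the rules down can be as literal as putting them in a small data structure that the team agrees on before launch. The sketch below is one possible shape. Every field name and number in it is a hypothetical example, and the hold, cut, or scale logic is a simple CPA comparison rather than a recommendation from Sam.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LaunchRules:
    budget_cap: float        # total spend allowed for this test
    optimization_event: str  # the event we are optimizing toward
    review_window_days: int  # first point at which we read results
    min_conversions: int     # signal needed before judging CPA
    cut_above_cpa: float     # cost per conversion that triggers a cut
    scale_below_cpa: float   # cost per conversion that earns more budget
    decision_owner: str      # who gets to make the call


def decide(rules: LaunchRules, spend: float, conversions: int, days_elapsed: int) -> str:
    """Return 'hold', 'cut', or 'scale' from the pre-agreed rules."""
    if days_elapsed < rules.review_window_days:
        return "hold"  # too early to read
    if conversions < max(rules.min_conversions, 1):
        # Not enough signal: keep waiting unless the budget is already spent.
        return "cut" if spend >= rules.budget_cap else "hold"
    cpa = spend / conversions
    if cpa > rules.cut_above_cpa:
        return "cut"
    if cpa < rules.scale_below_cpa:
        return "scale"
    return "hold"


rules = LaunchRules(
    budget_cap=10_000,
    optimization_event="trial_start",
    review_window_days=7,
    min_conversions=30,
    cut_above_cpa=80.0,
    scale_below_cpa=40.0,
    decision_owner="growth lead",
)
print(decide(rules, spend=3_000, conversions=100, days_elapsed=7))  # CPA is 30
```

The value is less in the code than in the commitment. Once the thresholds are fixed in advance, a bad day three cannot trigger a panic cut, and a lucky day two cannot trigger a premature scale.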

Step 7 — Launch small, read honestly, and resist the victory lap

Launch day should not be framed as proof. It should be framed as the start of learning.

Sam’s warning here is especially useful. He has seen teams celebrate huge free-trial starts, only to learn a week later that paid conversion was terrible because they had attracted the wrong audience. He cites one example where 75% of incoming traffic started a free trial, but paid conversion later fell into the single digits. The top of funnel looked healthy. The business result did not.

That is why Step 7 is about restraint.

We should ask:

- Are people clicking?

- Are they starting?

- Are they activating?

- Are they showing signs of paying?

- Are support or refund signals telling a different story?

If the answer is mixed, that is not failure. It is information for growth.
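The free-trial example shows why the top of the funnel can mislead. Here is a short worked calculation. The 75% trial-start rate comes from Sam’s example. The spend, visitor count, and 5% trial-to-paid rate are hypothetical stand-ins, with 5% chosen only because it sits in the single digits.

```python
def funnel_costs(spend: float, visitors: int, trial_start_rate: float, trial_to_paid_rate: float) -> dict:
    """Compare the cost of a trial start with the cost of a paying customer."""
    trials = visitors * trial_start_rate
    paid = trials * trial_to_paid_rate
    return {
        "trials": trials,
        "paid_customers": paid,
        "cost_per_trial": spend / trials if trials else float("inf"),
        "cost_per_paid": spend / paid if paid else float("inf"),
    }


# Hypothetical inputs: $10,000 spend and 4,000 visitors.
result = funnel_costs(spend=10_000, visitors=4_000, trial_start_rate=0.75, trial_to_paid_rate=0.05)
print(f"cost per trial: ${result['cost_per_trial']:.2f}")         # about $3.33
print(f"cost per paying customer: ${result['cost_per_paid']:.2f}")  # about $66.67
```

Under these assumptions a trial costs about $3.33, which looks excellent, while a paying customer costs about $66.67, twenty times more. Whether that second number works depends entirely on what a paying customer is worth, which is why the read has to continue past the trial start.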

What a seven-step launch plan should do

A seven-step launch should buy clarity, not theater.

That is the whole point of this plan. We are not trying to look sophisticated in a week. We are trying to create enough truth to decide the next move.

So the working sequence is simple:

- define the question

- choose the audience and event

- instrument the basics

- launch testable creative

- fix the landing path

- limit channel sprawl

- read the first signals with discipline

That is how we protect the team from false confidence. It is also how we give the launch a real chance to become something scalable later.

A Simple Plan for Your Next Few Days

Acquisition rarely breaks in one obvious place. It breaks across systems. What helps is a clearer system, a sharper diagnosis, and a team that can help you fix the real constraint.

So here is the simplest next step we can offer:

- If you need a fast read on where acquisition is actually breaking, reach out to us about an acquisition architecture diagnostic.

- We will help you identify the weak link, the signal gaps, and the next highest-leverage fix.

- Then you can decide what to do with clarity, not panic.

Because that is what good growth work should do. It should lower the noise, tell the truth, and make the next move easier to trust.

Frequently Asked Questions About Scaling Acquisition Architecture

What is acquisition architecture?

Acquisition architecture is the full system behind growth: objective, audience, conversion path, creative, landing experience, measurement, and decision rules.

Why isn’t rising CAC always a paid media problem?

Because higher CAC can be caused by product issues, weak conversion, poor attribution, thin signal, or channel expansion mistakes — not just campaign performance.

What should teams diagnose before changing spend?

Start by asking whether you’re attracting the wrong traffic, measuring the wrong actions, converting poorly after the click, or missing enough signal to trust the data.

When is a product ready to scale acquisition?

Usually when activation is consistent, retention is meaningful, monetization supports payback, and measurement is strong enough to guide decisions.

Should startups invest in a full attribution stack right away?

Not always. If you’re only running one or two major channels, native platform tracking can often be enough to start.

What is minimum viable measurement?

At minimum: pixels or SDKs, key conversion events, naming conventions, one reporting view, and one owner for data sanity checks.

How many channels should a team use at launch?

Usually one or two. Spreading limited budget across too many channels kills signal and makes learning harder.

Why can more segmentation make results worse?

Because small budgets don’t buy enough data for precision; too much granularity can create noise instead of insight.

How should B2B acquisition differ from D2C?

B2B usually needs more trust, education, nurture, and time, while D2C usually rewards speed, clarity, and low-friction conversion paths.

What role does creative play in acquisition architecture?

Creative is not decoration — it is part of the system, because it shapes promise, audience fit, click quality, and downstream conversion.

Why does the landing page matter so much?

Because if the page breaks the promise of the ad, adds friction, or asks for too much too soon, paid traffic gets wasted fast.

What should teams do if acquisition is underperforming?

Don’t rush to spend more; find the real weak link first, fix the system constraint, and scale only what earns scale.

What should a rushed launch still include?

A clear money question, defined audience and conversion event, basic tracking, testable creative, aligned landing path, limited channels, and disciplined review windows.

What is the biggest mistake growth teams make?

Treating acquisition like a set of isolated tactics instead of one connected operating system across media, product, creative, lifecycle, and measurement.

What’s the fastest next step if we’re not sure where growth is breaking?

Run an acquisition architecture diagnostic to identify the weak link, close signal gaps, and prioritize the highest-leverage fix. If you need help, reach out to us!
