TLDR — 10 Takeaways For Returning Decision-Making to Board Meetings

- Build a one-page scoreboard that drives decisions, not debate.

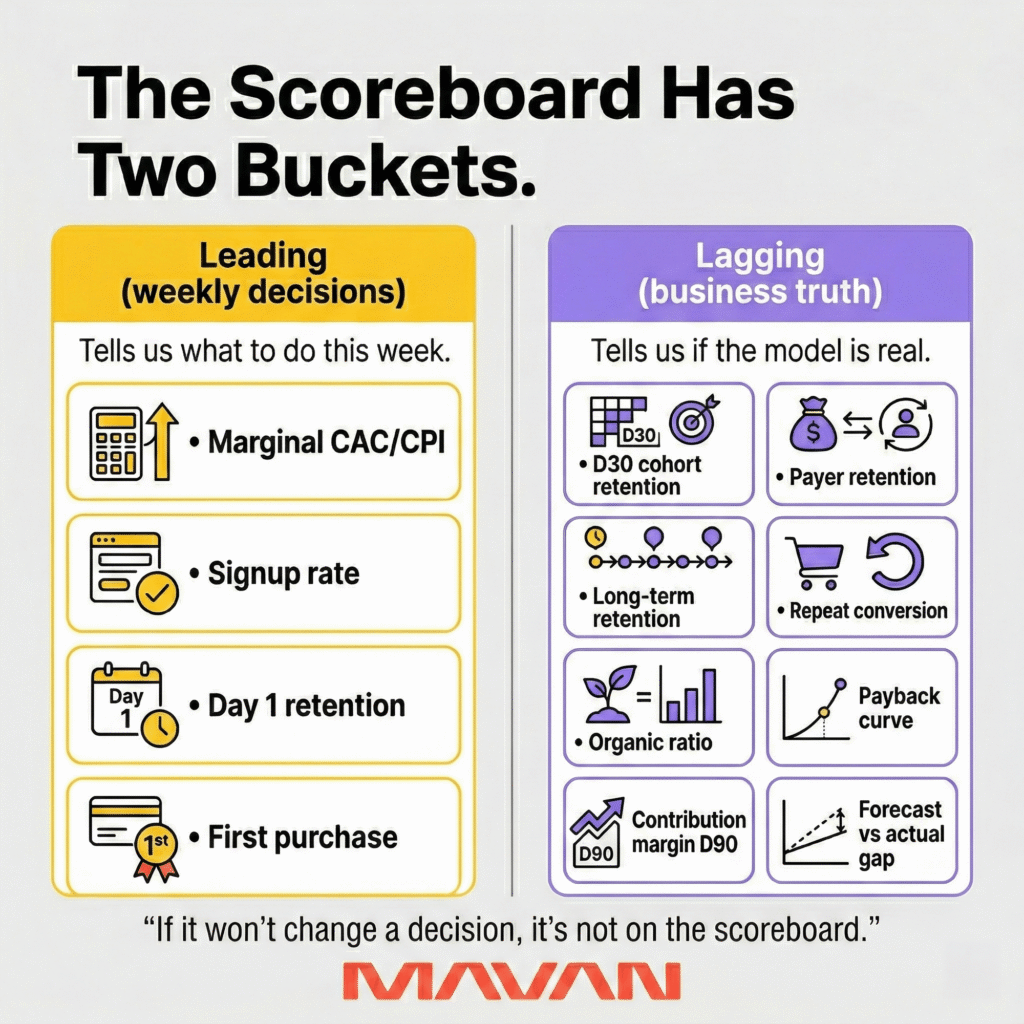

- Split metrics into two buckets: leading indicators for weekly action, lagging indicators for business truth.

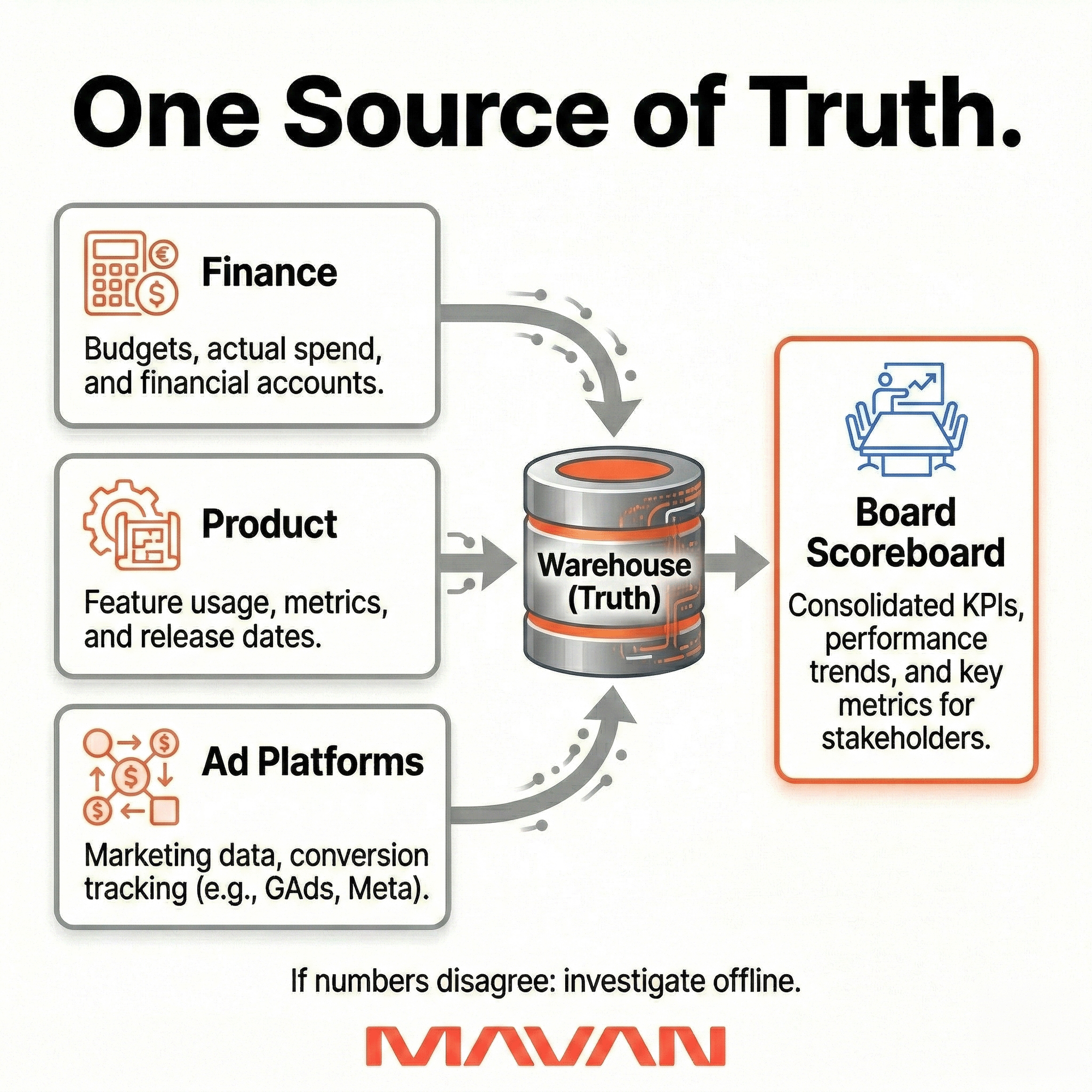

- Use one agreed source of truth across Product, Growth, Finance, and Analytics.

- Define every metric once: formula, time window, cohort rule, inclusions, exclusions, and decision trigger.

- Assign one clear owner to every metric so accountability does not get diffused.

- Use red/yellow/green thresholds instead of vague targets so each metric changes behavior.

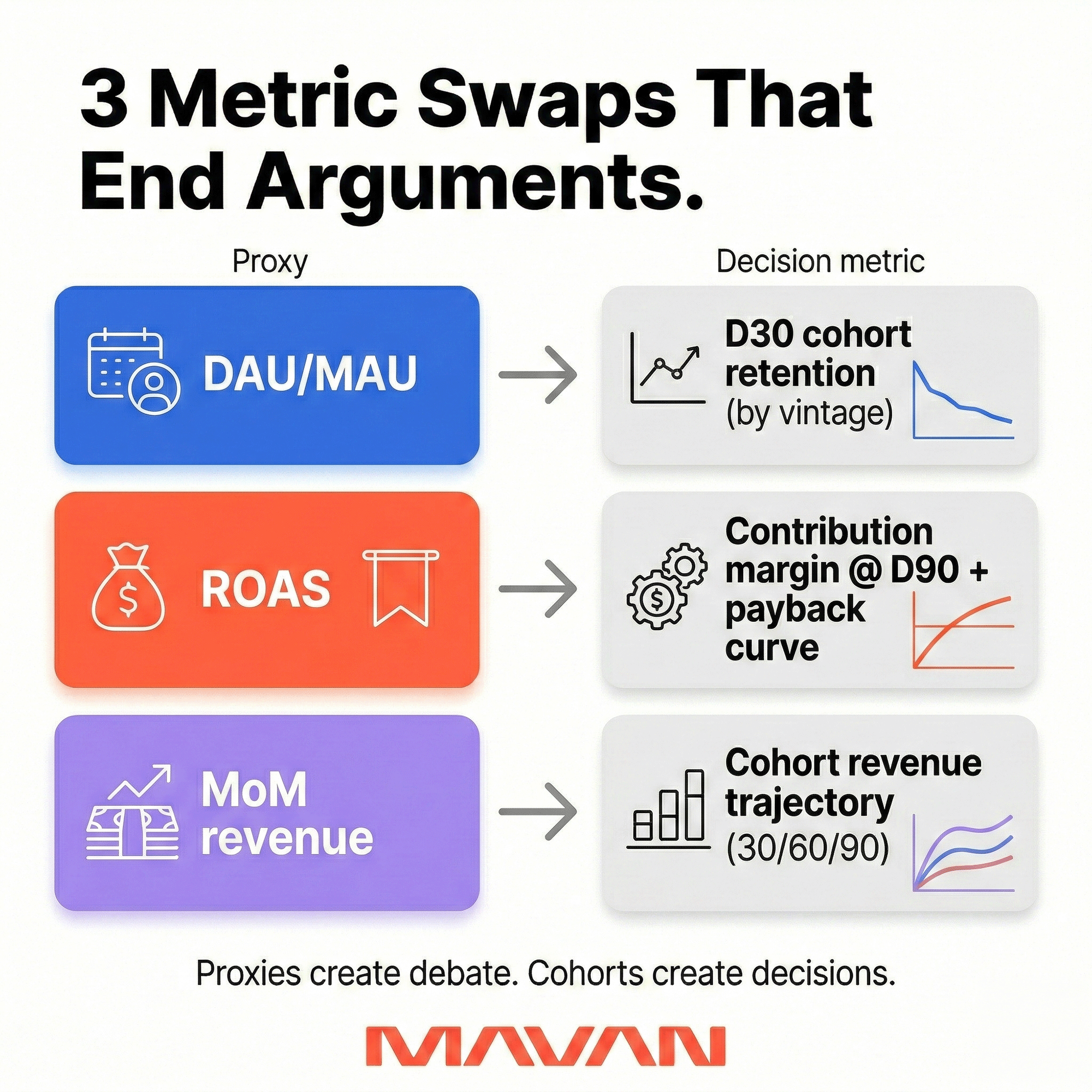

- Replace weak proxies like DAU/MAU and gross ROAS with cohort retention, contribution margin, and payback reality.

- Keep board meetings to one level of drill-down; deeper analysis belongs outside the room.

- Send a disciplined pre-read early with the scoreboard, key variances, tradeoffs, and clear asks.

- If your board meetings keep turning into metric debates, reach out to MAVAN and we’ll build a scoreboard together.

When board meetings go wrong

You walk into the board meeting with hope. The soft launch looks stable and the gates you set look good. You get the green light to go global and the board wants you to ship it immediately.

It’s understandable because that’s ultimately how you maximize revenue.

But you have reservations. Some of the metrics are looking weaker than you’d expect. The board chalks this up to a localization issue that can be easily fixed. But you’re worried that the issue runs deeper.

The top line can hide weak foundations

MAVAN President Dan Barnes has been in meetings like this.

He recalls one such meeting for a game where his team had a low CPI target and was hitting middle-of-the-road CPI. LTV looked good for some cohorts, but retention was bad.

From the top line, ROI looked okay, but it was being driven by a very small group of extremely engaged users who didn’t care about the game genre, only the systems of the game.

Dan worried that, at scale, those numbers would decay. CAC would go the wrong way and they wouldn’t find the kind of scale the business needed.

Truthfully, both sides could find data that fit their story. As Dan notes, “the argument wasn’t actually about the data — it was about conviction and ownership.”

So he made an executive decision. And they did not ship globally. They went back to the drawing board. And, in retrospect, Dan still believes it was the right thing to do.

What this reveals about the need for a board-ready scoreboard

Dan’s experience underlines the critical need for a shared reality. Boards are not villains, and neither are operators. One is simply there to reduce risk and the other is there to create sustainable momentum.

Having a board-ready scoreboard can help alleviate arguments and disagreements by building that shared reality between people and teams who sometimes have conflicting priorities.

In this article we’ll show you how boards and operators interact and the best way to build that shared reality, so everyone can work together constructively and walk forward with conviction and ownership.

Why boards butt heads with operators over growth

Most growth arguments in board meetings are not about charts. They are about risk, time, and trust. Boards are trying to decide where capital goes next. Operators are trying to protect learning time. Those goals can align. They often don’t by default.

Dan’s experience highlights this fact.

But the truth is that growth is not one number, nor does it fall under one team. It’s a system of cause and effect across functions. Marketing influences who arrives. Product influences whether they stay. Data influences what you believe. Finance influences what you can afford. When those functions do not share the same definitions or numbers, boards do not get one narrative. They get many different narratives.

Dan notes that this is actually the biggest challenge he sees in most companies.

“Multiple sources of truth means there’s no way to be objective about outcomes,” he says. “And when you can’t be objective about outcomes, accountability falls through the cracks. Everyone can always find a number that defends their position, which means no one is ever actually wrong, which means nothing changes.”

Dan’s solution to this is a simple one on the surface: There needs to be a single source of truth. Finance needs to be using the same numbers that Product uses, and so on. That means having one warehouse and one refresh cadence that is signed off across functions quarterly. When numbers disagree in a board meeting, the answer should always be that the warehouse number is truth, and that any discrepancies will be investigated offline.

“That’s the only answer that doesn’t derail the meeting,” Dan explains.

More slides do not fix an unclear direction or conflicting directions. What fixes it is a scoreboard designed for decisions. It forces one shared reality and reduces the opportunity for conflicting narratives to crop up.

The scoreboard principle: Two buckets, one job

A board-ready scoreboard has metrics that the whole room can remember. It’s one page that drives decisions, not debate.

When deciding on which metrics to focus on, MAVAN President Dan Barnes likes to organize things into two buckets: 1) leading indicators and 2) lagging indicators. He prefers that split because, in his experience, this matches how decisions actually happen. “Leading indicators tell you whether to keep spending or pull back right now,” he says. “Lagging indicators tell you whether the underlying business model is sound. They answer different questions and they sit in different parts of the board conversation.”

Bucket one: leading indicators tell us what to do right away

Dan’s leading indicators are early signals that sit near the top of the funnel. Leading indicators answer one question: Should we keep spending or pull back right now?

Dan names four examples:

- CPI

- Install-to-registration

- Day 1 retention

- First purchase conversion

These tell you quickly whether you have a problem before it shows up in the numbers that matter.

Bucket two: lagging indicators tell us if the business model is sound

Lagging indicators confirm business health over time. Is the business model actually sound, or are we just renting growth? Dan named the following metrics to focus on here:

- Payer retention

- Long-term retention

- Repeat conversion rate

- Organic ratio within DAU

- ROAS over time

- Discrepancy between forecast ROAS and actual ROAS

Dan notes that the last one is critical. “That gap between what you modeled and what you got is one of the most honest signals you have about whether your acquisition economics are working or degrading,” he says.
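As a minimal sketch of how that gap could be tracked, the Python below computes the relative difference between forecast and actual ROAS per cohort and checks whether it has widened over recent cohorts. The function names, the relative-error formula, and the three-step window are illustrative assumptions, not a prescribed method.

```python
def roas_gap(forecast, actual):
    """Relative model error; negative means actual ROAS came in under forecast."""
    if forecast <= 0:
        raise ValueError("forecast ROAS must be positive")
    return (actual - forecast) / forecast


def is_widening(gaps, window=3):
    """True if the absolute gap grew at each of the last `window` steps."""
    recent = [abs(g) for g in gaps[-(window + 1):]]
    if len(recent) < window + 1:
        return False  # not enough cohorts to judge a trend
    return all(earlier < later for earlier, later in zip(recent, recent[1:]))


# Hypothetical monthly cohorts: (forecast ROAS, actual ROAS).
cohorts = [(1.20, 1.17), (1.20, 1.14), (1.20, 1.08), (1.20, 0.96)]
gaps = [roas_gap(f, a) for f, a in cohorts]
print([round(g, 3) for g in gaps])
print(is_widening(gaps))
```

In this made-up series the shortfall grows with every cohort, so the check flags it. A real version would likely wait until each cohort is mature enough for its actual ROAS to be comparable.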

Three board-favorite metrics that create bad decisions (and what we use instead)

Boards ask for simple proxies because they need speed. But these proxies can invite conflicting narratives, as seen above. A good scoreboard’s job is to remove that wiggle room. Here are three metrics we see boards default to — and the swaps that tend to end arguments.

“Engagement” as DAU/MAU

Why boards like it: It feels like a clean usage signal. It looks like product health.

Why it breaks: “Active” is easy to define loosely. It is also easy to define differently across teams. You can get a “healthy” ratio while retention decays. You can also spike it with first-time users. Andreessen Horowitz calls this out directly. They warn that active users can have almost unlimited definitions, and they push teams to be explicit. They also point investors toward retention by cohort as a clearer read.

What we use instead: Day 30 retention by cohort vintage. Dan’s preferred replacement is cohort retention. Not DAU/MAU. It forces the question that matters: Do users you acquired in month X still show up later?
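To make "retention by cohort vintage" concrete, here is a small Python sketch. It assumes a user counts as retained if they were active exactly 30 days after install, and it groups cohorts by install month. Both choices, along with the function name and input shape, are our assumptions for illustration. Your written metric definition should decide them.

```python
from collections import defaultdict
from datetime import date, timedelta


def d30_retention_by_vintage(users, as_of):
    """Day 30 retention per acquisition month.

    users: iterable of (install_date, active_dates) pairs, where active_dates
           is a set of dates on which the user was active.
    as_of: users whose Day 30 falls after this date are skipped.
    Assumes "retained" means active exactly 30 days after install.
    """
    installed = defaultdict(int)
    retained = defaultdict(int)
    for install_date, active_dates in users:
        day30 = install_date + timedelta(days=30)
        if day30 > as_of:
            continue  # too young to have a Day 30 reading
        vintage = install_date.strftime("%Y-%m")
        installed[vintage] += 1
        if day30 in active_dates:
            retained[vintage] += 1
    return {v: retained[v] / installed[v] for v in sorted(installed)}


# Hypothetical users: (install date, dates the user was active).
users = [
    (date(2024, 1, 5), {date(2024, 2, 4)}),    # back on Day 30
    (date(2024, 1, 10), {date(2024, 1, 11)}),  # gone by Day 30
    (date(2024, 2, 1), {date(2024, 3, 2)}),    # back on Day 30
    (date(2024, 3, 20), set()),                # Day 30 not reached yet
]
print(d30_retention_by_vintage(users, as_of=date(2024, 4, 1)))
# {'2024-01': 0.5, '2024-02': 1.0}
```

Skipping users who have not reached Day 30 keeps young cohorts from looking artificially weak.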

“Marketing efficiency” as gross ROAS

Why boards like it: It looks objective. It feels like “profit per dollar.”

Why it breaks: ROAS is revenue divided by ad spend. It is not profit. It also hides timing. A campaign can look great in-week and fail over 60 days. This is also where measurement design shapes behavior.

What we use instead: Contribution margin per acquired user at Day 90, plus the payback curve. Dan’s scoreboard replaces gross ROAS with contribution margin per acquired user at a fixed horizon. It is harder to game. It is also closer to business truth. Andreessen Horowitz makes a similar point when they define LTV using contribution margin, not revenue. They also tie contribution margin to CAC payback decisions.
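The difference between the two views is easy to see with numbers. The sketch below is illustrative only: the function names, the single variable-cost total, and the cohort figures are hypothetical. It treats contribution margin as revenue minus variable costs and compares it with CAC to read payback.

```python
def gross_roas(revenue, ad_spend):
    """Revenue divided by ad spend. Not profit."""
    return revenue / ad_spend


def contribution_margin_per_user(revenue, variable_costs, users):
    """Revenue minus variable costs, per acquired user.

    Which costs count as variable (store fees, payment fees, refunds, ...)
    is a definition the team has to agree on; this sketch takes one total.
    """
    return (revenue - variable_costs) / users


def payback_day(cumulative_margin_per_user, cac):
    """First day on which cumulative margin per user covers CAC, else None.

    cumulative_margin_per_user: {day: cumulative contribution margin per user}.
    """
    for day in sorted(cumulative_margin_per_user):
        if cumulative_margin_per_user[day] >= cac:
            return day
    return None


# Hypothetical cohort: 1,000 users acquired for 5,000 in ad spend.
users, ad_spend = 1_000, 5_000.0
cac = ad_spend / users
d90_revenue, d90_variable_costs = 6_000.0, 1_800.0

print(gross_roas(d90_revenue, ad_spend))
print(contribution_margin_per_user(d90_revenue, d90_variable_costs, users))
print(payback_day({30: 2.0, 60: 3.4, 90: 4.2}, cac))
```

With these made-up numbers, gross ROAS is 1.2, which looks healthy. Contribution margin per user at Day 90 is 4.20 against a CAC of 5.00, so the cohort has not paid back inside the window and `payback_day` returns `None`.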

“Momentum” as month-over-month revenue growth

Why boards like it: It answers the funding question fast: Are we growing?

Why it breaks: MoM growth is noisy. It can be pulled forward by promos. It can also hide cohort decay. A business can show rising revenue while new cohorts worsen. Andreessen Horowitz warns about this class of charting error. They note that cumulative charts can “go up and to the right” even while the business shrinks. They argue this is not a useful health indicator.

What we use instead: Cohort revenue trajectory at 30/60/90 days. Dan’s replacement is cohort-based. We track what each acquisition cohort does after signup. Then we compare cohorts over time.
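A cohort revenue trajectory can be sketched in a few lines of Python. The example assumes the trajectory means cumulative revenue per acquired user at each horizon. The function names and purchase data are hypothetical.

```python
def revenue_trajectory(cohort_size, purchases, horizons=(30, 60, 90)):
    """Cumulative revenue per acquired user at each horizon.

    purchases: list of (days_since_acquisition, amount) for one cohort.
    """
    return {
        h: sum(amount for day, amount in purchases if day <= h) / cohort_size
        for h in horizons
    }


def weaker_horizons(older, newer):
    """Horizons at which the newer cohort earns less per user than the older one."""
    return [h for h in older if newer[h] < older[h]]


# Hypothetical cohorts of 100 users each.
january = revenue_trajectory(100, [(1, 50.0), (20, 100.0), (45, 60.0), (80, 40.0)])
february = revenue_trajectory(100, [(2, 80.0), (25, 50.0), (50, 40.0), (85, 30.0)])
print(january)
print(february)
print(weaker_horizons(january, february))
```

Here the newer cohort trails the older one at every horizon. Month-over-month revenue could hide that if total installs are rising. Only compare horizons that both cohorts have actually reached.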

KPI design rules that keep everyone honest and keep the meeting moving

MAVAN President Dan Barnes gave four non-negotiables for board-ready KPIs: one definition, one owner, one source of truth, and a threshold. Here is what each rule means in practice — and how we implement it.

One definition (so we stop arguing about words)

If “CAC” means five things, it means nothing. Same with “retention,” “activation,” “payer,” and “organic.” We have watched teams burn weeks debating dashboards because the metric was never written down.

So we write definitions, keep them short, and make them testable.

For each metric on the scoreboard, we document:

- Plain-English meaning: What this metric is trying to tell us.

- Exact formula: Numerator and denominator. No ambiguity.

- Time window: Daily, weekly, Day 1, Day 30, Day 90.

- Cohort rule: “Users acquired in month X, tracked forward” (if cohort-based).

- Inclusions/exclusions: Refunds, fees, returns, internal traffic, bots.

- Decision link: What we will do if this goes red.
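One way to make a definition testable is to store it as structured data and check that nothing was left blank. The Python below is a minimal sketch of that idea. The class, its field names, and the example wording are our own illustration, not a standard schema.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class MetricDefinition:
    """One written definition per scoreboard metric (field names are illustrative)."""
    name: str
    meaning: str        # plain-English meaning
    numerator: str      # exact formula, top half
    denominator: str    # exact formula, bottom half
    time_window: str    # e.g. "Day 30"
    cohort_rule: str = ""               # only for cohort-based metrics
    exclusions: Tuple[str, ...] = ()    # e.g. refunds, internal traffic, bots
    red_action: str = ""                # decision link: what we do if this goes red

    def missing_fields(self):
        """Return the names of required fields that were left blank."""
        required = ("meaning", "numerator", "denominator", "time_window", "red_action")
        return [f for f in required if not getattr(self, f).strip()]


# Hypothetical entry with the decision link still unwritten.
d30 = MetricDefinition(
    name="Day 30 retention by cohort vintage",
    meaning="Share of an acquisition cohort still active 30 days after install",
    numerator="users in cohort active on Day 30",
    denominator="users in cohort",
    time_window="Day 30",
    cohort_rule="users acquired in month X, tracked forward",
    exclusions=("internal traffic", "bots"),
)
print(d30.missing_fields())  # ['red_action']
```

A check like this can run before every board cycle, so a metric without a decision link never reaches the page.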

One owner (so accountability has a home)

When ownership is shared, it is usually abandoned. That is not because people are lazy. It is because incentives split and time is finite.

Dan’s rule is simple: one owner per metric. We still collaborate cross-functionally. We just do not split responsibility for the number’s integrity.

We assign owners based on who can change the outcome:

- Acquisition efficiency metrics → Growth lead.

- Funnel conversion metrics → Product + Growth, with one accountable owner.

- Margin and payback metrics → Finance partner, in lockstep with Growth.

- Retention and cohort health → Product analytics owner.

Ownership also includes one job that feels small until it isn’t. The owner brings the metric to the meeting with a clean explanation. No hedging. No hand-waving. Just “here’s what changed, here’s why we think it changed, here’s what we’re doing.”

One source of truth (so we stop living in parallel universes)

Dan was blunt on this point. “You have to have a single source of truth, full stop.” He called mismatched numbers a process problem, not a data problem. We see the same failure pattern across companies.

Dan’s practical fix is also blunt:

- One warehouse.

- One refresh cadence.

- Quarterly sign-off across functions.

And when numbers disagree in a board meeting? The warehouse number is truth and any discrepancies will be investigated offline.

Thresholds, not targets (so behavior changes on purpose)

Targets are aspirational, but thresholds are operational. Dan notes that most companies fail here. They set goals and call them controls, then act surprised when nothing changes.

We use red/yellow/green as behavior triggers:

- Red: Stop what we’re doing and escalate today.

- Yellow: Flag it and have a plan by next week.

- Green: Continue as is.

The key is that thresholds must be tied to action. Here is a simple way to set thresholds:

- Start with your last 8–12 weeks of variance.

- Define yellow as “outside normal variance.”

- Define red as “outside variance and financially meaningful.”

- Write the action beside each threshold.
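The steps above can be sketched in Python. Two assumptions are ours, not Dan's: "normal variance" is taken to be within two standard deviations of the recent mean, and "financially meaningful" is an amount the team supplies. The function and parameter names are illustrative.

```python
from statistics import mean, stdev

# Actions paraphrased from the red/yellow/green triggers above.
ACTIONS = {
    "red": "Stop and escalate today.",
    "yellow": "Flag it and have a plan by next week.",
    "green": "Continue as is.",
}


def classify(history, current, money_at_stake, material_amount,
             k=2.0, higher_is_worse=True):
    """Return "green", "yellow", or "red" for the current reading.

    history: the last 8 to 12 weekly values of the metric.
    money_at_stake: estimated cost of the current deviation.
    material_amount: what the team agreed counts as financially meaningful.
    k: standard deviations treated as normal variance (an assumption).
    """
    if not 8 <= len(history) <= 12:
        raise ValueError("expected 8 to 12 weeks of history")
    deviation = current - mean(history)
    if not higher_is_worse:
        deviation = -deviation
    if deviation <= k * stdev(history):
        return "green"
    return "red" if money_at_stake >= material_amount else "yellow"


# Hypothetical weekly CPI readings, in dollars.
cpi_history = [2.0, 2.1, 1.9, 2.0, 2.2, 1.8, 2.0, 2.1, 1.9, 2.0]
status = classify(cpi_history, current=2.5, money_at_stake=8_000, material_amount=5_000)
print(status, "->", ACTIONS[status])
```

The check is one-sided on purpose: a CPI that drops well below its usual range stays green. Set `higher_is_worse=False` for metrics like retention, where a fall is the problem.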

The “one drill-down” rule (so complexity doesn’t kill clarity)

Boards will always ask “why.” That is fair. The mistake is letting “why” turn into a full dashboard tour.

Dan’s rule is the cleanest: the board sees the top-line number. If it is yellow or red, one segmentation cut is allowed. By channel, by geo, or by product line. Never more than one level in the meeting itself.

This protects clarity. It also forces us to do analysis before the room arrives. We do not outsource what should be our own due diligence to a meeting that should be focused on decision-making.

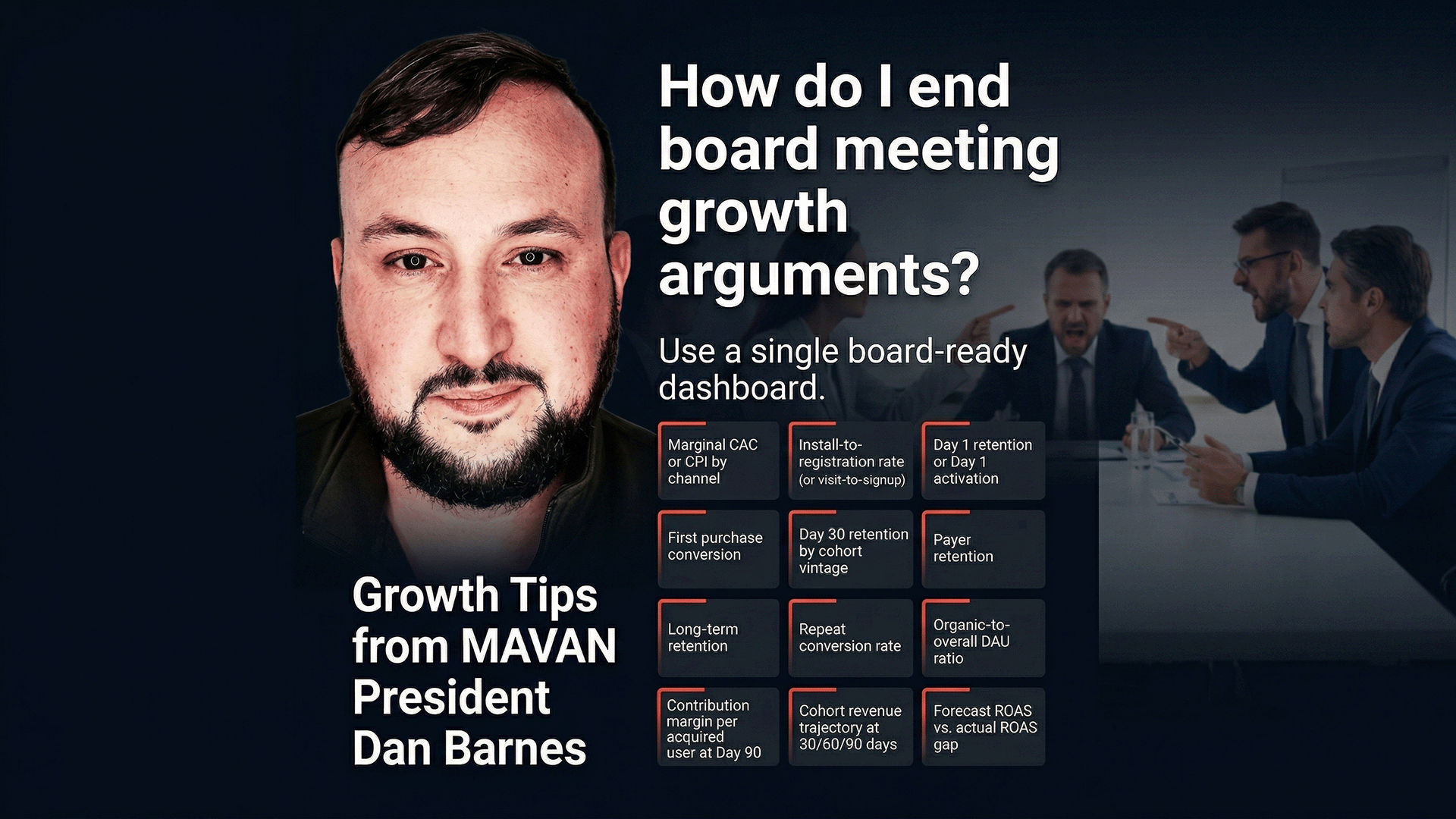

The actual scoreboard: 12 metrics that end arguments

We want the board looking at the same reality we run every day. Above, Dan framed the major KPIs to watch and the buckets they fall into. He also gave three swaps for metrics boards over-value: retention by cohort vintage, contribution margin per user, and cohort revenue trajectory.

We combine everything into one scoreboard: 12 numbers, total.

Leading indicators (fast signal, weekly decisions)

These metrics tell us whether to keep spending or pull back now:

- Marginal CAC / CPI (by channel): Marginal means “the next dollar,” not blended averages. We use it to decide if spend scales this week. Owner: Growth lead. Cadence: daily read, weekly board view.

- Install-to-registration rate (or visit-to-signup): This shows whether the first step is frictionless. It helps separate acquisition quality from onboarding issues. Owner: Product + Growth. Cadence: weekly.

- Day 1 retention (or Day 1 activation): We define the Day 1 “come back” or “complete first value” action. This catches product-market mismatch early. Owner: Product. Cadence: weekly.

- First purchase conversion (or first revenue event): This is the first moment someone pays. It signals whether the value proposition lands. Owner: Monetization or Growth. Cadence: weekly.

Lagging indicators (business truth, board confidence)

These metrics tell us if the model is healthy over time:

- Day 30 retention by cohort vintage: This replaces DAU/MAU as the board’s “engagement” proxy. We track users acquired in month X, then read forward. Owner: Product Analytics. Cadence: monthly, with weekly directional checks.

- Payer retention: We measure whether payers keep paying. This protects us from “one-and-done” revenue spikes. Owner: Monetization. Cadence: monthly.

- Long-term retention: For consumer, this may be Day 90. For SaaS, it may be Week 12. We pick the horizon tied to payback reality. Owner: Product Analytics. Cadence: monthly.

- Repeat conversion rate: This is the percent who purchase again (or expand usage). It reflects satisfaction better than raw sessions. Owner: Monetization + Lifecycle. Cadence: monthly.

- Ratio of organic to overall DAU base: We watch this because paid can hide organic decay. Owner: Growth Analytics. Cadence: monthly.

- Contribution margin per acquired user at Day 90: Dan replaces gross ROAS with this, because it reflects real profitability. It subtracts variable costs, not just media spend. Owner: Finance + Growth. Cadence: monthly.

- Cohort revenue trajectory at 30 / 60 / 90 days: This replaces month-over-month revenue growth. It shows whether newer cohorts outperform older ones. Owner: Finance Analytics. Cadence: monthly.

- Forecast ROAS vs actual ROAS gap (model error): Dan called this gap “one of the most honest signals” we have. When it widens, unit economics degrade or attribution drifts. Owner: Growth Analytics. Cadence: monthly.

How we keep meetings from collapsing into complexity

Dan uses two rules to keep meetings on target and above board:

- Use thresholds, not targets: Targets are aspirational. Thresholds are operational. Dan suggests three thresholds: red to escalate now, yellow to have a plan within a week, and green to keep going as is.

- The “one drill-down” rule: The board sees the top-line number. One level of segmentation is available if the number is yellow or red — by channel, by geo, by product line. There should never be more than one level of segmentation in the board meeting itself. Complexity kills clarity.

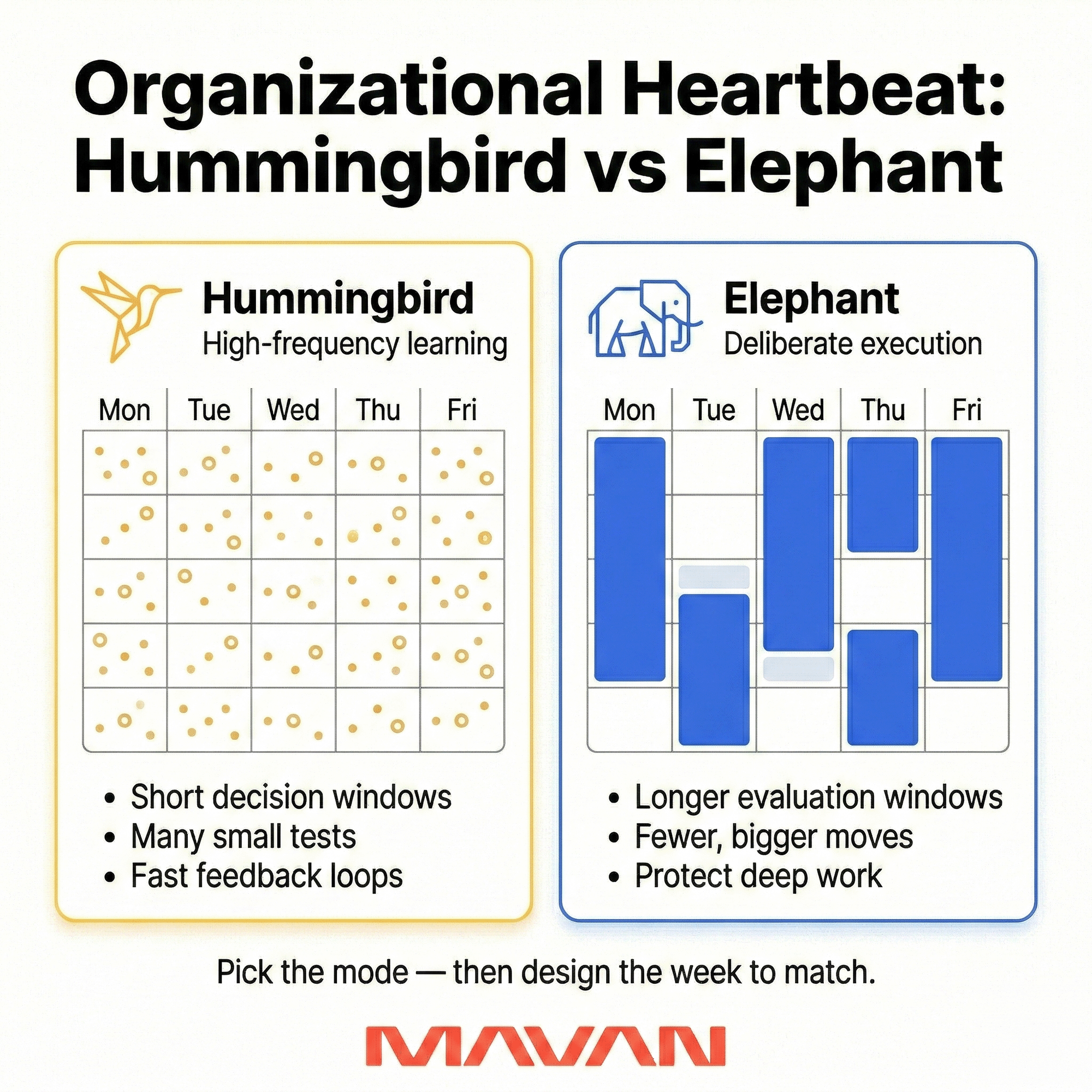

Turning the scoreboard into a system with an operating cadence

A scoreboard does not solve trust by itself. It solves trust when it runs on a rhythm people can rely on. Dan frames this as an “organizational heartbeat.”

Some weeks need a hummingbird pace. Other weeks need an elephant pace. The hard part is naming which mode we are in — and designing the week around it.

First, we pick the heartbeat on purpose

When we feel pressure, we often add meetings. That usually makes things worse. So we start with a simple question: what is the business asking of us right now?

- If we are learning fast (new channel, new pricing, new market) — we choose hummingbird. We’re in a phase where the main risk is being wrong for too long. Inputs are changing. We’re still searching for truth. So we bias toward fast feedback loops and tight decision windows.

- If we are executing a clear plan (stable inputs, known risks) — we choose elephant. We’re in a phase where the main risk is thrash. The strategy is clear. Inputs are stable enough. So we bias toward depth, stability, and compounding.

We do not mix the two. That is how teams burn out and still miss targets.

Next, we choose sync vs async

Dan notes that companies should be deliberate about synchronous versus asynchronous work. Sync time helps when we need fast decisions. Async time works better when the plan is clear and the work is stable.

So we set two rules:

- We keep sync time scarce.

- We make async updates consistent and visible.

A cadence you can copy this week

Dan’s weekly cadence is concrete:

- Monday morning: contextual alignment — objectives, progress, blockers.

- Daily: an end-of-day written report in an async channel — from everyone.

- Tuesday and Thursday afternoons: open blocks for unstructured problem-solving.

- No standups: Dan “avoids them like the plague.” He sees big meetings as expensive.

This structure does two things at once: It protects deep work and it creates fast feedback loops.

The board pre-read: what goes in, what stays out

A good board pre-read can change everything. Dan goes as far as to call it “the entire thing.” He sends the full deck in advance, and he expects everyone to read it before they walk in. This is sensible since boards are expensive rooms. These people come together for a reason.

If we spend the meeting presenting instead of decision-making, we waste that design.

Harvard Business Review’s meeting prep guidance echoes this sentiment, saying that if we want people to do pre-reading, we should distribute materials at least two to three business days in advance. Skadden’s The Informed Board goes further for quarterly board packs. It suggests five to seven days, so directors have enough time to consume the information.

But timing is the easy part. Content discipline is the hard part.

Content we include every time

Dan’s framing is a clean template: overview → challenges → how we’re thinking → discussion. And that structure works in practically all business scenarios.

Here’s what that looks like as a repeatable packet.

- Page 1: The scoreboard. We start with the board-ready scoreboard. We show thresholds. We show trend. We keep it stable.

- A short written narrative that matches the scoreboard. We explain what changed. We explain why we think it changed. We keep it honest.

- Variance explanation, only where it matters. We pick the two or three variances that change decisions. We ignore the rest.

- A “decisions + asks” box. We name what we need from the board. Capital, time, hires, risk tolerance, intros, or approval.

- One working topic. We choose a single deep dive. We bring tradeoffs, not a tour.

Sequoia Capital’s board deck guidance aligns with this. It argues the goal is to maximize the value founders get from the board, while minimizing prep time. It also notes the deck can even be a memo, if that is clearer.

What we leave out

Dan’s rule is: leave out anything that doesn’t drive a decision or explain a variance. That one sentence saves hours. It also reduces defensiveness.

So we cut:

- Long status updates with no decision attached

- “Vanity trend” slides that always go up

- Channel screenshots that cannot be audited

- Deep operational detail that belongs in an appendix

- Metric definitions that are still contested (we fix those before the meeting)

Similarly, Harvard’s corporate governance forum asks boards and management to improve pre-reads with executive summaries that point directors to the crux of decisions, and to force three to five bullets of key takeaways per presentation.

Indeed, most boards do not need more information. They need more clarity and better emphasis.

How we run the meeting when the pre-read has been sent in advance

Dan’s goal is discussion, not presentation. If directors arrive with context, the value comes from the room’s complementary perspectives.

Skadden makes the same governance argument. It recommends limiting presentations to highlights and context, so most board time can be spent on discussion of forward-looking topics.

So we do three small things in the room:

- We start with the scoreboard, then go straight to variances.

- We spend meeting time on decisions and risks.

- We keep the rest in the appendix, for offline reading.

An executive script for high-pressure moments

Board pressure is often more about urgency than trust. MAVAN President Dan Barnes notes that most founders lose the room early. They lead with the plan and skip the shared truth. Boards read this as defensiveness.

Dan said the best founders sound almost clinical instead. To be clinical yourself, you just need a little structure.

The four-line script we use under pressure

Dan’s best script follows a simple sequence:

- Here’s what’s true.

- Here’s what we’re doing about it.

- Here’s what it costs us.

- Here’s what we need from you.

This works because it respects the board’s job, it protects the team, and it keeps the conversation anchored in reality.

Indeed, in research on board effectiveness under uncertainty, one theme stands out: Directors want a common understanding of the company’s current state. They also want aligned risk appetite.

Putting truth first makes that alignment possible.

Tradeoffs are not a weakness

Dan warns of another trap founders often fall into: a fear of tradeoffs. But when they avoid them, the board just invents them anyway. The founders who do best name tradeoffs directly. They quantify them when they can.

Dan offered example language like this:

- Maintain CAC targets, grow slower this quarter.

- Relax CAC targets, hit growth, extend payback.

- Hold the line for a fixed window, then reassess.

This fosters a sense of control instead of uncertainty. We do not need perfect precision. We need honest ranges and clear consequences. And when we cannot quantify, we still name the tradeoff, what data will settle it, and when we will know.

How we state uncertainty

Dan gave us one rule we wish more leaders used: “We don’t have enough data yet. Here’s the earliest date we will.” Then we deliver on that date and treat it as a decision point. We can be candid and still be steady. A date helps.

The two tradeoffs founders hide — and why they backfire

Dan named two tradeoffs that “always come back to bite” teams:

Information lag

Boards build a mental model from what we share. If our reality shifts and we hide it, trust erodes. Dan described this like a rubber band. Stretch it enough and it snaps.

So we choose high context over perfect news. We update earlier and more often — reducing surprises.

Channel concentration risk

Most performance growth rides one or two channels. But that creates a single point of failure. Worse, this fragility stays hidden until it becomes a crisis. Policy shifts and auctions can reprice a model fast.

Harvard’s governance guidance suggests that boards should identify single points of failure and clarify risk tolerance for those failures.

Practical board prep you can run in 30 minutes

Before a hard meeting, we build a one-page brief. We write:

- The truth in three bullets.

- The plan in three bullets.

- The tradeoff in one sentence.

- The cost in one sentence.

- The ask in one sentence.

- The “earliest date we’ll know” line.

- One concentration risk statement, with mitigation steps.

We keep it short because people under pressure lose bandwidth. That is also why Deloitte’s Center for Board Effectiveness recommends not burying the lead in board communications. They emphasize highlighting the few key changes that matter.

This is the goal. We are not trying to “win” the meeting. We are trying to earn trust and create a decision.

Paid acquisition under scrutiny: where boards push — and how we answer

When paid is under the microscope, we’re not getting marketing questions. We’re getting capital questions. MAVAN President Dan Barnes notes that almost every board challenge on paid collapses into two questions: “Why are we doing this?” and “How do we know it worked?”

In practice, he said those show up as three predictable asks:

- Is this spend efficient?

- What happens if we cut it?

- How do you know the attribution is real?

Dan’s answer is direct. For “Is this spend efficient?” and “What happens if we cut it?” he said the answer isn’t a ROAS screenshot. It’s contribution margin by cohort at Day 90 — plus a clear model of what organic baseline looks like without paid support. That’s how we justify spend as a decision.

For “Is the attribution real?” he notes that you shouldn’t rely on platform reporting. We run rolling holdout tests, and we show the board the last incrementality measurement and the date we ran it.
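As a rough illustration of what a holdout test measures, the Python sketch below compares conversion in a group that saw ads with a group that was deliberately held out. The function name, inputs, and figures are hypothetical. It produces a point estimate only and does not test statistical significance.

```python
def incrementality(exposed_users, exposed_conversions,
                   holdout_users, holdout_conversions):
    """Estimate conversions caused by paid, using a holdout as the baseline.

    Returns incremental conversions and their share of exposed conversions.
    This is a point estimate only; it ignores statistical significance.
    """
    if min(exposed_users, holdout_users, exposed_conversions) <= 0:
        raise ValueError("need users in both groups and exposed conversions")
    exposed_rate = exposed_conversions / exposed_users
    holdout_rate = holdout_conversions / holdout_users
    incremental = (exposed_rate - holdout_rate) * exposed_users
    return {
        "incremental_conversions": incremental,
        "incremental_share": incremental / exposed_conversions,
    }


# Hypothetical test: 90,000 users saw ads, 10,000 were held out.
result = incrementality(90_000, 2_700, 10_000, 200)
print({name: round(value, 3) for name, value in result.items()})
```

In this example the holdout converts at 2% and the exposed group at 3%. About 900 of the 2,700 exposed conversions, roughly a third, are incremental. The rest would likely have happened without paid support.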

One last alignment rule he uses matters more than people admit: marginal CAC for decision-making; blended CAC for reporting. Marginal tells us if the next dollar is worth it. Blended tells us how the whole machine performs.
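A short Python sketch shows why the two numbers can point in different directions. The spend and customer figures are made up, and the function names are ours.

```python
def blended_cac(spend, customers):
    """Total spend divided by total customers acquired."""
    return spend / customers


def marginal_cac(spend_before, customers_before, spend_after, customers_after):
    """Cost of each additional customer gained by the extra spend."""
    extra_customers = customers_after - customers_before
    if extra_customers <= 0:
        return float("inf")  # extra spend bought no extra customers
    return (spend_after - spend_before) / extra_customers


# Hypothetical weeks: spend rises from 10,000 to 15,000,
# customers acquired rise from 500 to 650.
print(round(blended_cac(15_000, 650), 2))
print(round(marginal_cac(10_000, 500, 15_000, 650), 2))
```

Blended CAC moves from 20.00 to about 23.08, which looks tolerable in a report. The extra 5,000 bought customers at about 33.33 each, and that is the number that should decide whether to keep scaling.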

Running with your own scoreboard

If your next board meeting is within two weeks, you don’t need a rebuild. You need a reset you can execute under pressure. You can do that in 14 days.

In Week 1, lock governance. Pick 10–12 metrics and define them once. Assign one owner per metric. Set red and yellow thresholds with actions.

In Week 2, lock rhythm. Run one Monday review. Write one daily async update. Send the pre-read at least a few days early so everyone is on the same page and no time is wasted during the board meeting.

What “good” looks like in practice

When the scoreboard stays stable, and the source of truth is clear, work gets done. The board stops litigating definitions. The team stops defending screenshots. The room starts choosing tradeoffs.

We’ve seen this in our own work.

- With ElevenLabs, we achieved 1,000% ad spend growth at target ROAS and over 90% top impression share globally.

- With Fireflies, we achieved a blended 1.96× ROAS at $700K+ monthly scale, plus a 46% CAC reduction quarter over quarter.

Those outcomes required measurement discipline and shared definitions, not louder opinions.

A quick if-then plan

If you’re leading growth under board pressure, we can help you install this system fast — not with more slides, but with a board KPI dashboard that drives decisions and makes investor reporting metrics harder to game.

If your next board meeting is within 14 days, then do this:

- Today: choose your 12 metrics and one source of truth.

- Tomorrow: assign owners and set red and yellow thresholds.

- Next week: send the pre-read three days early with one “decisions needed” box.

Then just copy and paste our one-page scoreboard template, fill it in once, and use the same page every month.

| Metric | Definition | Source of Truth | Owner | Cadence | Threshold | Current Value | Prior Period | Trend |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Marginal CAC / CPI (by channel) | Cost for the next unit acquired (not blended average), by channel | Data warehouse KPI view (single agreed definition) | Growth lead | Daily read / Weekly review | - | - | - | - |
| Install-to-registration rate (or visit-to-signup) | % of new users who complete registration (or signup) after install/visit | Data warehouse funnel table + KPI view | Product (funnel) owner | Weekly | - | - | - | - |
| Day 1 retention (or Day 1 activation) | % who return on Day 1 (or complete defined Day 1 “value” event) | Data warehouse cohort table + KPI view | Product analytics owner | Weekly | - | - | - | - |
| First purchase conversion (or first revenue event) | % who complete first purchase (or first paid event) within defined window | Data warehouse events + revenue join | Monetization lead | Weekly | - | - | - | - |
| Day 30 retention by cohort vintage | D30 retention, shown by acquisition cohort (month/week acquired) | Data warehouse cohort table | Product analytics owner | Monthly (with weekly directional check) | - | - | - | - |
| Payer retention | % of payers who remain payers across a defined period | Data warehouse payer cohort table | Monetization lead | Monthly | - | - | - | - |
| Long-term retention (chosen horizon) | Retention at the horizon tied to payback reality (e.g., D90, W12) | Data warehouse cohort table | Product analytics owner | Monthly | - | - | - | - |
| Repeat conversion rate | % who purchase again / expand within defined window | Data warehouse revenue + events | Monetization + Lifecycle owner | Monthly | - | - | - | - |
| Organic ratio | Organic share of new users (or share of active base), per agreed definition | Data warehouse acquisition classification | Growth analytics owner | Monthly | - | - | - | - |
| Contribution margin per acquired user at Day 90 | Contribution margin generated per acquired user by Day 90 (after variable costs) | Finance-approved warehouse model (costs + revenue) | Finance partner (with Growth) | Monthly | - | - | - | - |
| Cohort revenue trajectory at 30/60/90 days | Revenue per user for cohorts at Day 30/60/90 (compares cohort health over time) | Data warehouse cohort revenue model | Finance analytics owner | Monthly | - | - | - | - |
| Forecast ROAS vs actual ROAS gap (model error) | Difference between forecasted ROAS/payback and actual (signals degradation/measurement drift) | Data warehouse forecasting + actuals comparison view | Growth analytics owner | Monthly | - | - | - | - |

Bring MAVAN in and make the scoreboard real

If you need a system — one that survives board pressure and actually empowers weekly decisions — that’s what we build.

MAVAN embeds a cross-functional growth pod into your team — strategy plus execution — to install the board-ready scoreboard and the operating cadence behind it. We don’t hand you a deck and disappear. We help you turn metrics into a repeatable growth engine your board can trust.

If you want support, contact us today.

We’ll align on your growth goals, pressure points, and current measurement reality — then show what it would look like to embed and execute across acquisition, lifecycle, product, creative, and data.

Frequently Asked Questions About Board Meetings and a Board-Ready Scoreboard

What is a board-ready scoreboard?

A board-ready scoreboard is a concise, stable set of metrics designed to create shared reality between operators and the board so meetings focus on decisions instead of conflicting interpretations.

Why do board meetings turn into arguments over metrics?

They usually break down because teams are using different definitions, different data sources, or different proxies for growth, which makes it easy for everyone to defend their own version of the truth.

How many metrics should a board-ready scoreboard include?

The article recommends keeping it tight at around 10–12 metrics so the room can remember them, track them consistently, and act on them.

What is the difference between leading and lagging indicators?

Leading indicators help you decide what to do right now, such as whether to keep spending or pull back. Lagging indicators show whether the business model is actually healthy over time.

What are examples of leading indicators?

Examples include marginal CAC or CPI, install-to-registration rate, Day 1 retention or activation, and first purchase conversion.

What are examples of lagging indicators?

Examples include Day 30 retention by cohort, payer retention, long-term retention, repeat conversion rate, organic ratio, contribution margin per acquired user, cohort revenue trajectory, and forecast-vs-actual ROAS gap.

Why is a single source of truth so important?

Without one trusted source, every team can point to different numbers and defend different narratives, which kills accountability and slows decisions.

What should replace vanity metrics like DAU/MAU or gross ROAS?

The article recommends using more decision-useful metrics like cohort retention, contribution margin per acquired user, payback curves, and forecast-vs-actual performance gaps.

What should go into a board pre-read?

Include the scoreboard, a short narrative on what changed, the few variances that matter, a decisions-and-asks section, and one focused deep dive topic.

How should teams run the board meeting itself?

Start with the scoreboard, move quickly to the key variances, spend most of the time on decisions and risks, and leave deeper detail in the appendix.

What is the “one drill-down” rule?

If a metric is off, the board gets only one level of segmentation in the meeting, such as by channel or geography, so complexity does not overwhelm clarity.

What can a team do before its next board meeting?

Lock metric definitions, assign owners, set thresholds, establish a review cadence, and send the pre-read a few days early so the meeting can focus on decisions instead of interpretation.


Want more growth insights?

Thank you! form is submitted

[hubspot type=”form” portal=”20951211″ id=”9c538ed2-fb12-45f1-a573-ad7953c058cc”]

Related Content

-

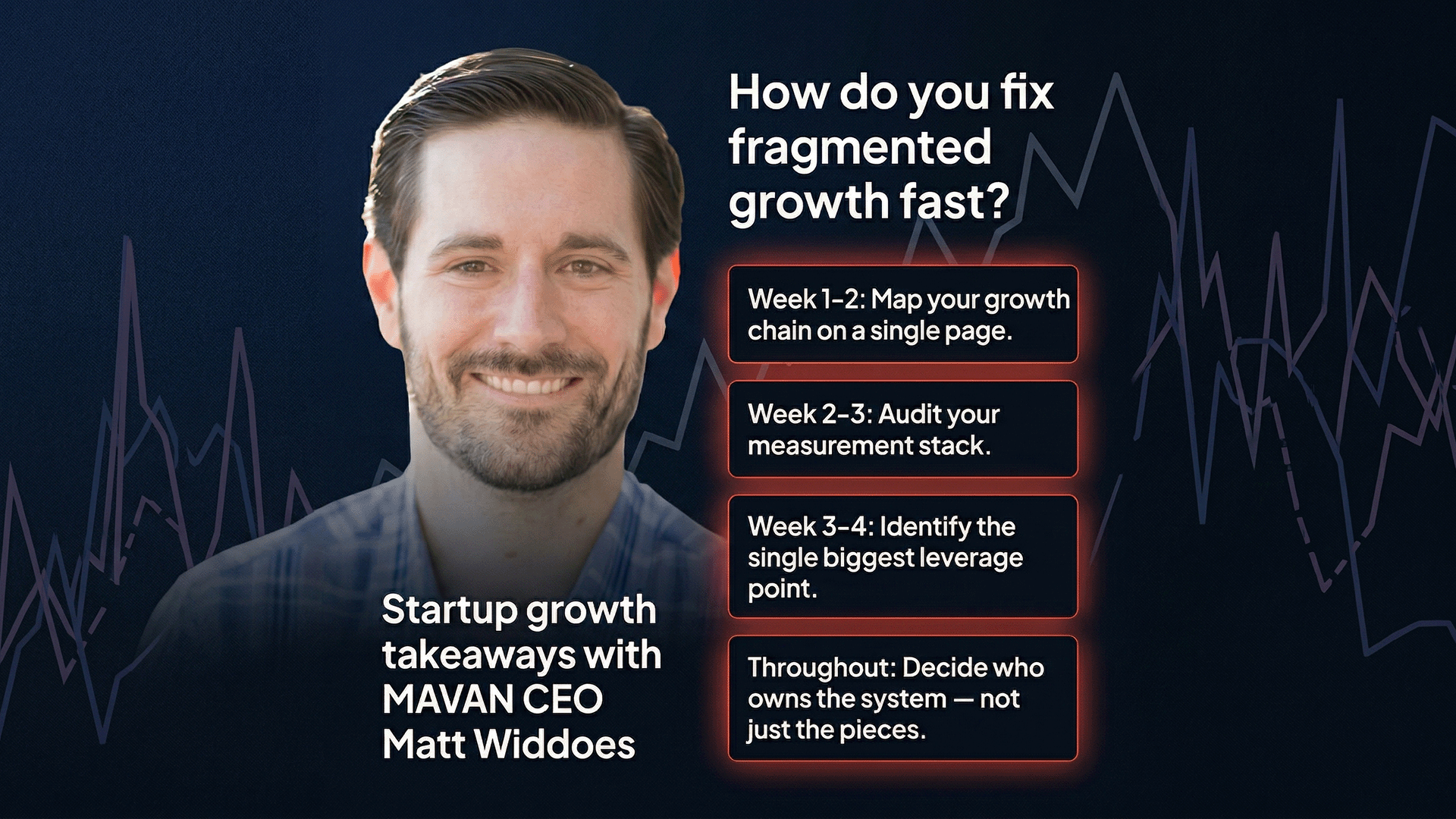

Why Does Startup Growth Feel Broken Even When Your Team Is Working Hard?

Startup growth breaks down when marketing, product, data, and creative teams work in silos. The fix starts with mapping your full growth chain, auditing your measurement stack, and assigning one owner to the whole system — not just the pieces. TLDR — Top Takeaways For Fixing Fragmented Startup Growth You haven’t taken a real vacation…

-

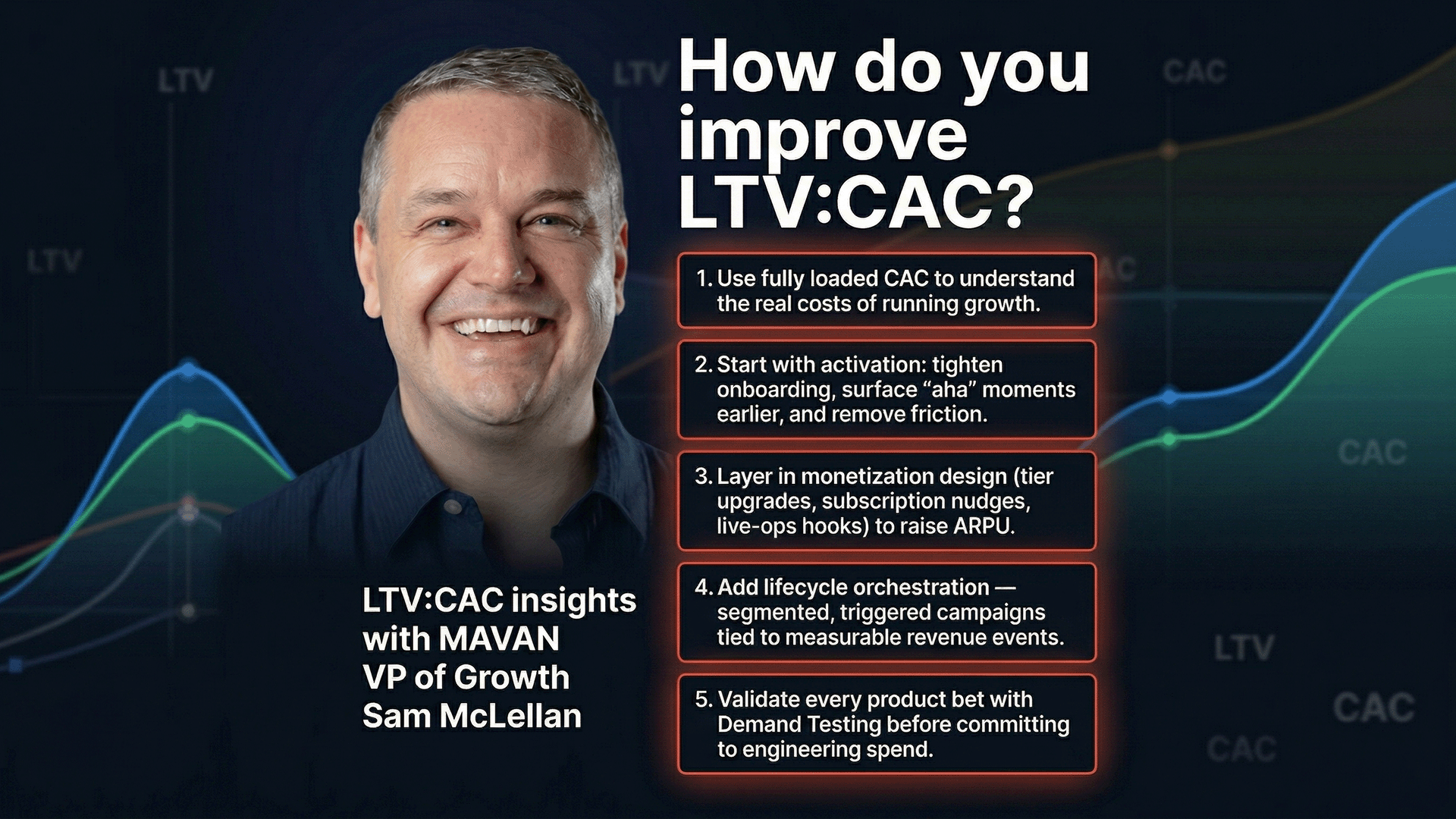

How Do You Actually Improve Your LTV:CAC Ratio?

Most teams try to improve their LTV:CAC ratio by cutting acquisition costs. But the higher-leverage fix is raising lifetime value through product changes — better activation, smarter monetization, and stronger lifecycle orchestration — then validating those changes with demand testing before committing engineering time. TLDR — 10 Critical Insights on LTV and CAC Everyone knows…

-

How Do You End Growth Arguments in Board Meetings?

A board-ready KPI scoreboard uses 12 metrics with one shared definition, one owner, one data source, and red-yellow-green thresholds tied to specific actions. It replaces competing narratives with a single shared reality that makes board meetings about decisions, not arguments. TLDR — What Do You Need to Build a Board-Ready KPI Scoreboard in 14 Days?…