A functioning B2B SaaS experimentation program is built around paid creative testing and landing page optimization. Even with long sales cycles, you can know if an experiment is heading in the right direction within two weeks — as long as you track from first touch all the way through to closed contract.

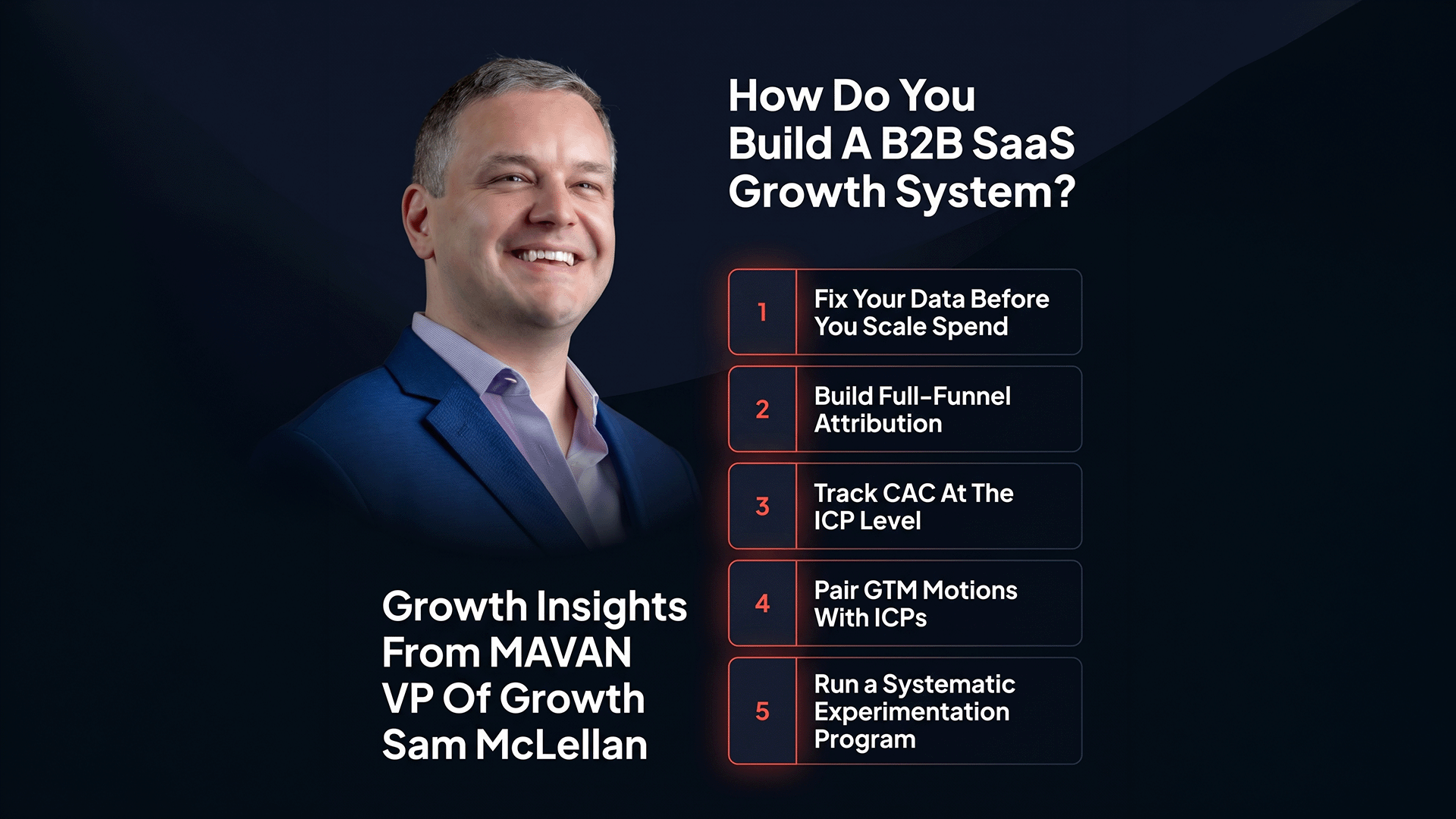

TLDR — Key Takeaways for Building a B2B SaaS Experimentation Framework

- Most B2B SaaS teams run tests — almost none have a testing system, and that gap is where budget disappears.

- Paid creative is the highest-leverage testing ground in B2B SaaS; start there, not in the product.

- ICP (ideal customer profile) targeting is the compass — define your segments before writing a single ad.

- Allocate 15–20% of paid media spend to a dedicated, isolated testing budget separate from performance campaigns.

- Structure every test around three ICPs, three hooks each, with a decision point set before launch.

- Directional confidence within two weeks is the standard — not statistical certainty built for consumer-scale audiences.

- Landing pages are the second half of your ad’s promise — message mismatch kills experiments at the handoff, not the click.

- Tag every experiment entrant in your CRM at first touch so you can close the loop when the deal signs nine months later.

- The minimum viable setup is five things: testing budget, hypothesis log, proxy conversion events, ICP landing pages, monthly review.

- Ready to build out an effective B2B experimentation system? Reach out to MAVAN today.

The mobile gaming industry runs A/B tests the way SaaS companies run board meetings — constantly, systematically, and with clear stakes attached to every result. In any mobile game with meaningful scale, there’s an experiment running at any given moment. B2B SaaS teams look at that cadence and reasonably conclude: our sales cycle is nine months long, our audience is a fraction of the size, and we can’t test our way to enterprise deals the same way a game studio tests its onboarding flow. That conclusion is half right. The cadence has to adapt. But the underlying system — structured hypotheses, dedicated budgets, documented learnings — transfers directly, and the B2B teams building that system are compounding their advantage every month.

The question most growth leaders get stuck on isn’t whether to test. It’s how to build a system that makes every test useful — even with small sample sizes, long cycles, and a dozen competing priorities. This is the framework that actually works.

Why Doesn’t Most B2B SaaS A/B Testing Actually Work?

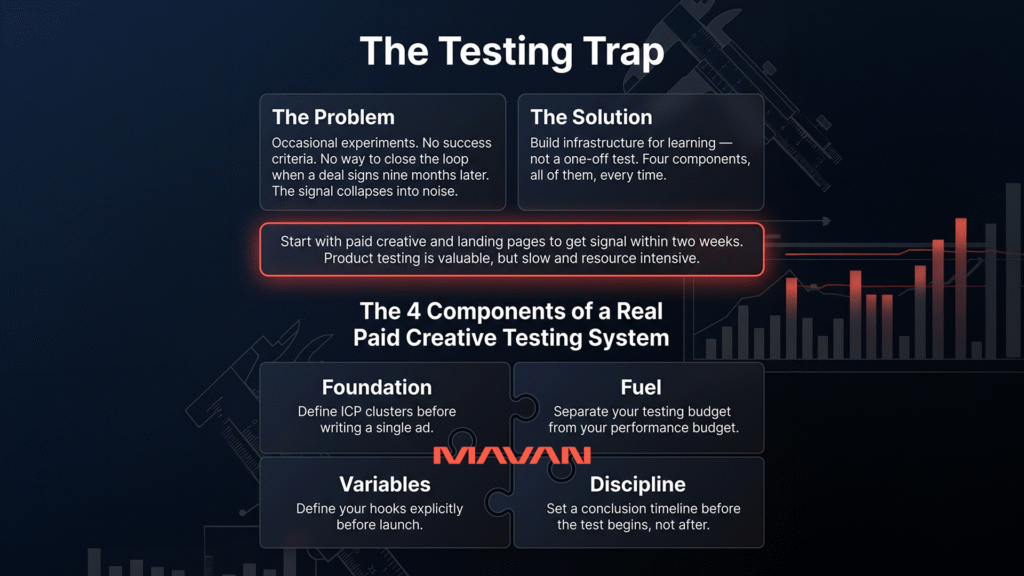

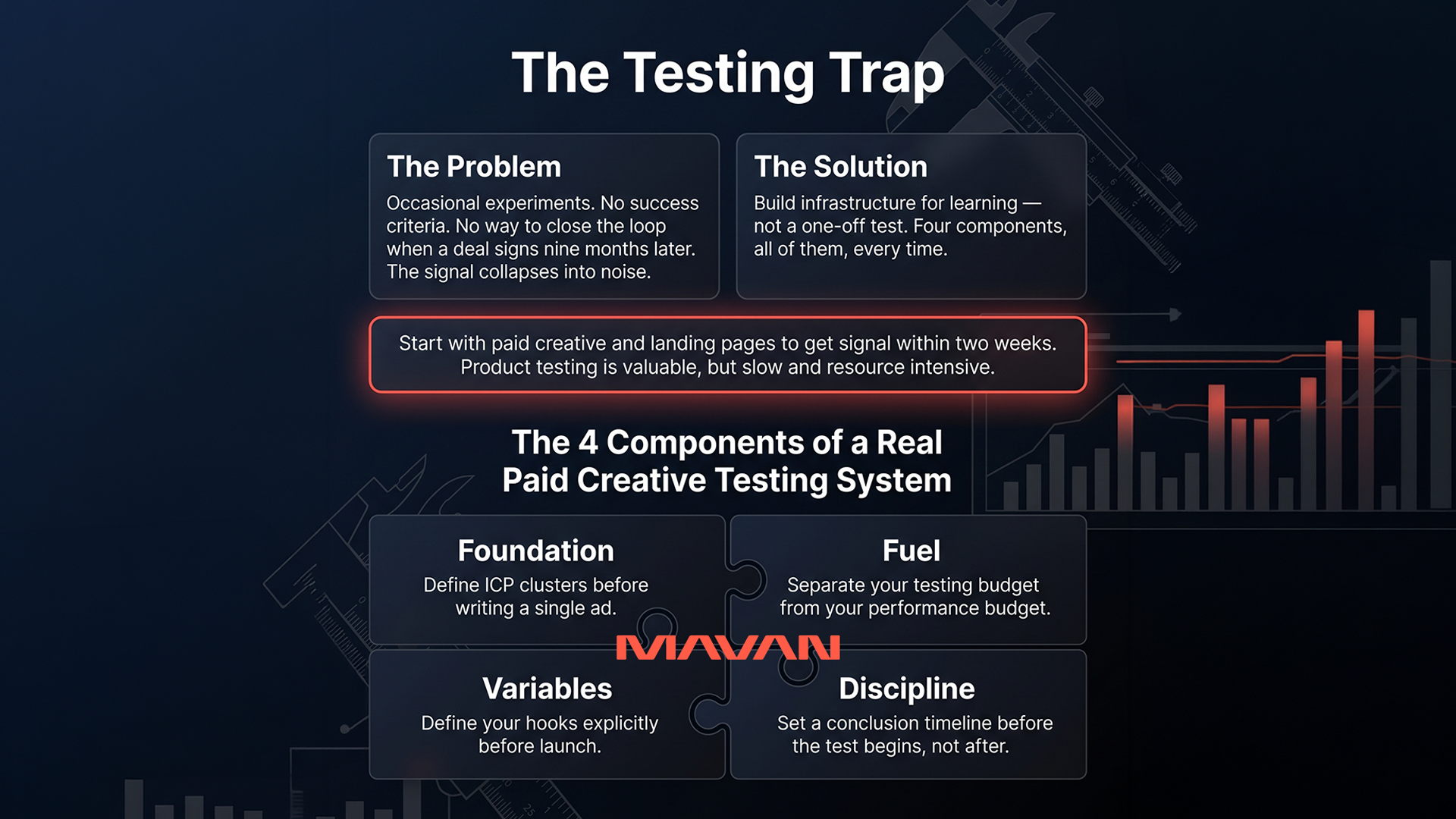

Most B2B growth teams run tests. Almost none have a testing system — and the gap between those two things is where budget dissipates. A real testing system has four components: a documented hypothesis, a dedicated budget, a pre-set timeline for drawing a conclusion, and a tracking chain from first touch to closed contract. Without all four, you’re running vibes with a slight delay.

Sam McLellan, VP of Growth at MAVAN, draws the contrast by borrowing from mobile: “Go into most mobile games — or pretty much any mobile product of any significant size — and there will be an A/B test running at any given moment. They are constantly testing and constantly iterating.” That discipline exists because those companies built systems for it. They didn’t just run tests. They built infrastructure for learning.

The failure mode in B2B SaaS is assuming that discipline doesn’t translate. Teams run occasional experiments — often in the wrong place (product UI changes requiring weeks of engineering), with no pre-defined success criteria, and no mechanism for closing the loop when a deal finally signs nine months later. The experiment collapses into noise. The lesson becomes “testing doesn’t work at our scale,” when the real lesson is: testing without a system doesn’t work at any scale.

What follows is a discipline-first framework for building an experimentation program that produces compounding learnings — regardless of your audience size, your sales cycle length, or your current tech stack.

Where Should B2B SaaS Companies Actually Run Experiments?

The highest-leverage place to run B2B SaaS experiments is paid creative — the ads you run to attract your ICP (ideal customer profile — the specific buyer type and company stage your product is built to serve). After that comes landing pages. Product-level testing matters, but it’s typically the slowest place to get clean, fast signal — and the most expensive place to experiment without solid upstream data first.

Sam McLellan, VP of Growth at MAVAN, is direct about the priority order: “For the paid media side, that to me is definitely where ICP tends to be the biggest compass — the one that companies use to determine where to put their ads, do their targeting, and how to connect it to the product.” The reason paid creative moves to the top of the experimentation stack is structural: creative testing doesn’t require engineering resources, it returns signal in days, and the hypotheses you’re testing — which buyer type responds, which message lands, which angle converts — are the same questions that make every other part of your growth system sharper.

Consider what happens in the moment someone clicks your ad. They’ve already made a micro-commitment — they saw something that matched a concern they carry around every day, and they acted on it. If you’re testing systematically at that stage, running three different angles against three different ICP segments, you’re learning who your buyers actually are before they even enter your funnel. That advantage compounds every month you run the system.

Product experimentation (testing dashboard layouts, onboarding flows, feature placements) is valuable in its place. But it’s slow, resource-intensive, and deeply entangled with sales cycles that make attribution genuinely hard. Paid creative and landing pages can return directional signal within two weeks. That’s where you start.

What Does a Creative Testing Framework Actually Look Like?

A creative testing framework matches ICP segments to message variants and hooks, runs them in isolated test cells with a dedicated budget, and draws a conclusion within a pre-defined time window. Think three ICPs, three hooks per ICP, a protected budget, and a decision point built in before you spend a dollar — all mapped out before a single ad goes live.

“There should be a systematic scientific process,” says Sam McLellan, VP of Growth at MAVAN. “These are the three ICPs we’re targeting, three variants, three hooks — we’re going to test all of that. And we’ll make sure that at least a significant percentage are heading in the right direction.”

“If you don’t have a creative testing framework as part of your spend — at any level — a percentage of your budget should be going to just testing in any given month. And if that sounds crazy, your competitors are already ahead of you.” — Sam McLellan, VP of Growth, MAVAN

Here’s what that framework looks like in execution:

- Define ICP clusters before writing a single ad. A CMO at a Series B company has a completely different problem set than a VP of Growth at an early-stage PLG product — even if your platform serves both. Each segment needs its own test cell, its own message, and its own hook. Don’t let creative do double duty; it will underserve both audiences.

- Separate your testing budget from your performance budget. MAVAN’s audit work with B2B SaaS clients consistently recommends allocating 15–20% of paid media spend to a dedicated testing pool — isolated from always-on performance campaigns. A test campaign is supposed to be learning, not converting at target ROAS (return on ad spend) on day one. Isolation protects the experiment from performance pressure.

- Define your hooks explicitly before launch. A “hook” is the core tension or claim in the first three seconds of your ad — or the first line of copy. It’s the reason someone stops scrolling. Test pain-based hooks (“Still spending six figures on a channel you can’t attribute?”) against aspiration-based hooks (“What does a 60% CAC reduction look like in your pipeline?”). The goal is knowing which angle your ICP responds to — which reveals more about your buyers than almost anything else you’ll learn from performance data alone.

- Set a conclusion timeline before the test begins, not after. McLellan is clear: “Within two weeks, you should probably know if you set this up right.” The timeline disciplines the process. Without a pre-set decision point, tests drift indefinitely, budgets blur together, and the learnings never arrive.

The mechanism that separates a creative testing program from random iteration is documentation. Every test has a name, a hypothesis, and a decision point. When you build that habit into the weekly cadence, you’re building a growing map of what your buyers respond to and why — and that map compounds in value every quarter.
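As a rough illustration of the structure above, the sketch below lays out a three-ICP by three-hook test matrix and splits a protected testing budget evenly across the cells. The segment names, the monthly spend figure, and the even split are assumptions made for the example, not MAVAN’s method; the 15% share sits at the low end of the 15–20% range recommended above.

```python
from itertools import product


def build_test_cells(icps, hooks, monthly_paid_spend, testing_share=0.15):
    """Return one test cell per ICP-hook pair, each with an equal budget slice."""
    if not 0 < testing_share < 1:
        raise ValueError("testing_share must be between 0 and 1")
    testing_budget = monthly_paid_spend * testing_share
    pairs = list(product(icps, hooks))
    per_cell = testing_budget / len(pairs)
    return [
        {"cell": f"{icp}__{hook}", "icp": icp, "hook": hook, "budget": round(per_cell, 2)}
        for icp, hook in pairs
    ]


# Hypothetical segments and spend, for illustration only.
cells = build_test_cells(
    icps=["cmo_series_b", "vp_growth_plg", "vp_engineering"],
    hooks=["pain", "outcome", "process"],
    monthly_paid_spend=60_000,
    testing_share=0.15,
)

for cell in cells:
    print(cell["cell"], cell["budget"])
```

Naming each cell up front also gives every test the name that the documentation habit depends on. An even split is the simplest starting point; weighting cells by segment size would be a reasonable variation.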

How Do You Achieve Statistical Significance with Small B2B Audiences?

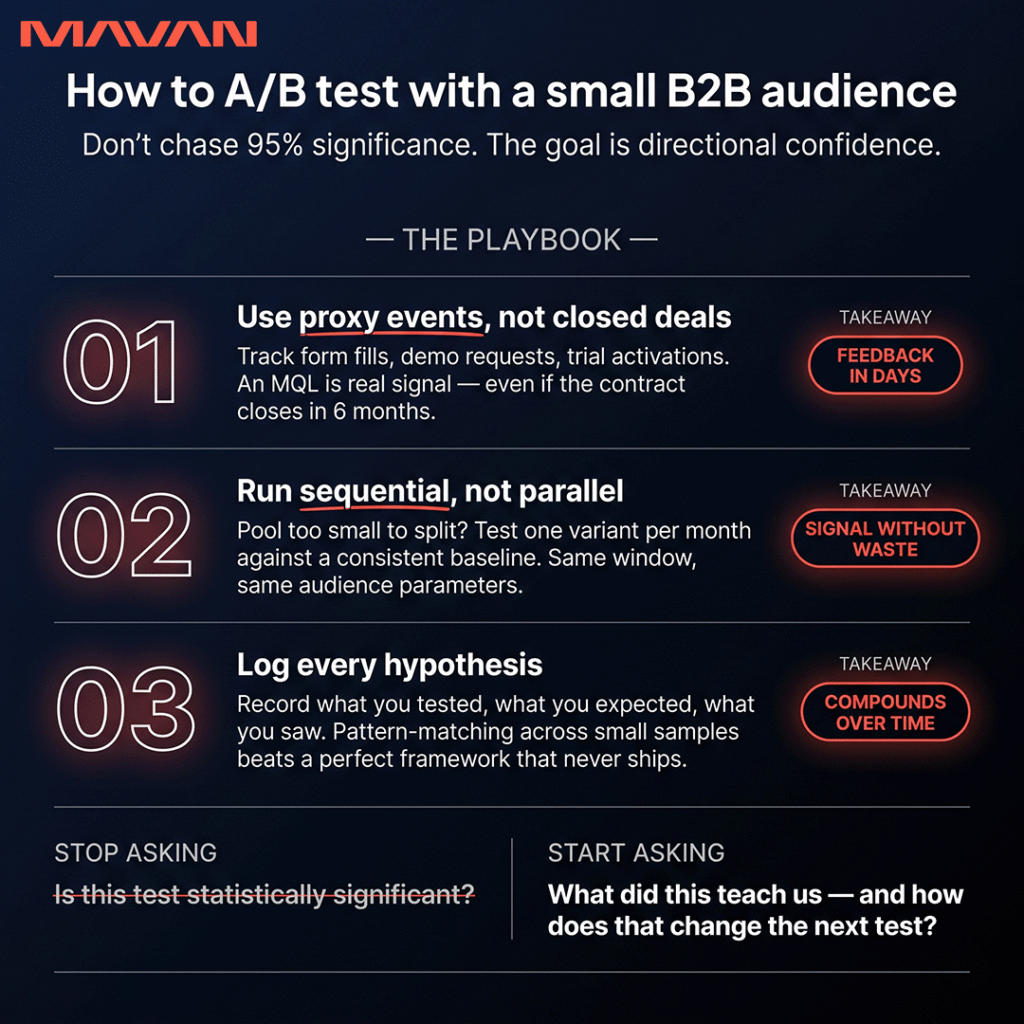

Reframe the goal. Statistical significance is conventionally declared at a 95% confidence level, meaning a difference that large would show up less than 5% of the time if the variants truly performed the same. Reaching that bar takes volume, which is why it suits high-volume consumer environments. In B2B, with test cells of a few hundred people each, waiting for that threshold is often impossible. The goal isn’t certainty. It’s directional confidence, gathered fast enough to inform the next iteration.

Sam McLellan, VP of Growth at MAVAN, captures this frame directly: “Within two weeks, you should probably know if you set this up right.” He’s not describing statistical certainty. He’s describing a well-structured experiment — one that generates a clear lean, a usable signal — inside a compressed window. For B2B growth teams, that’s the standard to hold, rather than importing thresholds designed for consumer A/B testing at massive scale.

A few approaches that make this work in practice:

- Use proxy conversion events to accelerate feedback. Don’t wait for a closed deal to assess an experiment. Track form fills, demo requests, or free trial activations instead. These events happen faster, accumulate quickly enough to reveal patterns, and are strong leading indicators in most B2B funnels. An MQL (marketing qualified lead — a prospect who’s shown enough interest to be passed to your sales team) is meaningful signal, even if the contract doesn’t close for six months.

- Run sequential experiments when your pool is too small to split. Test one variant at a time against a consistent baseline, using the same time window each month and the same audience parameters. You lose some precision, because month-to-month changes such as seasonality can blur the comparison, but you gain usable signal without inflating spend or producing meaningless cell sizes.

- Document hypotheses and results formally, even from small samples. A shared hypothesis log — what you tested, what you expected, what you saw — is worth more than a statistically perfect framework that never launches. Small-sample learnings compound when recorded. Over time, you start pattern-matching. For instance, you might discover that a certain hook consistently outperforms another for VP-level buyers. That’s directional intelligence that makes next month’s test smarter, and that’s exactly what a real learning engine produces.

The shift is conceptual as much as methodological. Stop asking “Is this test significant?” and start asking “What did this teach us, and how does that change the next one?”
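To see why the 95% threshold is hard to reach with cells of a few hundred people, here is a minimal sketch of a pooled two-proportion z-test using only the standard library. The conversion counts are invented for illustration, and the normal approximation is itself rough at counts this small, so treat the output as a sanity check rather than a verdict.

```python
from math import erf, sqrt


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a difference in conversion rates (pooled z-test)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (p_b - p_a) / se
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return 2 * (1 - cdf)


# Hypothetical B2B cells: 300 people each, 9 vs 15 demo requests (3% vs 5%).
p_small = two_proportion_p_value(9, 300, 15, 300)

# The same rates at ten times the volume: 3,000 people each.
p_large = two_proportion_p_value(90, 3000, 150, 3000)

print(f"300 per cell:   p = {p_small:.2f}")   # roughly 0.21, not significant at 0.05
print(f"3,000 per cell: p = {p_large:.5f}")   # well below 0.05
```

The lift is identical in both cases; only the volume changes. That is the argument for reading a 3% versus 5% split in a small cell as a lean worth following up, not as a failed test.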

How Do Landing Pages Make or Break Your B2B SaaS Experiments?

Your landing page is the second half of a promise your ad already made. If that promise arrives at the wrong destination — a generic homepage, a feature-forward product overview, a headline unconnected to the ad someone clicked — the experiment fails at the handoff, not the click. And most teams never trace the failure back to where it actually happened.

Sam McLellan, VP of Growth at MAVAN, frames ads and landing pages as a continuous story: “Ads send a message. They are telling you about the product. You’re setting expectations. When they click through, that expectation should be continued — it should be like the next part of the story you already started beginning to tell.”

That principle has direct implications for how you build ICP-specific pages. A CMO landing on a page built for a VP of Engineering will feel the mismatch within seconds, even if they can’t articulate why. The page talks about integration speed and API documentation. The CMO wants to know what this does for pipeline visibility and board reporting. The experience ends before it starts.

The practical solution is a landing page per ICP — or at minimum, a headline swap that reflects the specific pain point driving each segment’s click. This is one of the lowest-friction tests available in B2B SaaS. No new design system. No major engineering lift beyond a parameter-driven template. Just message alignment, tracked through separate URLs so you can see which ICP journey is converting and which is leaking.
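A parameter-driven headline swap can be as small as a lookup keyed on a URL parameter. The sketch below assumes a hypothetical `icp` query parameter and made-up headline copy; in practice the same lookup would live in whatever templating layer your site already uses.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical headline copy per ICP segment, for illustration only.
HEADLINES = {
    "cmo": "See what every channel contributes to pipeline before the board asks",
    "vp_engineering": "Integrate in an afternoon with a documented API",
}
DEFAULT_HEADLINE = "Know which experiments are working within two weeks"


def headline_for(url, param="icp"):
    """Pick the headline matching the ICP named in the URL, else the default."""
    query = parse_qs(urlparse(url).query)
    segment = query.get(param, [""])[0].lower()
    return HEADLINES.get(segment, DEFAULT_HEADLINE)


print(headline_for("https://example.com/demo?icp=cmo"))
print(headline_for("https://example.com/demo"))
```

Because each ICP arrives on its own URL, the same parameter doubles as the tracking key for comparing which journey converts and which leaks.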

When a landing page isn’t converting — when people arrive and leave without submitting their information — McLellan is direct about the next move: “Find out why. There should be user interviews, or you should be talking to your audience.” And then more specifically: “There’s a multitude of ways to gather feedback — basic surveys to user sessions. If you’re not doing any of that, you’re not really getting any feedback on what you’re doing.”

Most teams look at bounce rate, run another headline test, and repeat. Fewer teams sit down with the people who didn’t convert and ask one simple question: what didn’t you understand, or what were you expecting that you didn’t find? Three to five conversations with real buyers can surface the insight that makes your next iteration land — and that qualitative signal is often more valuable than a full month of split testing.

How Do You Track B2B Experiments Through a 6–9 Month Sales Cycle?

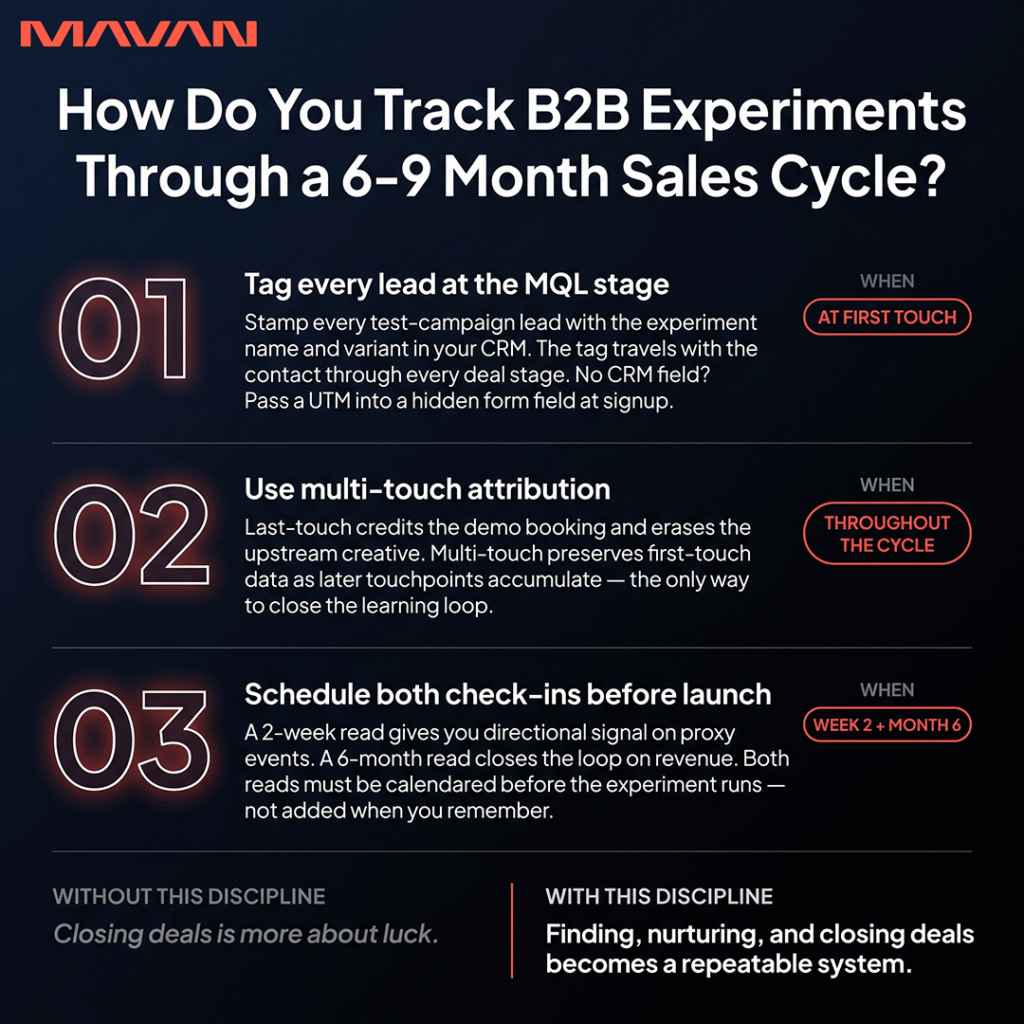

You commit to the tracking infrastructure before the experiment launches — naming the test, dating the cohort, and tagging every entrant in your CRM at the moment of first touch. A long sales cycle doesn’t make experiments impossible. It makes the upfront tracking discipline more critical, not less. The thread connecting January’s ad to September’s signed contract has to be laid before the ad runs.

Sam McLellan, VP of Growth at MAVAN, describes the discipline clearly: “This decides experiment A — we’re doing this, we’re doing it this period of time. We’re expecting these folks to come in, and we’re going to track it all the way along. The people who were first touch here — we’re going to not take all the credit, but this will be part of looking at that journey that person took from first touch all the way through to close contract.”

That framing matters. The goal isn’t to attribute all revenue to the test. The goal is to preserve the experiment entrant as a trackable segment in your pipeline — so that when a deal closes in month nine, you can trace it back to the creative, the ICP cluster, and the hook that generated the first click. McLellan acknowledges the timeline is real: in B2B, “a lead or MQL might not even register for a month or two — then the contract has to close, and if it’s a huge contract, you’re looking at nine months later and you got the green light.” Plan for it.

Practically, tracking through long cycles requires three things:

- CRM tagging at the MQL stage. Every lead from a test campaign gets tagged with the experiment name and variant. This tag travels with the contact through every deal stage. If your CRM doesn’t support this natively, a UTM parameter (a tracking code added to the end of a link URL) passed into a hidden form field carries the data through the handoff.

- A multi-touch attribution model that preserves first-touch data as subsequent touchpoints accumulate. Last-touch attribution — crediting the demo booking or the trial activation — erases the upstream experiment data you actually need to close the learning loop.

- A patient experiment log with a scheduled six-month check-in. The two-week read gives you directional signal on proxy events. The six-month read closes the loop on revenue, connecting upstream creative decisions to downstream business outcomes. Both check-ins need to be scheduled before the experiment runs.
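The first two requirements can be sketched in a few lines: read the experiment tag from the landing URL’s UTM parameters, then record touches so that the first one is never overwritten. Using `utm_campaign` for the experiment name and `utm_content` for the variant is an assumed convention, and the contact dictionary stands in for a CRM record; no real CRM API is shown here.

```python
from urllib.parse import parse_qs, urlparse


def experiment_tag_from_url(landing_url):
    """Read experiment name and variant from UTM parameters on the landing URL."""
    params = parse_qs(urlparse(landing_url).query)
    campaign = params.get("utm_campaign", [None])[0]
    if campaign is None:
        return None  # not a tagged test visit
    return {"experiment": campaign, "variant": params.get("utm_content", [None])[0]}


def record_touch(contact, touch):
    """Append a touch to the contact's history; first_touch is set only once."""
    contact.setdefault("touches", []).append(touch)
    contact.setdefault("first_touch", touch)
    return contact


# Hypothetical contact, URL, and dates, for illustration only.
contact = {"email": "buyer@example.com"}
tag = experiment_tag_from_url(
    "https://example.com/demo?utm_source=linkedin"
    "&utm_campaign=exp_cmo_pipeline&utm_content=pain_hook"
)
record_touch(contact, {"date": "2025-01-14", "type": "ad_click", **tag})
record_touch(contact, {"date": "2025-03-02", "type": "demo_booked"})

print(contact["first_touch"]["experiment"], contact["first_touch"]["variant"])
```

When the deal closes months later, `first_touch` still names the experiment and variant, while `touches` keeps the full journey for a multi-touch view.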

What’s the Minimum Viable Experimentation Setup for B2B SaaS?

The minimum viable experimentation setup for B2B SaaS requires five things: a testing budget line item separate from performance spend, a hypothesis log, proxy conversion events tracked in your analytics, a landing page per ICP, and a monthly experiment review. You don’t need a sophisticated testing platform or a data science team to run a real program — you need rigor and consistency.

Here’s the full stack, built in the order that creates the most leverage fastest:

- A dedicated testing budget. Separate this line item from your always-on performance campaigns — even if it’s a small percentage of total spend. Isolation is what keeps experiments alive long enough to produce signal. Mixed budgets create pressure to kill tests early; a separated budget creates the conditions for learning.

- A hypothesis log. A spreadsheet works. Record what you’re testing, which ICP it targets, which hook it uses, when it starts and ends, and what “good” looks like before the test runs. Every test that isn’t in the log isn’t an experiment — it’s just spend.

- Proxy conversion events. Set up form fills, demo requests, or free trial activations as tracked conversion events in your analytics platform. Don’t wait for revenue to assess a test. These leading indicators give you the signal McLellan describes within two weeks — and they’re the bridge between test launch and the eventual closed deal.

- A landing page per ICP. Even minimal personalization — a headline that reflects the specific pain point driving each segment’s click — dramatically improves signal quality. Build one version per active ICP and track them through separate URLs.

- A monthly experiment review. Thirty minutes, once a month. What ran, what signal came back, what the next hypothesis is. This meeting is the moment a testing program becomes a learning engine. Without it, experiments accumulate without producing growth direction.

These five components generate compounding intelligence about your buyers. More sophisticated tools — advanced attribution modeling, multi-variant testing, predictive analysis — build cleanly on top of this foundation once the habits are solid.
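If a spreadsheet feels too loose, the hypothesis log can be sketched as a small record type that writes out to CSV. The field names and the sample entry below are assumptions for illustration; the point is that the definition of “good” and the review date exist before the test launches.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date, timedelta


@dataclass
class Hypothesis:
    name: str
    icp: str
    hook: str
    start: date
    expected: str          # what "good" looks like, written before launch
    review_days: int = 14  # the two-week directional read
    result: str = ""       # filled in at the review

    @property
    def review_date(self):
        return self.start + timedelta(days=self.review_days)


def to_csv(entries):
    """Serialize log entries to CSV text that any spreadsheet can open."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(Hypothesis)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buf.getvalue()


# Hypothetical entry, for illustration only.
entry = Hypothesis(
    name="exp_cmo_pipeline",
    icp="cmo_series_b",
    hook="pain",
    start=date(2025, 1, 13),
    expected="Demo-request rate above the current baseline",
)

print(entry.review_date)
print(to_csv([entry]))
```

The monthly review then becomes a matter of reading the rows whose review date has passed and filling in the result column.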

FAQ: B2B SaaS Experimentation and A/B Testing

What’s the best way to A/B test in B2B SaaS when you have small audiences?

Focus on directional confidence rather than statistical certainty. Use proxy conversion events (demo requests, form fills, free trial activations) instead of waiting for revenue data. Run two-week sprints with a pre-defined hypothesis and document results even from small samples, because the learnings compound. When your pool is too small to split into meaningful cells, run sequential experiments instead: one variant at a time against a consistent baseline, in the same time window each month.

How much of my paid media budget should go to A/B testing in B2B SaaS?

MAVAN’s audit work with B2B SaaS clients consistently recommends allocating 15–20% of paid media spend to a dedicated testing pool, completely isolated from always-on performance campaigns. Mixing test and performance budgets creates pressure to kill experiments before they generate useful signal. The testing line item should be protected from short-term return targets, because its job is learning, not converting — and that distinction is what makes the learning usable.

How do you attribute B2B SaaS experiments when the sales cycle is six to nine months long?

Tag every experiment entrant in your CRM at the moment of first touch — before the deal moves forward. Use a multi-touch attribution model that preserves first-touch data as subsequent touchpoints accumulate. When the deal closes, you can trace the buyer’s journey back to the original experiment. The key is setting up tagging infrastructure before the experiment runs, not after — because the contact record is the only durable thread connecting an ad click in January to a signed contract in September.

What should B2B SaaS companies test first — creative, landing pages, or product?

Start with paid creative, then landing pages. Product-level experimentation is the most resource-intensive and slowest to return signal in a long-cycle B2B environment. Creative testing — which ICP you target, which hook you use, which message variant you run — returns directional signal within two weeks and requires no engineering capacity. Landing pages come second, because they’re where the promise of your ad either gets kept or quietly broken. Product experimentation becomes high-value once the upstream funnel is optimized and generating reliable volume.

How do I know if a B2B SaaS experiment is actually working?

Within two weeks of launching a well-structured experiment, you should have directional signal. Look at proxy conversion events: are people completing the form, requesting the demo, starting the trial? If those leading indicators are trending in the right direction, continue. If they’re flat or declining after two weeks with reasonable impressions, iterate the hypothesis. Don’t let an underperforming test run indefinitely — the two-week decision point is what keeps the program efficient rather than expensive.

How do I build an ICP-based creative testing framework without a large team?

Define three distinct ICP segments by job title, company stage, or primary pain point. For each segment, write three message variants around different hooks — pain-based, outcome-based, and process-based work well as a starting set. Run each ICP-hook combination in a separate campaign with a dedicated budget. Review results every two weeks, retire the clear underperformers, iterate on the strong directions, and log every decision. Over three to four months, this process produces a genuine creative intelligence map — an evidence base for what each buyer type responds to, and why.

So What Does a Real B2B SaaS Experimentation Program Actually Look Like?

A real B2B SaaS experimentation program is built in paid creative and landing pages — not in product dashboards. It requires a testing budget isolated from performance spend, ICP-specific hypotheses documented before each test runs, a two-week directional review cadence, and CRM tracking that follows experiment entrants from first touch through to closed contract. Statistical perfection isn’t the standard. Documented, consistent, compounding directional learning is what the system is built for. Companies running this system don’t just test faster — they get more precise about their buyers every single month, and that precision shows up in CAC (customer acquisition cost), pipeline quality, and close rates long before it shows up in a board slide.

Ready to Build the System? Here’s Your First Move.

If you’ve read this and recognized the gap between the experiments you’re running and the testing system you want to have — no dedicated budget line, no hypothesis log, no ICP-specific landing pages — that gap is completely closeable, and faster than you’d expect.

If you have a paid media program but no structured creative testing framework, then start here: Name one ICP. Write one pain-based hook and one aspiration-based hook. Set a two-week window. Run them against each other in isolated campaigns with a protected budget, and document what happens. That’s your first real experiment — and the foundation of everything that follows.

When you’re ready to scale that system — to build the full creative testing framework, attribution infrastructure, and experiment pipeline across your entire funnel — MAVAN embeds a fully cross-functional growth team into your business in days, not months. We’ve helped grow 70+ venture-backed companies using exactly this approach.

Book a complimentary consultation with one of our experts to learn how MAVAN can help your business grow.

