A little reset goes a long way.

- To uncover the true business impact of your marketing efforts, turn off your paid marketing.

- Hitting pause on paid allows your marketers and analytics teams to reset and reestablish a baseline for all media – organic and paid.

- While it may seem radical, this paused spend approach gives control back to your team, delivers trustworthy data, and eliminates bad incentives that can impede your goals.

Incrementality: the most fashionable word in the mobile app marketer’s lexicon for five years running. Incrementality is no longer an aspirational objective; it’s table stakes, and for good reason. In an ecosystem brimming with fraud and misattribution, mobile growth and performance marketing teams lean on incrementality testing to quantify and validate the true business impact of their paid tactics, not just average cost per acquisition (CPA) and return on ad spend (ROAS).

As the popularity of (and appetite for) incrementality has grown, so have the options for implementing such tests. You’re likely familiar with the most popular methodologies available to you: intent-to-treat (ITT), PSA and ghost ads. However, there’s another option worth considering that most advertisers overlook. It’s one that ad networks will never recommend and internal marketing orgs are hesitant to acknowledge.

Turn off all your paid marketing — all of it.

Although this may seem radical (especially in today’s mobile-dominated world), this methodology has no barrier to entry, requires no additional cost (in fact, it saves money while spend is paused), depends on no ad partner’s self-reported results, offers advertisers the most control, and can be implemented across all operating systems.

So, how does it work? At a high level, the philosophy behind this approach is very similar to that of the elimination diet. In an elimination diet, you remove all potentially troublesome foods, then slowly reintroduce them one at a time to understand the effects of each. Similarly, when you turn off all your paid spend, you’re resetting and reestablishing your true, organic baseline in terms of your business’s digital presence. The goal is to remove every variable you can govern so that what remains can serve as your control. The time required for this “calm” period, the period in which all of your paid marketing is turned off, depends entirely on the advertiser, which is why it’s important to leverage your analytics and data science team.

That team will have recommendations on the correct time frame, and can help you cement your paid reintroduction plan (by channel, OS, country, etc.). As you reintroduce channels, remember to do so one at a time and to keep spend consistent during this phase. This will allow you to measure the impact that paid has on organic (did it stay flat, go up, or go down) and on the total (did it go up with paid, and did it grow by as much as the volume attributed to paid). Your data science team can help with both the measurement and the analysis. Ultimately, this is going to be tailored to each advertiser, but that’s what makes this methodology so great: you and your team have all the control, transparency, and data.
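To make the organic-versus-total comparison concrete, here is a minimal Python sketch. It assumes you have daily conversion counts for the calm period and for one channel’s reintroduction window, and that the total during reintroduction is simply organic plus attributed paid. The function name, the field names, and the sample numbers are all hypothetical, and the sketch ignores seasonality and statistical noise, which your data science team would need to account for.

```python
from statistics import mean


def incrementality_summary(baseline_total, test_organic, test_attributed_paid):
    """Compare one channel's reintroduction window against the paused-spend baseline.

    Each argument is a list of daily conversion counts (hypothetical data):
      baseline_total       -- totals during the calm period (all paid off)
      test_organic         -- conversions attributed to organic after reintroduction
      test_attributed_paid -- conversions attributed to the reintroduced paid channel
    """
    baseline = mean(baseline_total)
    organic = mean(test_organic)
    attributed = mean(test_attributed_paid)
    total = organic + attributed  # assumption: total = organic + attributed paid

    incremental = total - baseline      # how much the total actually grew
    organic_shift = organic - baseline  # negative: paid is claiming organic users

    # Share of attributed paid conversions that showed up as growth in the total.
    rate = incremental / attributed if attributed else 0.0

    return {
        "baseline_per_day": baseline,
        "incremental_per_day": incremental,
        "organic_shift_per_day": organic_shift,
        "incrementality_rate": rate,
    }


# Made-up numbers for illustration only.
summary = incrementality_summary(
    baseline_total=[100, 102, 98, 100],
    test_organic=[90, 92, 88, 90],
    test_attributed_paid=[40, 42, 38, 40],
)
print(summary)
```

In this made-up example, the channel is credited with 40 conversions a day, but the total only grew by 30 because organic dropped by 10. That gap between attributed and incremental volume is exactly what the reintroduction phase is meant to surface.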

How the Paused Spend Approach is Different

If you move forward with this approach, you should understand how it compares to other methodologies and their limitations:

- Although intent-to-treat or holdout tests have no cost and can be implemented on the client side, they often produce noisy and potentially biased results.

- PSA, on the other hand, has essentially zero noise as it serves ads to the test and control groups, allowing advertisers to obtain data on both. Unfortunately, PSA testing is costly and, if not implemented correctly, can create selection biases as test and control groups may be optimized differently.

- The ghost ads methodology is widely considered the “best” approach. It closes the gaps in the previous two: no noise, limited-to-no bias, and, best of all, free to run. However, this approach is less transparent, relies on self-attribution from the ad partner running the test, and can only be implemented at the individual partner level rather than across your whole portfolio. It also relies on identifiers (such as device IDs) that, thanks to changes in iOS, are increasingly less accessible.

It would be naive not to acknowledge and address the hurdles that come with the paused spend approach. It requires patience, strong analytical skills, a sound strategy, and confidence in your attribution windows and internal data pipelines. You can’t simply rely on an ad partner to implement the test and provide results for you. This approach is for the advanced marketer who has tested other methodologies and experienced their limitations firsthand.

The Big Benefit: Corrected Incentives

If you do fit the aforementioned persona, and you think you’re ready to try the paused spend approach, there might still be a question lingering in your mind: “If pausing spend is a superior approach, why has no one ever recommended it to me?” Skepticism is to be expected, especially since this approach runs contrary to what many people believe to be true.

But beyond the benefits mentioned above, what many marketers ignore is that the paused spend methodology takes internal and external incentives out of the test. And while you may not think it, there are lots of incentives at play around your mobile marketing. There’s an external incentive from your ad partners for you to spend more. There’s an internal marketing team incentive to show positive ad performance at all costs. There’s an internal finance incentive to spend the allocated budgets. You get the picture.

This misaligned incentive structure is reminiscent of the early days of mobile app install campaigns. During that time, the incentive for app marketers revolved around install volume and efficiency. Ad partners and nefarious actors were well aware of this, and so began the days of rampant click and install fraud. On paper, marketers saw what they had hoped to see, and they had no incentive to dig deeper or understand the data further. The high volume and unbelievable efficiency for the dollars they were spending brought internal praise to the team, which got them more dollars from finance, which led them to spend more money with those ad partners.

And it’s the reason why we lean on incrementality tests today.

When you remove incentives from the equation, as you do with the paused spend approach, the focus of the test realigns with its original goal: to quantify and validate the true business impact of paid marketing tactics.

Reset your baseline, understand where your business stands, and remove misaligned incentives. When you restart your mobile marketing programs, you’ll do so from a place of understanding, insight, and control that could help launch you to new heights.

Want more growth insights?

Thank you! form is submitted

[hubspot type=”form” portal=”20951211″ id=”9c538ed2-fb12-45f1-a573-ad7953c058cc”]

Related Content

-

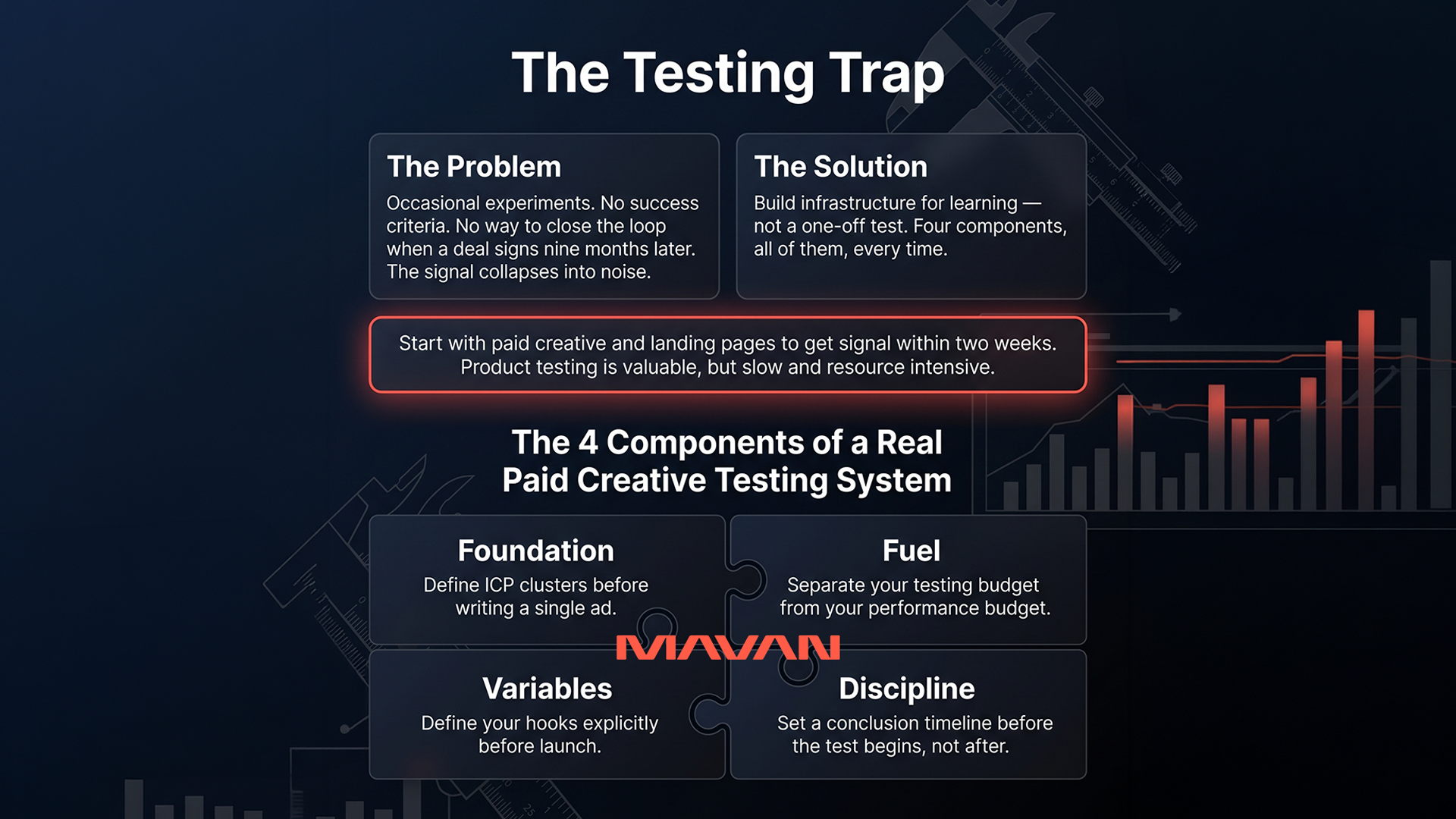

How Do You Build a Real A/B Testing Framework for B2B SaaS?

A functioning B2B SaaS experimentation program is built around paid creative testing and landing page optimization. Even with long sales cycles, you can know if an experiment is heading in the right direction within two weeks — as long as you track from first touch all the way through to closed contract.

-

Why Is Your B2B SaaS Attribution Broken — and How Do You Fix It?

Most B2B SaaS attribution stacks fail in one of two predictable patterns: relying solely on last-touch data, or being bolted together piece by piece until the parts no longer communicate. The fix starts with mapping every top- to mid-funnel touchpoint in sequence and tying that complete journey back to the moment a contract closes. TLDR…

-

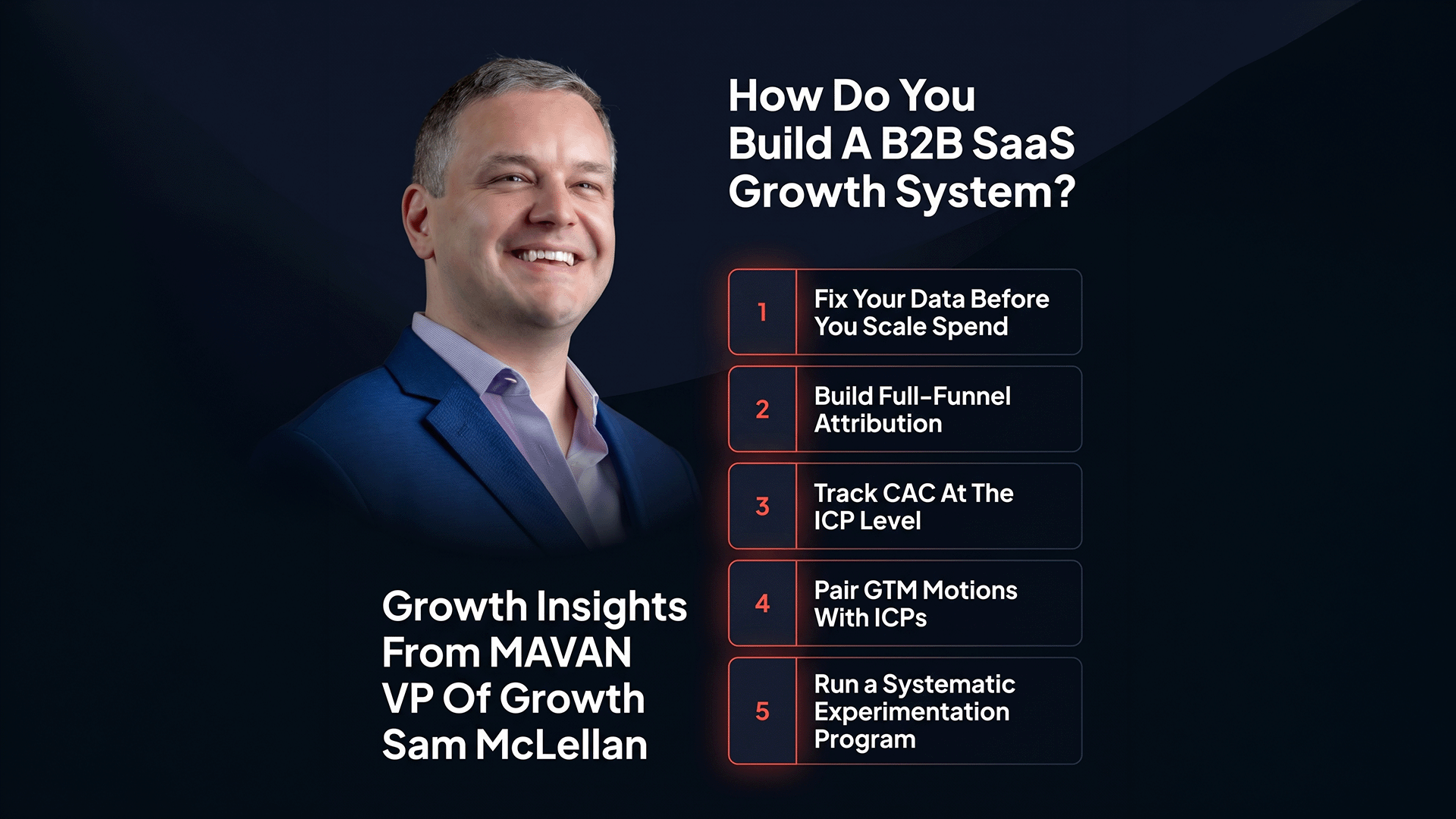

How Do You Build a B2B SaaS Growth System That Scales Beyond Founder-Led?

The fastest-scaling B2B SaaS companies are adopting measurement and experimentation disciplines from mobile gaming — including granular multi-touch attribution, ICP-segmented CAC tracking, and systematic creative testing. Companies that close that gap now are building compounding advantages that won’t be easy to replicate later. TLDR — The 10 Most Important B2B SaaS Growth Lessons Your board…